Submitted: 27 April 2023

Posted: 28 April 2023


Abstract

Keywords:

1. Introduction

- It is conceptually simple, as it translates the problem of explainable RL into a supervised learning setting.

- DTs are fully transparent and (at least for limited depth) offer a set of easily understandable rules.

- The approach is oracle-agnostic: it does not rely on the agent being trained by a specific RL algorithm, as only a training set of state-action pairs is required.

2. Related Work

3. Methods

3.1. Environments

3.2. Deep Reinforcement Learning

3.3. Decision Trees

3.4. DT Training Methods

3.4.1. Episode Samples (EPS)

- A DRL agent (“oracle”) is trained to solve the problem posed by the studied environment.

- The oracle, acting according to its trained policy, is evaluated for a set number of episodes. At each time step, the state of the environment and the action of the agent are logged until a predefined total number of samples has been collected.

- A decision tree (CART or OPCT) is trained from the samples collected in the previous step.
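The sampling part of EPS (step 2) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `ToyEnv` and `oracle` are hypothetical stand-ins for a Gym environment and a trained DRL agent, and the tree-fitting step (CART or OPCT) is left out so that the block runs with the standard library only.

```python
import random
from typing import Callable, List, Tuple

State = Tuple[float, float]


class ToyEnv:
    """Hypothetical 2-D stand-in for a Gym environment (position, velocity)."""

    def __init__(self, seed: int = 0) -> None:
        self.rng = random.Random(seed)
        self.state: State = (0.0, 0.0)

    def reset(self) -> State:
        self.state = (self.rng.uniform(-1.0, 1.0), self.rng.uniform(-0.1, 0.1))
        return self.state

    def step(self, action: int) -> Tuple[State, bool]:
        pos, vel = self.state
        vel = 0.9 * vel + (0.02 if action == 1 else -0.02)
        pos = pos + vel
        self.state = (pos, vel)
        return self.state, abs(pos) > 2.0  # episode ends if the position leaves [-2, 2]


def oracle(state: State) -> int:
    """Hypothetical stand-in for a trained DRL agent: push toward position 0."""
    pos, vel = state
    return 1 if pos + 5.0 * vel < 0.0 else 0


def collect_episode_samples(
    env: ToyEnv, policy: Callable[[State], int], n_samples: int, max_steps: int = 200
) -> Tuple[List[State], List[int]]:
    """EPS step 2: log (state, action) pairs of the acting oracle until n_samples are collected."""
    states: List[State] = []
    actions: List[int] = []
    while len(states) < n_samples:
        state = env.reset()
        for _ in range(max_steps):
            action = policy(state)
            states.append(state)
            actions.append(action)
            if len(states) == n_samples:
                break
            state, done = env.step(action)
            if done:
                break
    return states, actions


if __name__ == "__main__":
    X, y = collect_episode_samples(ToyEnv(seed=42), oracle, n_samples=1000)
    print(len(X), sum(y))  # EPS step 3 would now fit a CART or OPCT on (X, y)
```

Because only (state, action) pairs are logged, the same loop works for any oracle that maps a state to an action, which is the oracle-agnostic property stated in the Introduction.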

3.4.2. Bounding Box (BB)

- The oracle is evaluated for a certain number of episodes. Let $l_i$ and $u_i$ be the lower and upper bound of the visited points in the $i$-th dimension of the observation space and $\mathrm{IQR}_i$ their interquartile range (the difference between the 75th and the 25th percentile).

- Based on these statistics of the visited points in the observation space, we draw a certain number of samples from the uniform distribution within a hyper-rectangle whose side lengths are derived from these bounds and interquartile ranges. A side is clipped should it exceed the predefined boundaries of the environment.

- For all investigated depths d, OPCTs are trained from the dataset consisting of the samples and the corresponding actions predicted by the oracle.
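A sketch of the BB sampling step, with $l_i$, $u_i$ the lower and upper bound of the visited points in dimension $i$ and $\mathrm{IQR}_i$ their interquartile range. The text does not give the exact side lengths here, so this sketch assumes that each range $[l_i, u_i]$ is widened by $k \cdot \mathrm{IQR}_i$ on both sides; the margin factor `k = 1.5` and the function names are hypothetical choices for illustration.

```python
import random
import statistics
from typing import List, Sequence, Tuple

Box = List[Tuple[float, float]]


def bounding_box(
    visited: Sequence[Sequence[float]],
    env_low: Sequence[float],
    env_high: Sequence[float],
    k: float = 1.5,  # hypothetical margin factor, not taken from the paper
) -> Box:
    """Per dimension i: [l_i - k*IQR_i, u_i + k*IQR_i], clipped to the environment bounds."""
    box: Box = []
    for i in range(len(visited[0])):
        column = [point[i] for point in visited]
        low, high = min(column), max(column)
        q1, _, q3 = statistics.quantiles(column, n=4)  # 25th, 50th, 75th percentile
        iqr = q3 - q1
        box.append((max(low - k * iqr, env_low[i]), min(high + k * iqr, env_high[i])))
    return box


def sample_box(box: Box, n_samples: int, seed: int = 0) -> List[List[float]]:
    """Draw n_samples points uniformly from the hyper-rectangle."""
    rng = random.Random(seed)
    return [[rng.uniform(a, b) for a, b in box] for _ in range(n_samples)]
```

Each sampled point would then be labeled with the oracle's predicted action, and OPCTs of every investigated depth d trained on the resulting dataset. Unlike EPS, the samples are spread over the whole box rather than concentrated along the oracle's trajectories.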

3.4.3. Iterative Training of Explainable RL Models (ITER)

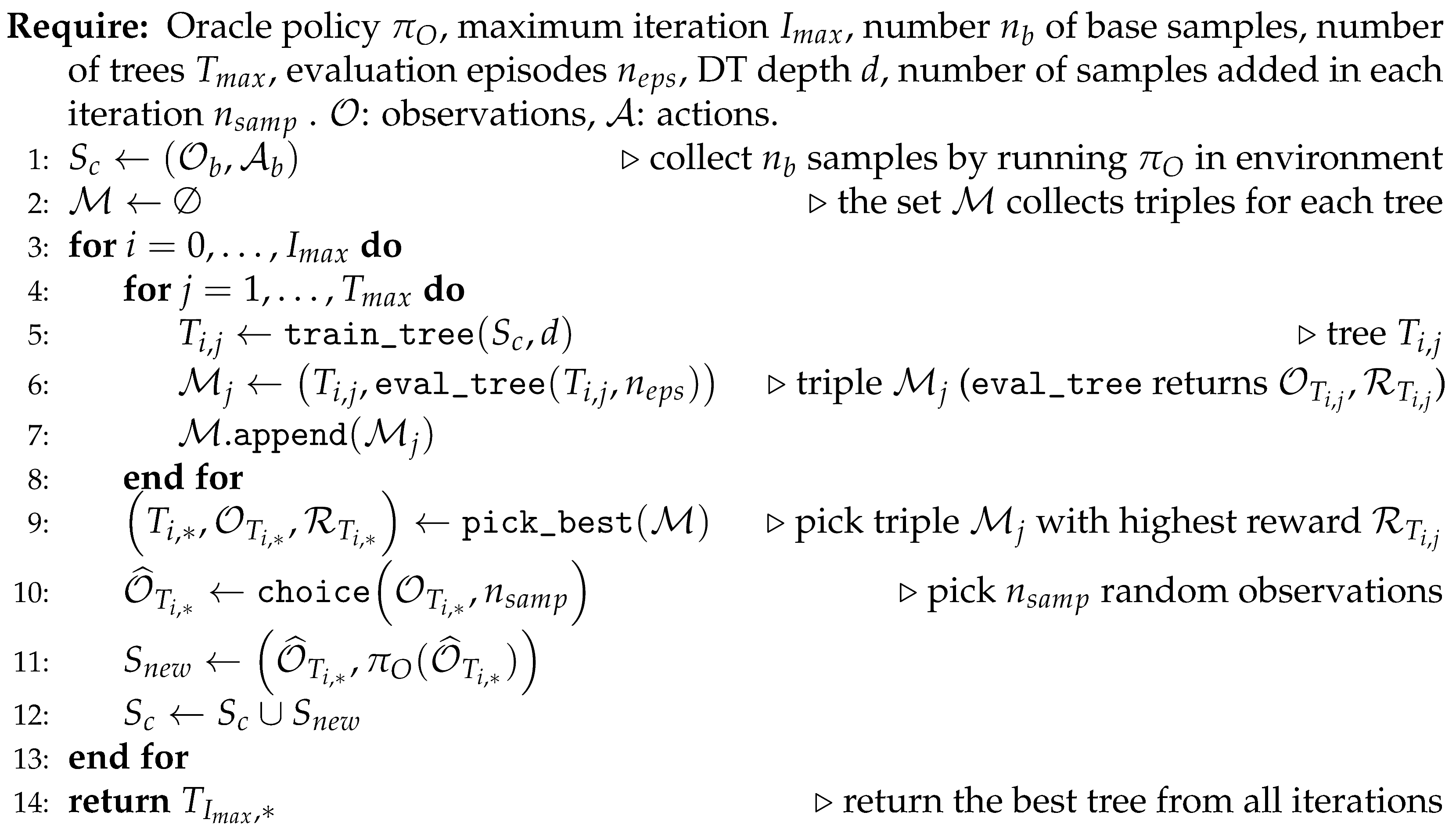

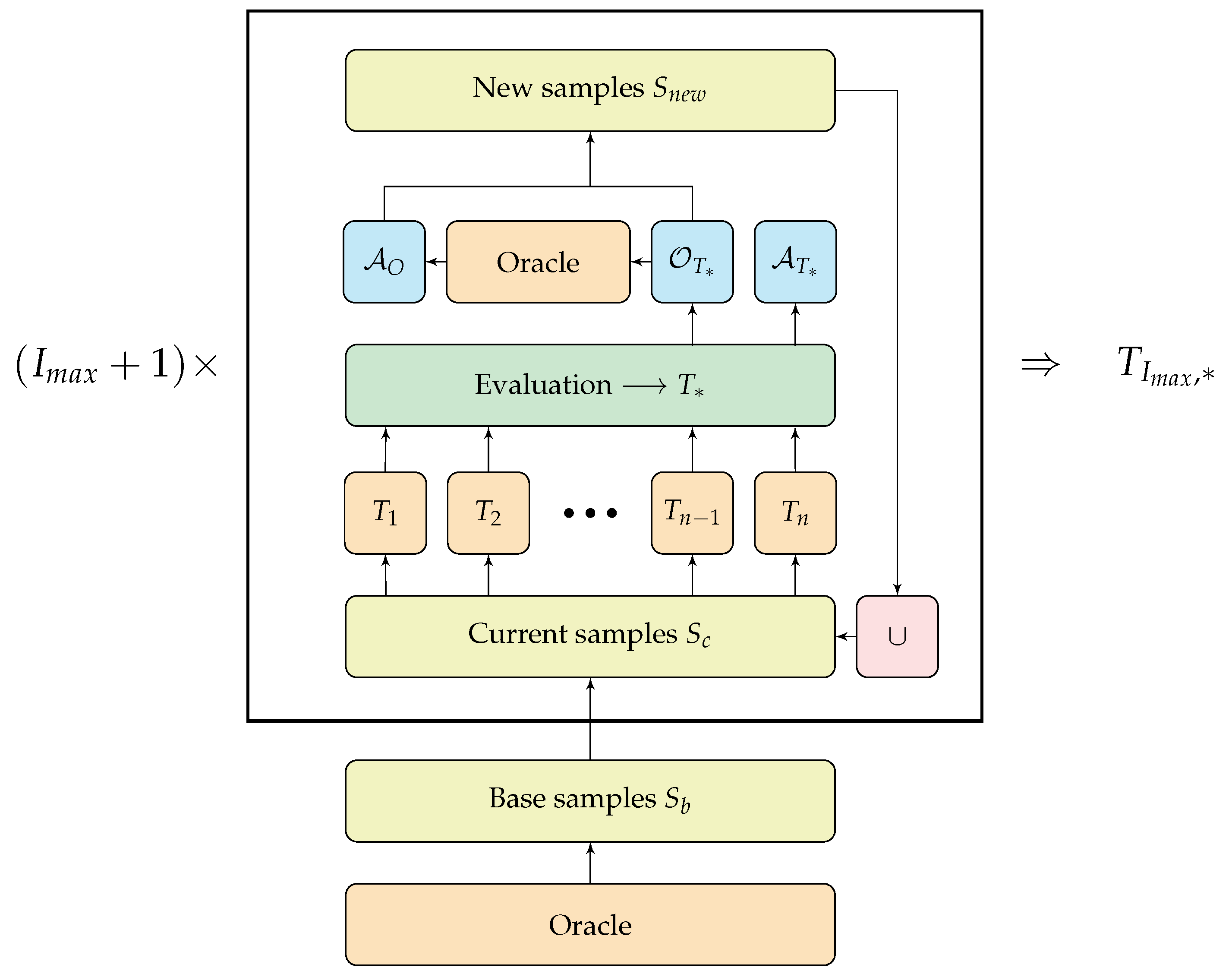

Algorithm 1. Iterative Training of Explainable RL Models (ITER)
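The listing of Algorithm 1 is not reproduced in this text. The sketch below follows the mechanism described in the Discussion: a tree trained on oracle samples acts in the environment, the states it visits are labeled with the oracle's actions and added to the training set, and the tree is retrained. Everything concrete is an assumption for illustration: the 1-D toy task, the hypothetical `oracle`, a pure-Python depth-1 stump standing in for CART/OPCT, the iteration counts, and keeping the best-scoring tree.

```python
import random
from typing import Callable, List, Optional, Tuple

Policy = Callable[[float], int]
TARGET = 0.3  # hypothetical goal position of the toy task


def oracle(x: float) -> int:
    """Hypothetical oracle: move right (1) below the target, left (0) otherwise."""
    return 1 if x < TARGET else 0


def rollout(policy: Policy, rng: random.Random, steps: int = 30) -> Tuple[List[float], float]:
    """Run one toy episode; return the visited states and the episode return."""
    x = rng.uniform(-1.0, 1.0)
    visited: List[float] = []
    ret = 0.0
    for _ in range(steps):
        visited.append(x)
        x += 0.1 if policy(x) == 1 else -0.1
        ret -= abs(x - TARGET)
    return visited, ret


def fit_stump(xs: List[float], ys: List[int]) -> Policy:
    """Depth-1 tree for 1-D inputs and binary actions: threshold with fewest errors."""
    pairs = sorted(zip(xs, ys))
    n, total_ones = len(pairs), sum(ys)
    best_err, best = n + 1, (pairs[0][0], 0, 0)
    ones_left = 0
    for k in range(1, n):
        ones_left += pairs[k - 1][1]
        if pairs[k - 1][0] == pairs[k][0]:
            continue  # cannot split between identical inputs
        ones_right = total_ones - ones_left
        l_lab = int(2 * ones_left > k)  # majority label left of the split
        r_lab = int(2 * ones_right > n - k)  # majority label right of the split
        err = min(ones_left, k - ones_left) + min(ones_right, n - k - ones_right)
        if err < best_err:
            best_err, best = err, ((pairs[k - 1][0] + pairs[k][0]) / 2, l_lab, r_lab)
    thr, l_lab, r_lab = best
    return lambda x: l_lab if x <= thr else r_lab


def iter_train(n_init: int = 50, n_iter: int = 4, episodes: int = 3, seed: int = 0):
    """ITER-style loop: let the tree act, label its visited states with the oracle, refit."""
    rng = random.Random(seed)
    xs: List[float] = []
    while len(xs) < n_init:  # initial samples from oracle episodes (as in EPS)
        xs.extend(rollout(oracle, rng)[0])
    ys = [oracle(x) for x in xs]
    best_tree: Optional[Policy] = None
    best_return = float("-inf")
    for _ in range(n_iter):
        tree = fit_stump(xs, ys)
        new_states: List[float] = []
        total = 0.0
        for _ in range(episodes):
            visited, ret = rollout(tree, rng)
            new_states.extend(visited)
            total += ret
        if total / episodes > best_return:  # keep the best tree so far (assumption)
            best_tree, best_return = tree, total / episodes
        xs.extend(new_states)  # states visited by the tree ...
        ys.extend(oracle(x) for x in new_states)  # ... labeled with oracle actions
    return best_tree, best_return
```

In this toy task the first tree is already good, so the loop only demonstrates the mechanics. The benefit described in the Discussion appears when the initial tree drifts into regions the oracle episodes never covered, because exactly those states are then labeled and added.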

3.5. Experimental Setup

4. Results

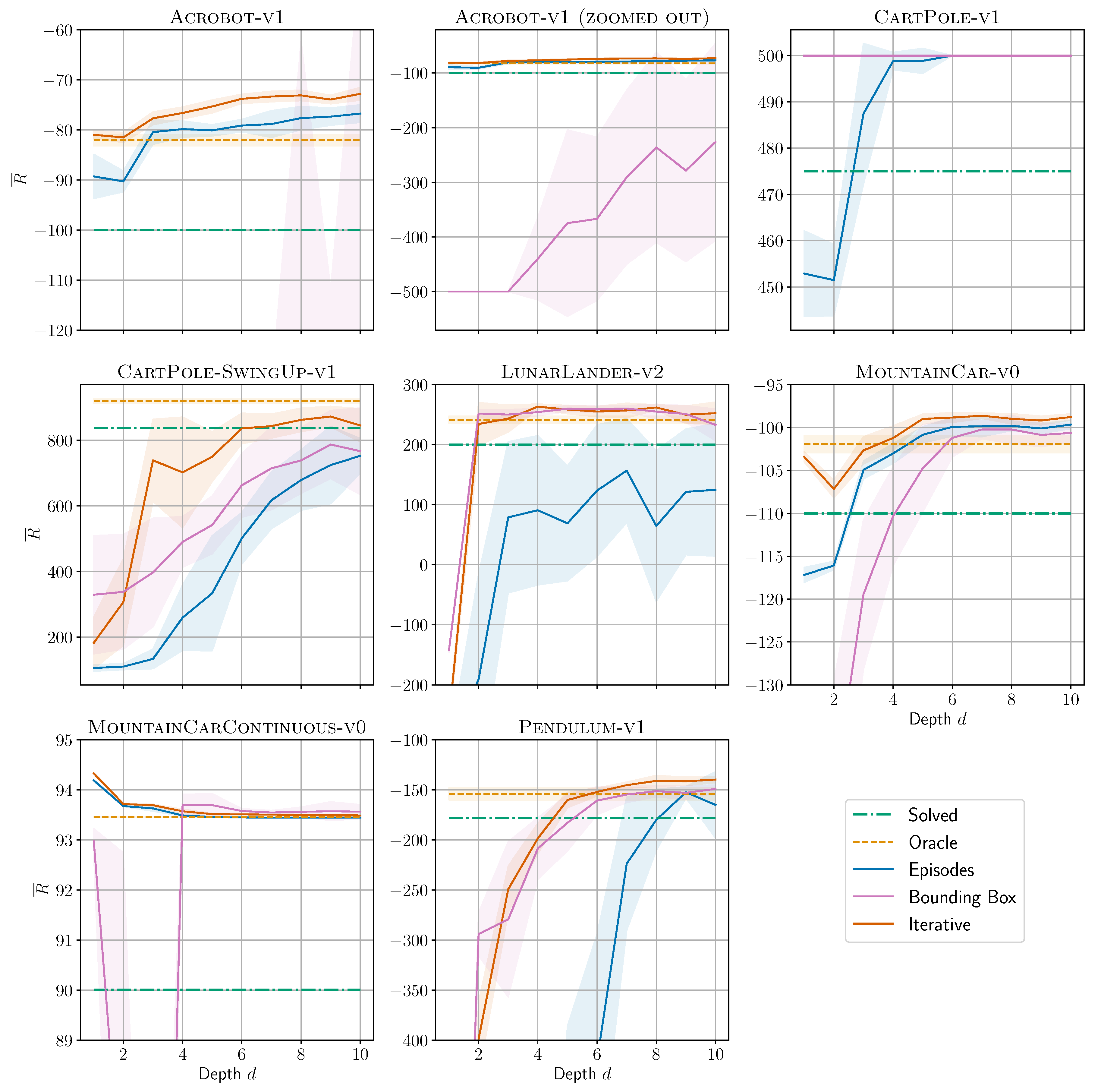

4.1. Solving Environments

4.2. Computational Complexity

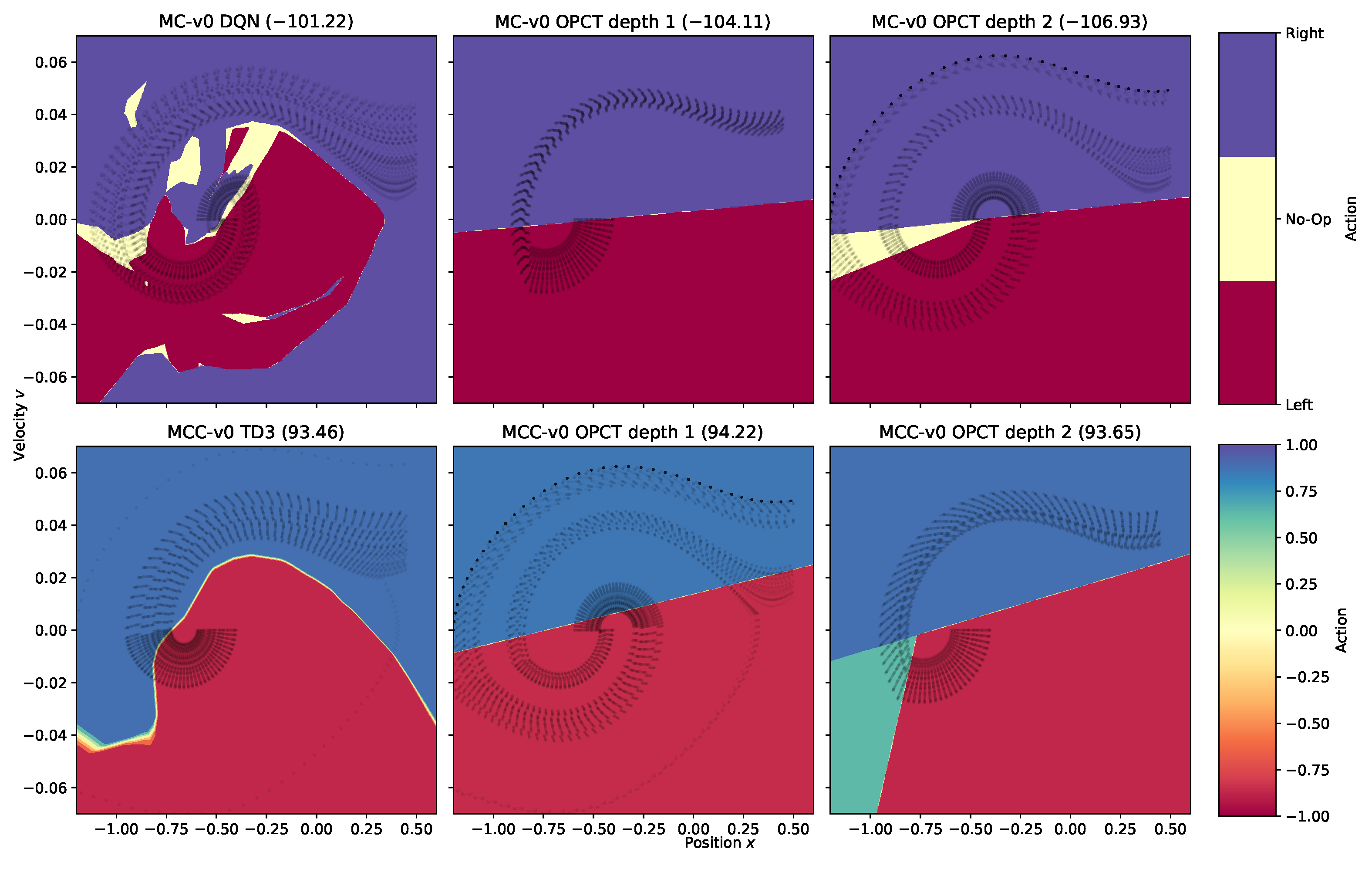

4.3. Decision Space Analysis

(a) Solving an environment

| Environment | Model | EPS + CART | EPS + OPCT | BB + OPCT | ITER + CART | ITER + OPCT |
|---|---|---|---|---|---|---|
| Acrobot-v1 | DQN | 1 | 1 | 1 | 1 | 1 |
| CartPole-v1 | PPO | 3 | 3 | 1 | 3 | 1 |
| CartPole-SwingUp-v1 | DQN | 10 | 10 | 10 | 10 | 7 |
| LunarLander-v2 | PPO | 10 | 10 | 2 | 10 | 2 |
| MountainCar-v0 | DQN | 3 | 3 | 5 | 3 | 1 |
| MountainCarContinuous-v0 | TD3 | 1 | 1 | (1) | 1 | 1 |
| Pendulum-v1 | TD3 | 10 | 9 | 6 | 7 | 5 |
| Sum | | 38 | 37 | 35 | 35 | 18 |

(b) Surpassing the oracle

| Environment | Model | EPS + CART | EPS + OPCT | BB + OPCT | ITER + CART | ITER + OPCT |
|---|---|---|---|---|---|---|
| Acrobot-v1 | DQN | 3 | 3 | 2 | 1 | |
| CartPole-v1 | PPO | 5 | 6 | 1 | (3) | 1 |
| CartPole-SwingUp-v1 | DQN | | | | | |
| LunarLander-v2 | PPO | 2 | 3 | | | |
| MountainCar-v0 | DQN | 3 | 5 | 6 | 3 | 4 |
| MountainCarContinuous-v0 | TD3 | 1 | 4 | 2 | 1 | |
| Pendulum-v1 | TD3 | 9 | 8 | 10 | 6 | |
| Sum | | 44 | 41 | 26 | | |
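Note 3 below suggests that each entry in this table is the smallest tree depth d whose tree reaches a threshold: presumably the environment's solving threshold in panel (a) and the oracle's own return in panel (b). A small helper under that reading; the function name and the example returns in the usage are made up, and the treatment of parenthesized entries and of unsolved cases in the Sum rows is not modeled.

```python
from typing import Dict, Optional


def solved_depth(
    mean_return_by_depth: Dict[int, float], threshold: float, strict: bool = False
) -> Optional[int]:
    """Smallest depth whose mean return reaches (or, if strict, exceeds) the threshold."""
    for depth in sorted(mean_return_by_depth):
        ret = mean_return_by_depth[depth]
        if ret > threshold or (not strict and ret == threshold):
            return depth
    return None  # no investigated depth reaches the threshold


if __name__ == "__main__":
    # Made-up mean returns per depth, compared against a threshold of -110
    print(solved_depth({1: -130.0, 2: -112.0, 3: -95.0, 4: -90.0}, -110.0))
```

The `strict` flag covers the "surpassing" case, where a tree should beat the oracle rather than merely match it.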

| Environment | dim D | Algorithm |
|---|---|---|
| Acrobot-v1 | 6 | EPS |
| | | BB |
| | | ITER |
| CartPole-v1 | 4 | EPS |
| | | BB |
| | | ITER |
| MountainCar-v0 | 2 | EPS |
| | | BB |
| | | ITER |

4.4. Explainability for Oblique Decision Trees in RL

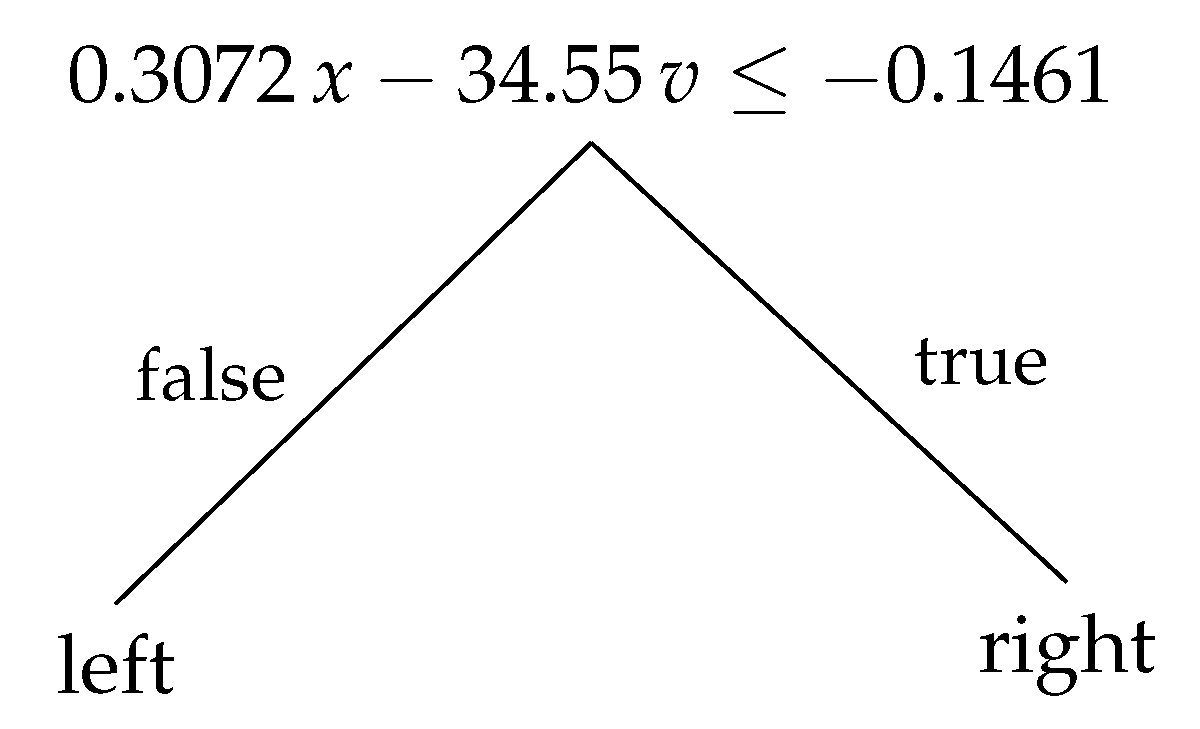

4.4.1. Decision Trees: Compact and Readable Models

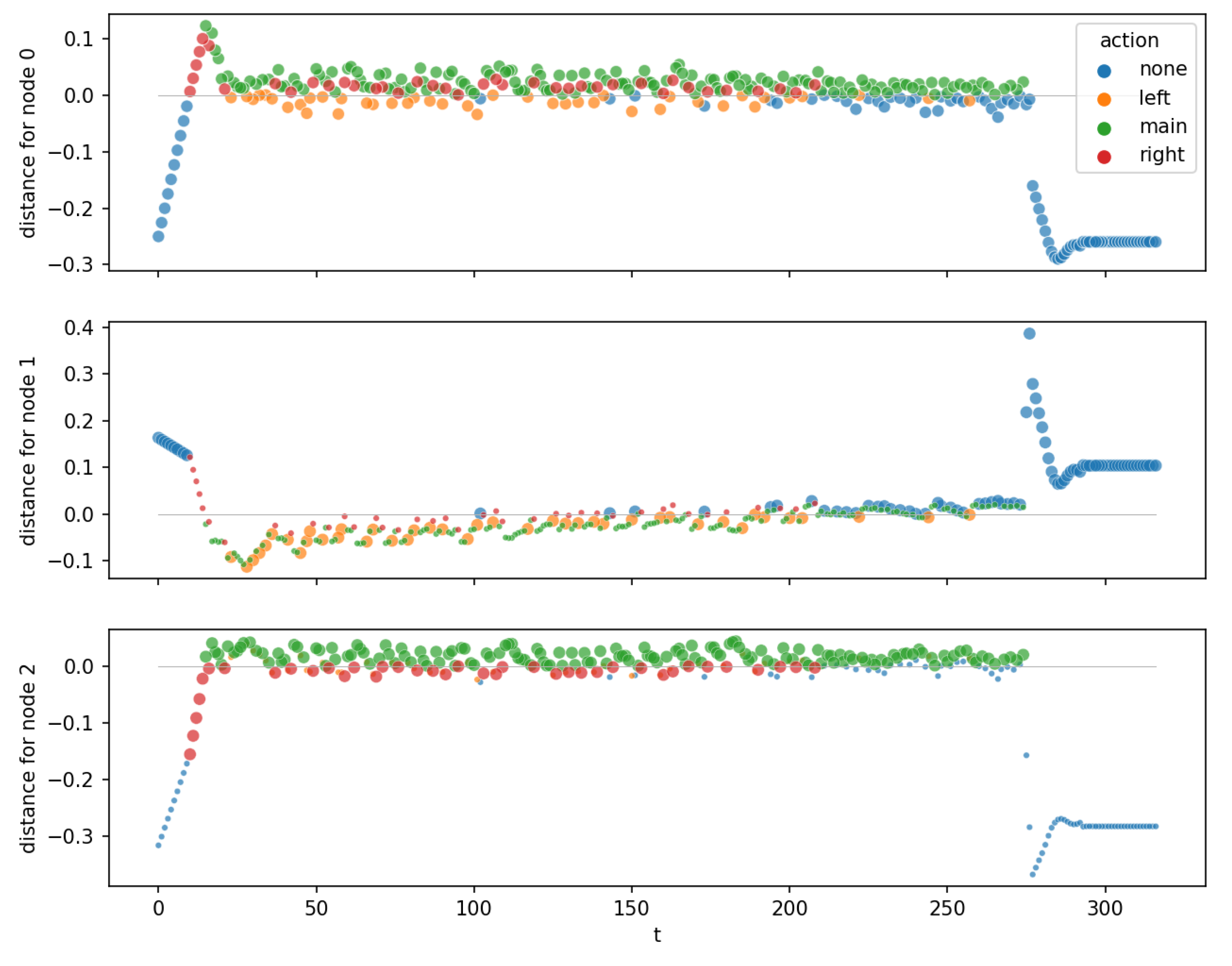

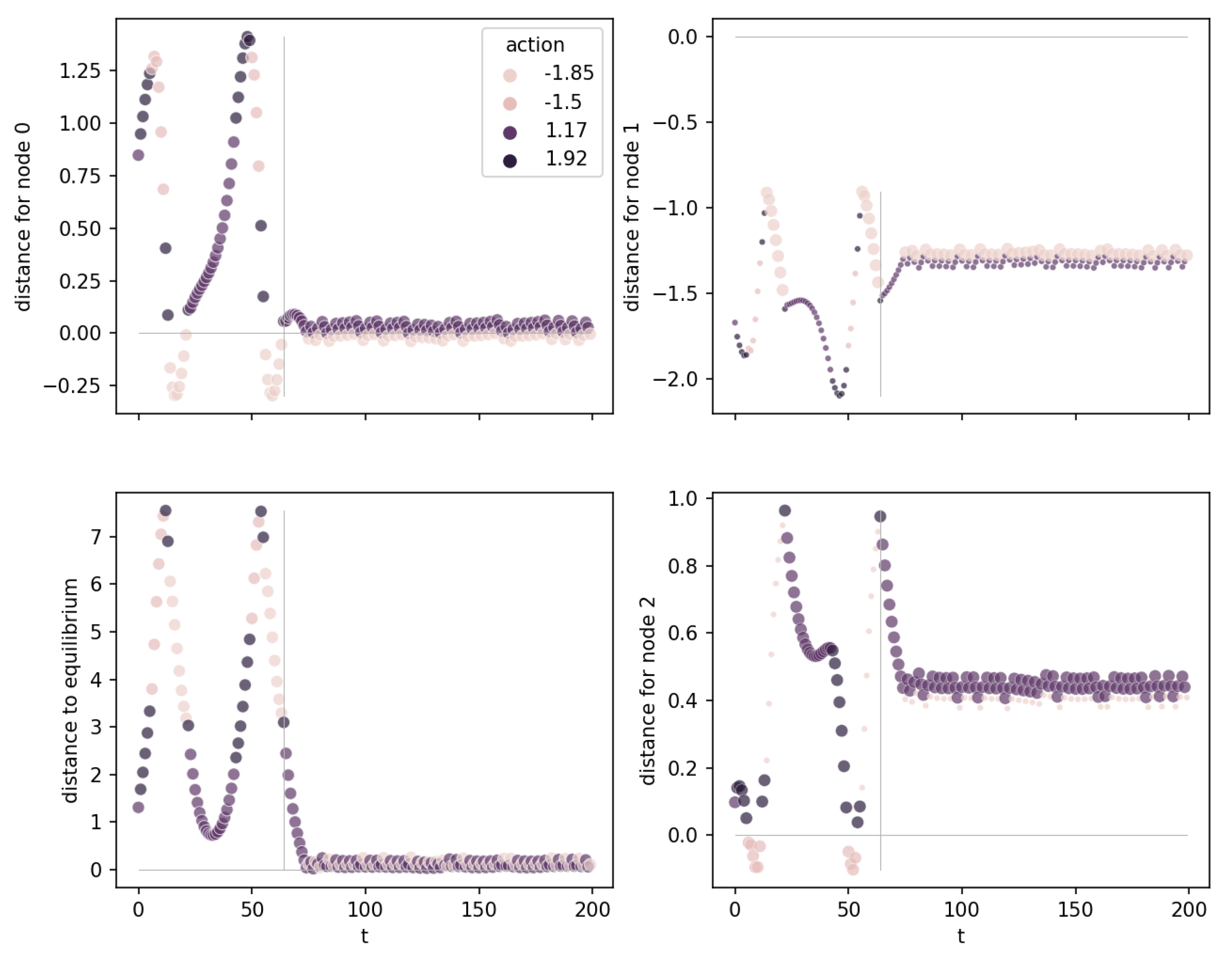

4.4.2. Distance to Hyperplanes

- equilibrium nodes (or attracting nodes): nodes whose distance is near zero for all observation points after a transient period. These nodes occur only in environments where an equilibrium has to be reached.

- swing-up nodes: nodes whose distance does not stabilize close to zero. These nodes are responsible for the swing-up, i.e., for bringing energy into the system. The trees for MountainCar, MountainCarContinuous, and Acrobot consist only of swing-up nodes because, in these environments, the goal state is not an equilibrium.
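For an oblique split node with hyperplane w · x + b = 0 (the symbols w and b are our notation), the signed Euclidean distance of an observation x is (w · x + b) / ‖w‖. A sketch of the node classification described above, applied to a trajectory of observations; the transient length and the "near zero" tolerance are hypothetical parameters, not values from the paper.

```python
import math
from typing import Sequence


def hyperplane_distance(w: Sequence[float], b: float, x: Sequence[float]) -> float:
    """Signed Euclidean distance of x from the hyperplane w . x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm


def node_type(
    w: Sequence[float],
    b: float,
    trajectory: Sequence[Sequence[float]],
    transient: int = 50,  # hypothetical length of the transient period
    tol: float = 0.05,  # hypothetical "near zero" tolerance
) -> str:
    """'equilibrium' if every post-transient observation lies within tol of the hyperplane."""
    tail = trajectory[transient:]
    if tail and all(abs(hyperplane_distance(w, b, x)) < tol for x in tail):
        return "equilibrium"
    return "swing-up"
```

Normalizing by ‖w‖ makes distances comparable across nodes, since an oblique split can be rescaled arbitrarily without changing its decisions.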

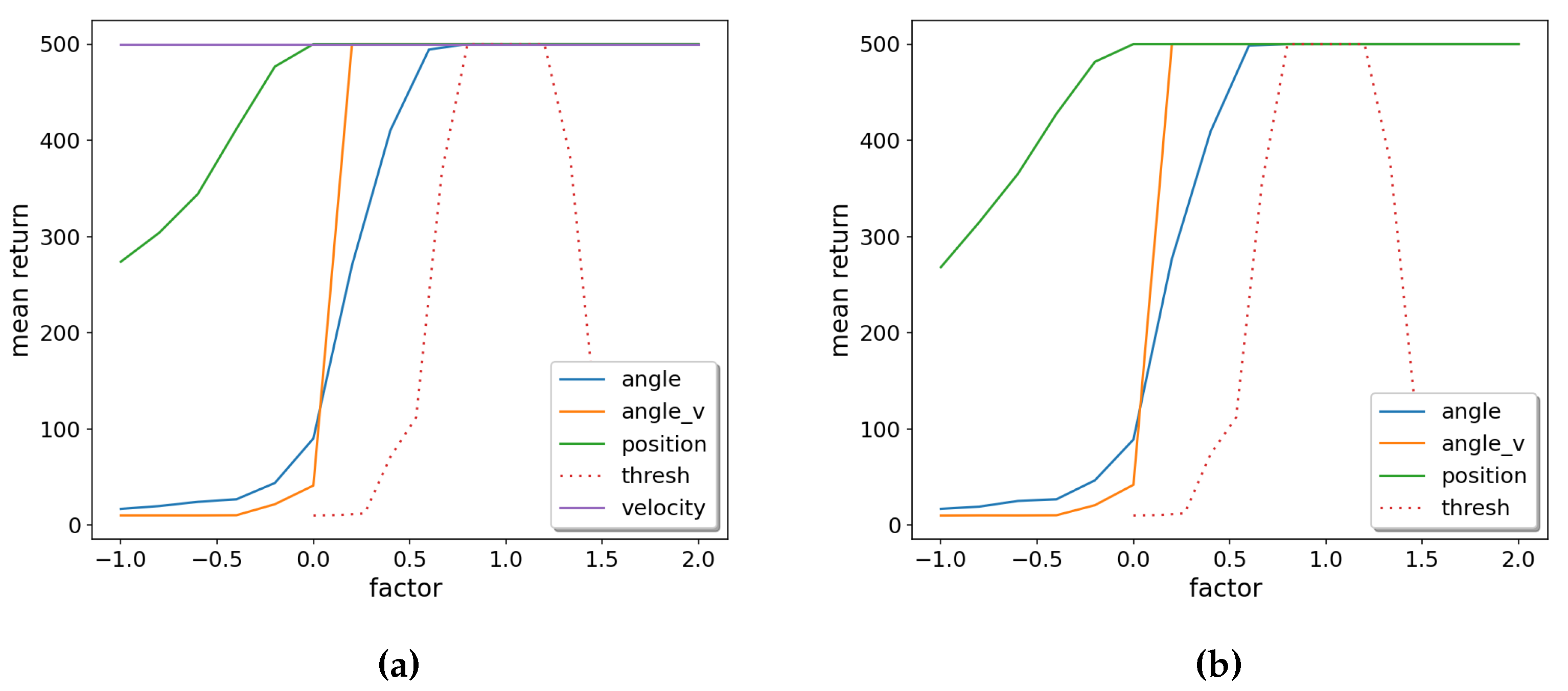

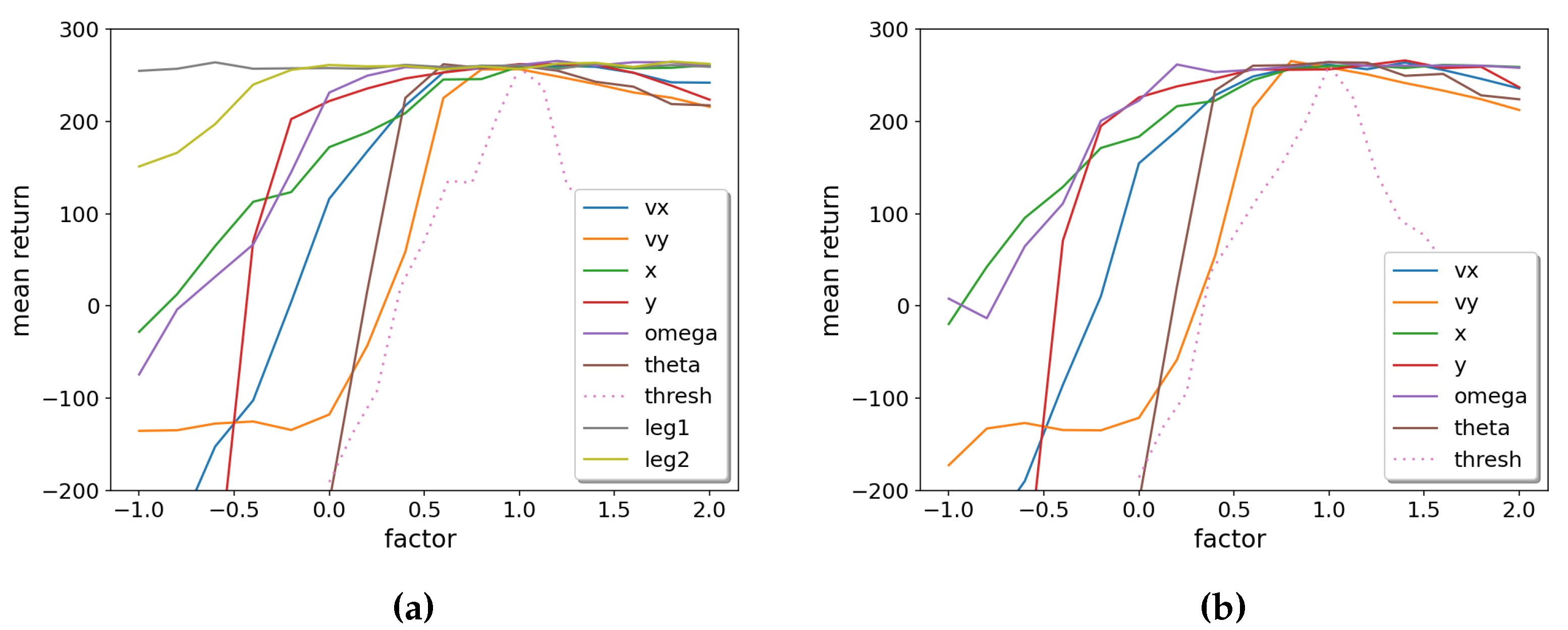

4.4.3. Sensitivity Analysis

5. Discussion

- Can we understand why ITER + OPCT is successful, while OPCT obtained by the EPS algorithm is not? We have no direct proof, but the experiments conducted suggest two insights. First, equilibrium problems like CartPole or Pendulum exhibit a severe oversampling of some states (e.g., the upright pendulum state). If, as a consequence, the initial DT does not perform well, ITER allows adding the correct oracle actions for problematic samples and thereby improving the tree in critical regions of the observation space. Second, for Pendulum we observed that the initial DT was “bad” because it learned only from near-perfect oracle episodes: It had not seen any samples outside the near-perfect episode region and hypothesized the wrong actions there. Again, ITER helps: A “bad” DT is likely to visit these outside regions, whose samples are then added to the sample set together with the correct oracle actions.

- We also investigated another variant of an iterative algorithm, in which observations were sampled while the oracle was still training (and thus probably also visiting unfavorable regions of the observation space). After the oracle had completed its training, these samples were labeled with the action predictions of the fully trained oracle and presented to DTs in an iterative algorithm similar to the one described above. However, this variant was not successful.

- A striking feature of our results is that DTs (predominantly those trained with ITER) can outperform the oracle they were trained from. This seems paradoxical at first glance, but the decision space analysis for MountainCar in Section 4.3 has shown the likely reason: In Figure 3, the decision spaces of the oracles are overly complex (similar to overfitted classification boundaries, although overfitted is not the proper term in the RL context). With their significantly reduced degrees of freedom, the DTs provide simpler, better generalizing models. Since they do not follow the “mistakes” of the overly complex DRL models, they exhibit better performance. The MountainCarContinuous results in Figure 2 fit this picture: They are best for the shallowest trees (better than the oracle) and slowly degrade toward the oracle performance as d increases, since the DT gets more degrees of freedom and mimics the (slightly) suboptimal oracle better and better. The fact that DTs can be better than the DRL model they were trained on is compatible with the reasoning of Rudin [18], who stated that transparent models are not only easier to explain but often also outperform black box models.

- We applied algorithm ITER to OPCT. Can CART also benefit from ITER? The solved-depths shown in column ITER + CART of Table 2a add up to 35, which is nearly as bad as EPS + CART (38). Only the solved-depth for Pendulum improved somewhat, from 10 to 7. We conclude that the expressive power of OPCTs is important for successfully creating shallow DTs with ITER.

- A last point worth mentioning is the intrinsic trustworthiness of DTs. They partition the observation space into a finite (and often small) set of regions, within each of which the decision is the same for all points. (This feature includes smooth extrapolation to yet-unknown regions.) DRL models, on the other hand, may have arbitrarily complex decision spaces: If such a model predicts action a for point x, it is not known which points in the neighborhood of x will have the same action.
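The conclusion that the expressive power of OPCTs matters can be illustrated with a toy example that is not from the paper: on an integer grid labeled by the diagonal rule x0 + x1 > 0, a single oblique split with weights (1, 1) is exact, whereas the best single axis-parallel split, found here by brute force over thresholds, is not. The weights are supplied by hand rather than learned as an OPCT would, and accuracy is used as the split criterion instead of CART's impurity measures.

```python
from itertools import product
from typing import List, Tuple

Point = Tuple[int, int]


def grid(half_width: int = 10) -> List[Point]:
    """Integer grid points in [-half_width, half_width]^2."""
    ticks = range(-half_width, half_width + 1)
    return list(product(ticks, ticks))


def label(p: Point) -> int:
    """Toy 'oracle' with a diagonal decision boundary x0 + x1 = 0."""
    return int(p[0] + p[1] > 0)


def accuracy(pred: List[int], points: List[Point]) -> float:
    return sum(a == label(p) for a, p in zip(pred, points)) / len(points)


def best_axis_parallel_accuracy(points: List[Point]) -> float:
    """Brute-force best single split x_dim <= t (a CART-like depth-1 tree)."""
    best = 0.0
    for dim in (0, 1):
        for t in sorted({p[dim] for p in points}):
            for left in (0, 1):
                pred = [left if p[dim] <= t else 1 - left for p in points]
                best = max(best, accuracy(pred, points))
    return best


def oblique_accuracy(points: List[Point], w=(1, 1), b=0) -> float:
    """Single oblique split w . x + b > 0 (a depth-1 oblique tree)."""
    pred = [int(w[0] * p[0] + w[1] * p[1] + b > 0) for p in points]
    return accuracy(pred, points)


if __name__ == "__main__":
    pts = grid()
    print(best_axis_parallel_accuracy(pts), oblique_accuracy(pts))
```

An axis-parallel tree can only approximate the diagonal with a staircase of many splits, so it needs far more depth than an oblique tree for the same boundary.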

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| BB | Bounding Box algorithm |
| CART | Classification and Regression Trees |
| DRL | Deep Reinforcement Learning |
| DQN | Deep Q-Network |
| DT | Decision Tree |
| EPS | Episode Samples algorithm |
| ITER | Iterative Training of Explainable RL models |
| OPCT | Oblique Predictive Clustering Tree |
| PPO | Proximal Policy Optimization |
| RL | Reinforcement Learning |
| SB3 | Stable-Baselines3 |
| TD3 | Twin Delayed Deep Deterministic Policy Gradient |

References

1. Engelhardt, R.C.; Lange, M.; Wiskott, L.; Konen, W. Sample-Based Rule Extraction for Explainable Reinforcement Learning. In Proceedings of the Machine Learning, Optimization, and Data Science; Nicosia, G., Ojha, V., La Malfa, E., La Malfa, G., Pardalos, P., et al., Eds.; Springer: Cham, 2023; Volume 13810, LNCS. pp. 330–345.

2. Adadi, A.; Berrada, M. Peeking inside the black-box: a survey on explainable artificial intelligence (XAI). IEEE Access 2018, 6, 52138–52160.

3. Molnar, C.; Casalicchio, G.; Bischl, B. Interpretable Machine Learning – A Brief History, State-of-the-Art and Challenges. In Proceedings of the ECML PKDD 2020 Workshops; Koprinska, I., Kamp, M., Appice, A., et al., Eds.; Springer: Cham, 2020; pp. 417–431.

4. Molnar, C. Interpretable Machine Learning: A guide for making black box models explainable. 2022. https://christophm.github.io/interpretable-ml-book/.

5. Puiutta, E.; Veith, E.M.S.P. Explainable Reinforcement Learning: A Survey. In Proceedings of the Machine Learning and Knowledge Extraction; Holzinger, A., Kieseberg, P., Tjoa, A.M., Weippl, E., Eds.; Springer: Cham, 2020; pp. 77–95.

6. Heuillet, A.; Couthouis, F.; Díaz-Rodríguez, N. Explainability in deep reinforcement learning. Knowledge-Based Systems 2021, 214, 106685.

7. Milani, S.; Topin, N.; Veloso, M.; Fang, F. A survey of explainable reinforcement learning. arXiv 2022.

8. Lundberg, S.M.; Erion, G.; Chen, H.; DeGrave, A.; Prutkin, J.M.; Nair, B.; Katz, R.; Himmelfarb, J.; Bansal, N.; Lee, S.I. From local explanations to global understanding with explainable AI for trees. Nature Machine Intelligence 2020, 2, 56–67.

9. Liu, G.; et al. Toward Interpretable Deep Reinforcement Learning with Linear Model U-Trees. In Proceedings of the Machine Learning and Knowledge Discovery in Databases; Berlingerio, M., et al., Eds.; Springer, 2019; Volume 11052, LNCS. pp. 414–429.

10. Mania, H.; Guy, A.; Recht, B. Simple random search of static linear policies is competitive for reinforcement learning. In Proceedings of the Advances in Neural Information Processing Systems; Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., Garnett, R., Eds.; Curran Associates, Inc., 2018; Volume 31.

11. Coppens, Y.; Efthymiadis, K.; et al. Distilling deep reinforcement learning policies in soft decision trees. In Proceedings of the IJCAI 2019 workshop on explainable artificial intelligence; 2019; pp. 1–6.

12. Frosst, N.; Hinton, G.E. Distilling a Neural Network Into a Soft Decision Tree. In Proceedings of the First International Workshop on Comprehensibility and Explanation in AI and ML; Besold, T.R., Kutz, O., Eds.; 2017; Volume 2071. CEUR Workshop Proceedings.

13. Verma, A.; Murali, V.; Singh, R.; Kohli, P.; Chaudhuri, S. Programmatically Interpretable Reinforcement Learning. In Proceedings of the 35th International Conference on Machine Learning; Dy, J., Krause, A., Eds.; PMLR. 2018; Volume 80, Proceedings of Machine Learning Research. pp. 5045–5054.

14. Zilke, J.R.; Loza Mencía, E.; Janssen, F. DeepRED – Rule Extraction from Deep Neural Networks. In Proceedings of the Discovery Science; Calders, T., Ceci, M., Malerba, D., Eds.; Springer: Cham, 2016; Volume 9956, LNCS. pp. 457–473.

15. Qiu, W.; Zhu, H. Programmatic Reinforcement Learning without Oracles. In Proceedings of the International Conference on Learning Representations; 2022.

16. Schapire, R.E. The strength of weak learnability. Machine Learning 1990, 5, 197–227.

17. Freund, Y.; Schapire, R.E. A Decision-Theoretic Generalization of On-Line Learning and an Application to Boosting. Journal of Computer and System Sciences 1997, 55, 119–139.

18. Rudin, C. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nature Machine Intelligence 2019, 1, 206–215.

19. Brockman, G.; Cheung, V.; Pettersson, L.; Schneider, J.; Schulman, J.; Tang, J.; Zaremba, W. OpenAI Gym. arXiv 2016.

20. Lovatto, A.G. CartPole Swingup - A simple, continuous-control environment for OpenAI Gym. 2021. https://github.com/0xangelo/gym-cartpole-swingup.

21. Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal Policy Optimization Algorithms. arXiv 2017.

22. Mnih, V.; Kavukcuoglu, K.; et al. Playing Atari with Deep Reinforcement Learning. arXiv 2013.

23. Fujimoto, S.; van Hoof, H.; Meger, D. Addressing Function Approximation Error in Actor-Critic Methods. In Proceedings of the 35th International Conference on Machine Learning; Dy, J., Krause, A., Eds.; PMLR. 2018; Volume 80, Proceedings of Machine Learning Research. pp. 1587–1596.

24. Raffin, A.; Hill, A.; Gleave, A.; Kanervisto, A.; Ernestus, M.; Dormann, N. Stable-Baselines3: Reliable Reinforcement Learning Implementations. Journal of Machine Learning Research 2021, 22, 1–8.

25. Breiman, L.; Friedman, J.H.; Olshen, R.A.; Stone, C.J. Classification And Regression Trees; Routledge, 1984.

26. Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine Learning in Python. Journal of Machine Learning Research 2011, 12, 2825–2830.

27. Stepišnik, T.; Kocev, D. Oblique predictive clustering trees. Knowledge-Based Systems 2021, 227, 107228.

28. Alipov, V.; Simmons-Edler, R.; Putintsev, N.; Kalinin, P.; Vetrov, D. Towards practical credit assignment for deep reinforcement learning. arXiv 2021.

29. Woergoetter, F.; Porr, B. Reinforcement learning. Scholarpedia 2008, 3, 1448.

30. Roth, A.E. (Ed.) The Shapley Value: Essays in Honor of Lloyd S. Shapley; Cambridge University Press, 1988.

| Note | Text |
|---|---|
| 1 | The experiments can be found in the GitHub repository https://github.com/MarcOedingen/Iterative-Oblique-Decision-Trees |
| 2 | The ratio between and or the total number of samples are hyperparameters that have not yet been tuned to optimal values. It could be that other values would lead to significantly higher sample efficiency. |
| 3 | Note that these numbers can be deceptive: A might be from a tree being below the threshold only by a tiny margin or a 5 might hide the fact that a depth-4 tree is only slightly below the threshold. |
| 4 | We do not use overly complex DRL architectures but keep the default parameters suggested by the SB3 methods [24]. |

| Environment | Observation space | Action space | Reward threshold |
|---|---|---|---|
| Acrobot-v1 | First angle (cos), First angle (sin), Second angle (cos), Second angle (sin), Angular velocity (first), Angular velocity (second) | Apply torque {-1 (0), 0 (1), 1 (2)} | |
| CartPole-v1 | Position cart, Velocity cart, Angle pole, Velocity pole | Accelerate {left (0), right (1)} | 475 |
| CartPole-SwingUp-v1 | Position cart, Velocity cart, Angle pole (cos), Angle pole (sin), Angular velocity pole | Accelerate {left (0), not (1), right (2)} | undef. |
| LunarLander-v2 | Position x, Position y, Velocity x, Velocity y, Angle, Angular velocity, Contact left leg, Contact right leg | Fire {not (0), left engine (1), main engine (2), right engine (3)} | 200 |
| MountainCar-v0 | Position, Velocity | Accelerate {left (0), not (1), right (2)} | |
| MountainCarContinuous-v0 | Same as MountainCar-v0 | Accelerate | 90 |
| Pendulum-v1 | Angle (cos), Angle (sin), Angular velocity | Apply torque | undef. |

| Environment | dim D | Model | Oracle (number of parameters) | ITER (number of parameters) | depth d |
|---|---|---|---|---|---|
| Acrobot-v1 | 6 | DQN | 136,710 | 9 | 1 |
| CartPole-v1 | 4 | PPO | 9155 | 7 | 1 |
| CartPole-SwingUp-v1 | 5 | DQN | 534,534 | 890 | 7 |
| LunarLander-v2 | 8 | PPO | 9797 | 31 | 2 |
| MountainCar-v0 | 2 | DQN | 134,656 | 5 | 1 |
| MountainCarContinuous-v0 | 2 | TD3 | 732,406 | 5 | 1 |
| Pendulum-v1 | 3 | TD3 | 734,806 | 156 | 5 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).