Submitted: 07 April 2023

Posted: 10 April 2023


Abstract

Keywords:

1. Introduction

1.1. Sense of touch and vision-based tactile sensing

1.2. Neuromorphic vision-based tactile sensing

1.3. Challenges with event-based vision and existing solutions

1.4. Contributions

- We introduce TactiGraph, a graph neural network built from SplineConv layers that processes data from a neuromorphic vision-based tactile sensor. TactiGraph fully accounts for the spatially sparse and temporally dense nature of event streams.

- We deploy TactiGraph to solve the problem of contact angle prediction using the neuromorphic tactile sensor. We obtain an error of .

- TactiGraph maintains a high level of accuracy, with only an error of , even when the tactile sensor is used without an illumination source. This demonstrates that it is possible to reduce the cost of operating a vision-based tactile sensor by eliminating the need for LEDs, while still achieving reliable results.
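To make the idea of treating an event stream as a graph concrete, the following is a minimal sketch in which every event becomes a node and is linked to its nearest neighbours in space and time. The k-nearest-neighbour rule, the `time_scale` factor, and the helper name `build_event_graph` are assumptions chosen for illustration; they are not taken from the graph construction described in Section 2.3.

```python
from math import dist
from typing import List, Tuple

# One event: (x pixel, y pixel, timestamp in seconds, polarity 0/1).
Event = Tuple[float, float, float, int]


def build_event_graph(
    events: List[Event], k: int = 8, time_scale: float = 1000.0
) -> List[Tuple[int, int]]:
    """Return directed edges (source, target) that link each event to its
    k nearest neighbours in (x, y, time_scale * t) space.

    Hypothetical helper: the k-NN rule and time_scale are illustrative
    assumptions, not the construction used in the paper.
    """
    # Rescale time so temporal and spatial distances are comparable.
    points = [(x, y, t * time_scale) for x, y, t, _ in events]
    edges: List[Tuple[int, int]] = []
    for i, p in enumerate(points):
        # Brute-force neighbour search: O(n^2), acceptable for a sketch.
        neighbours = sorted(
            (dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        # Messages flow from each neighbour j into node i.
        edges.extend((j, i) for _, j in neighbours[:k])
    return edges


# Three events from one small cluster plus one far-away event.
events: List[Event] = [
    (10.0, 10.0, 0.000, 1),
    (11.0, 10.0, 0.001, 1),
    (10.0, 11.0, 0.002, 0),
    (200.0, 150.0, 0.001, 1),
]
edges = build_event_graph(events, k=2)
print(edges)
```

Only pixels that fire contribute nodes, so the graph stays small where the scene is static, while the timestamp coordinate keeps the fine temporal ordering that a fixed-rate frame would discard. A graph library would typically also attach per-edge relative offsets as edge attributes for spline-based convolutions; that step is omitted here.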

1.5. Outline

2. Materials and Methods

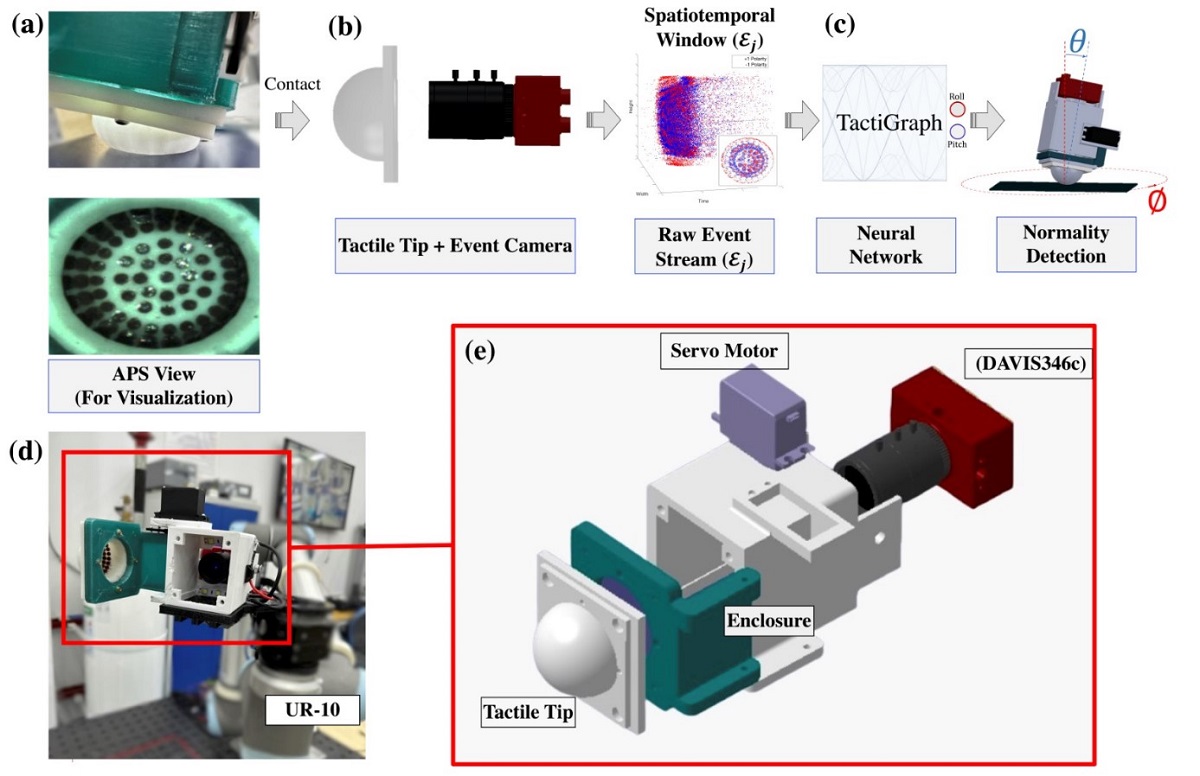

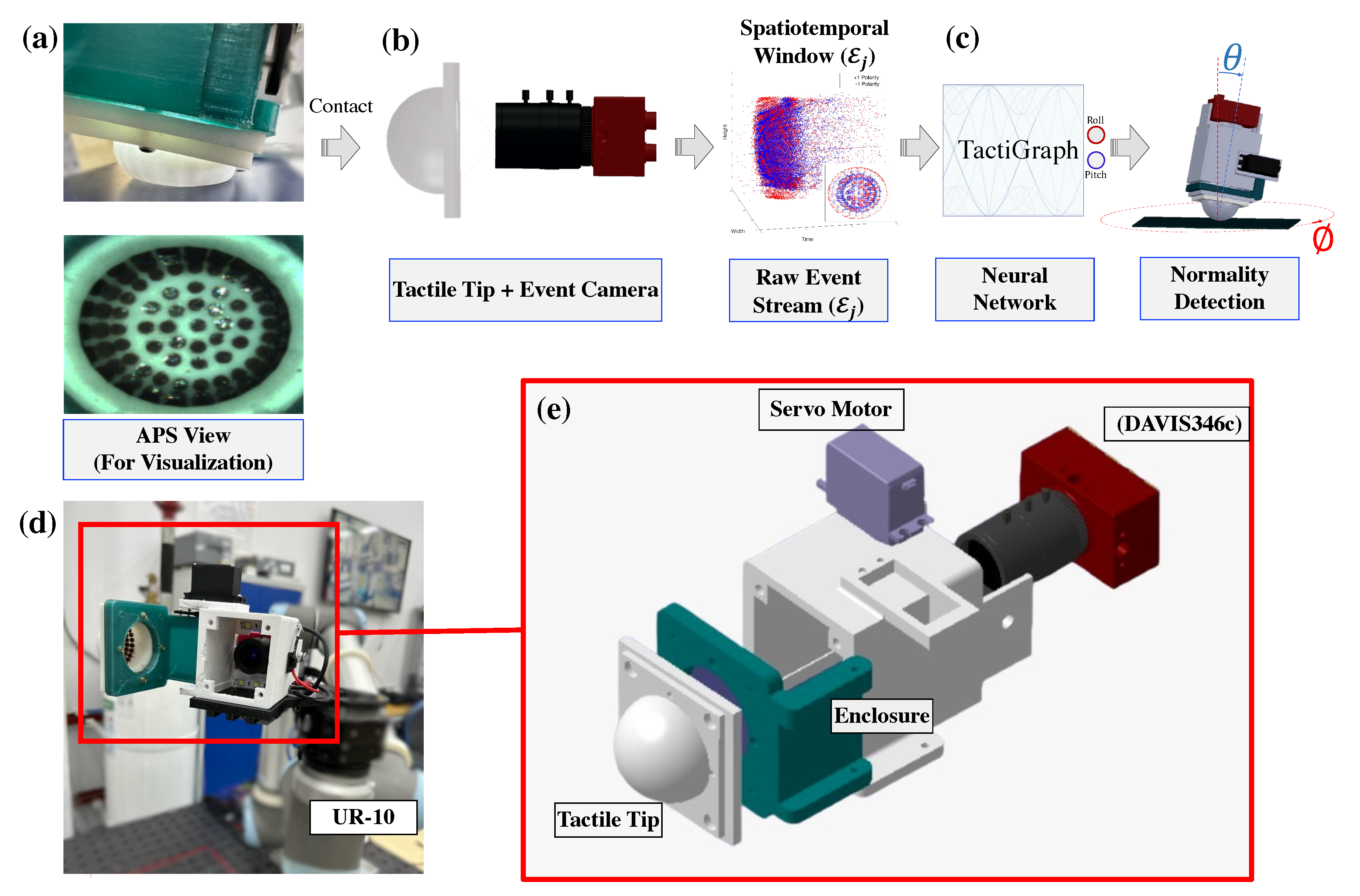

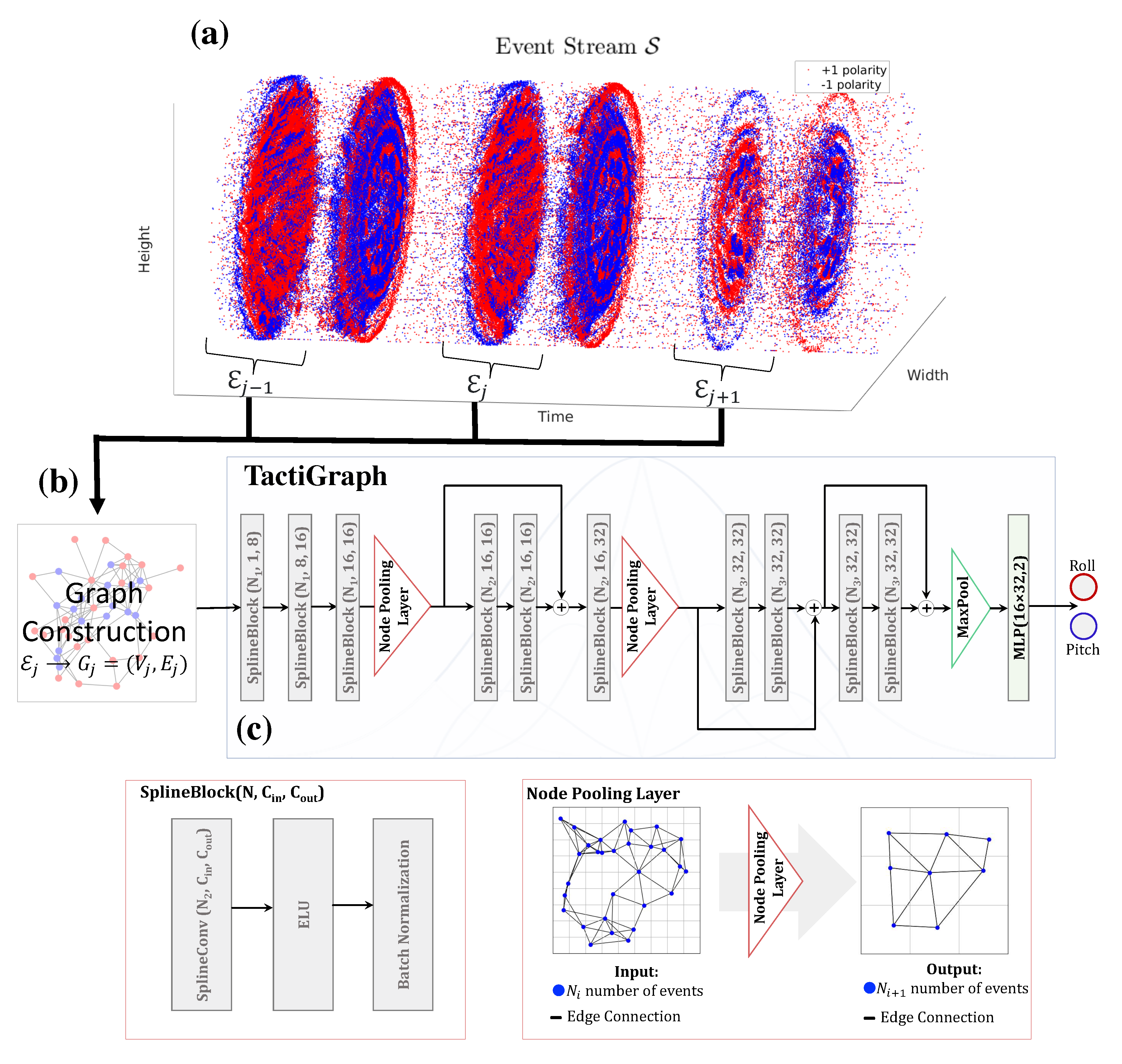

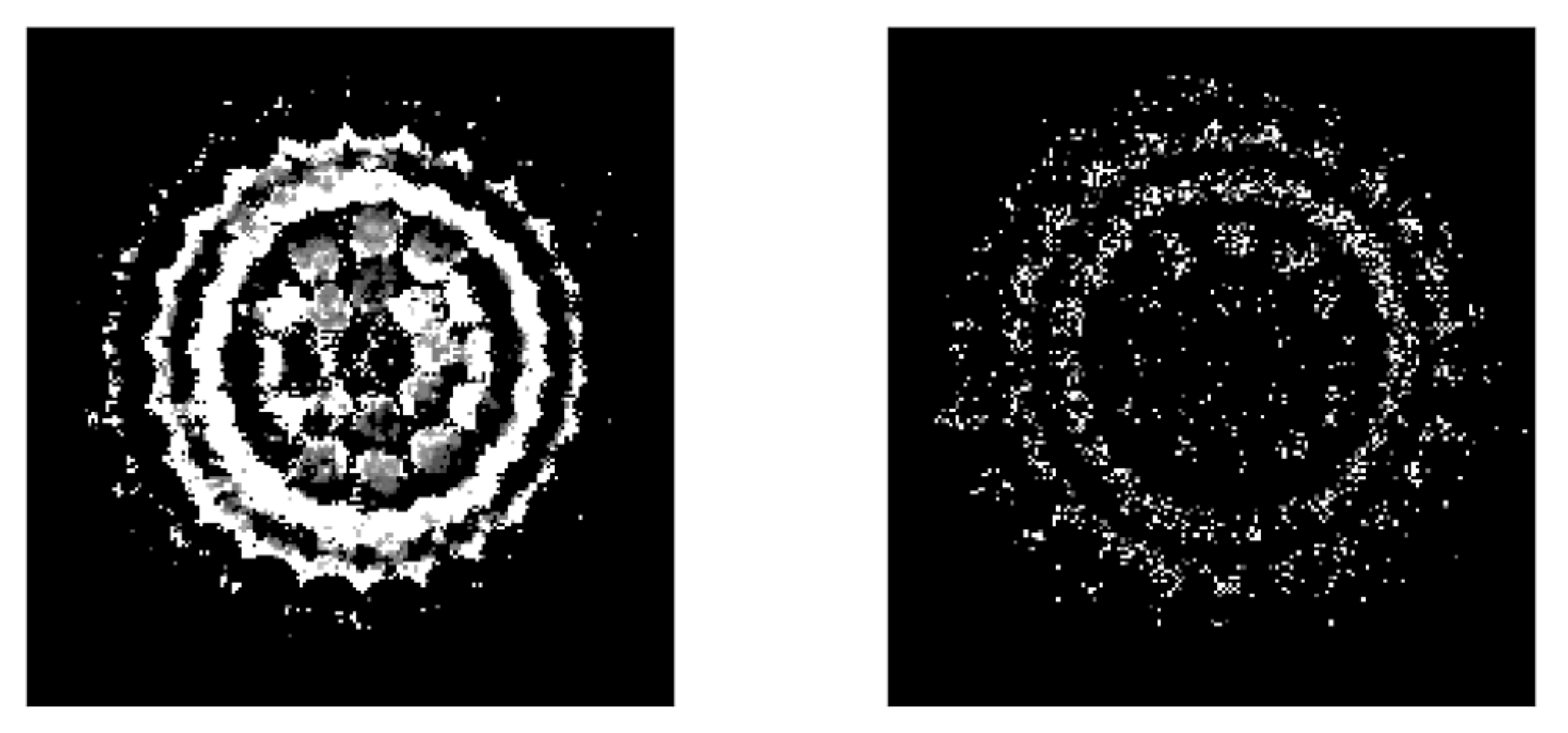

2.1. Data collection

2.2. Preprocessing the event stream

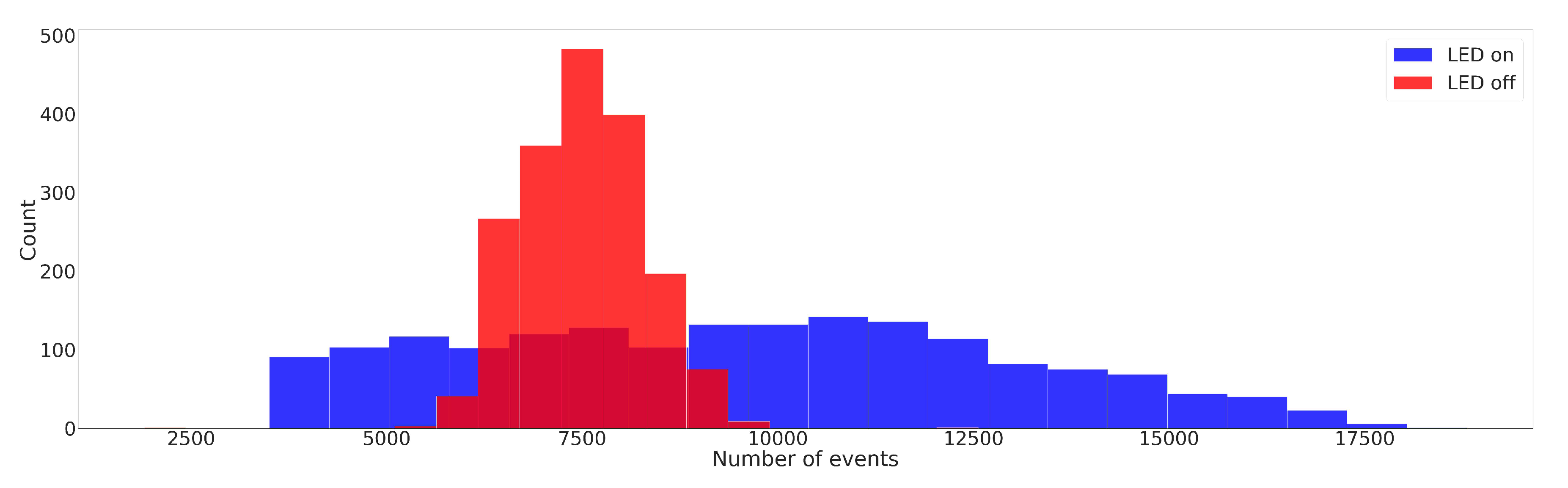

2.3. Graph construction

2.4. TactiGraph

2.5. Training setup

3. Results and Discussion

3.1. Evaluation of TactiGraph and the effect of jittering

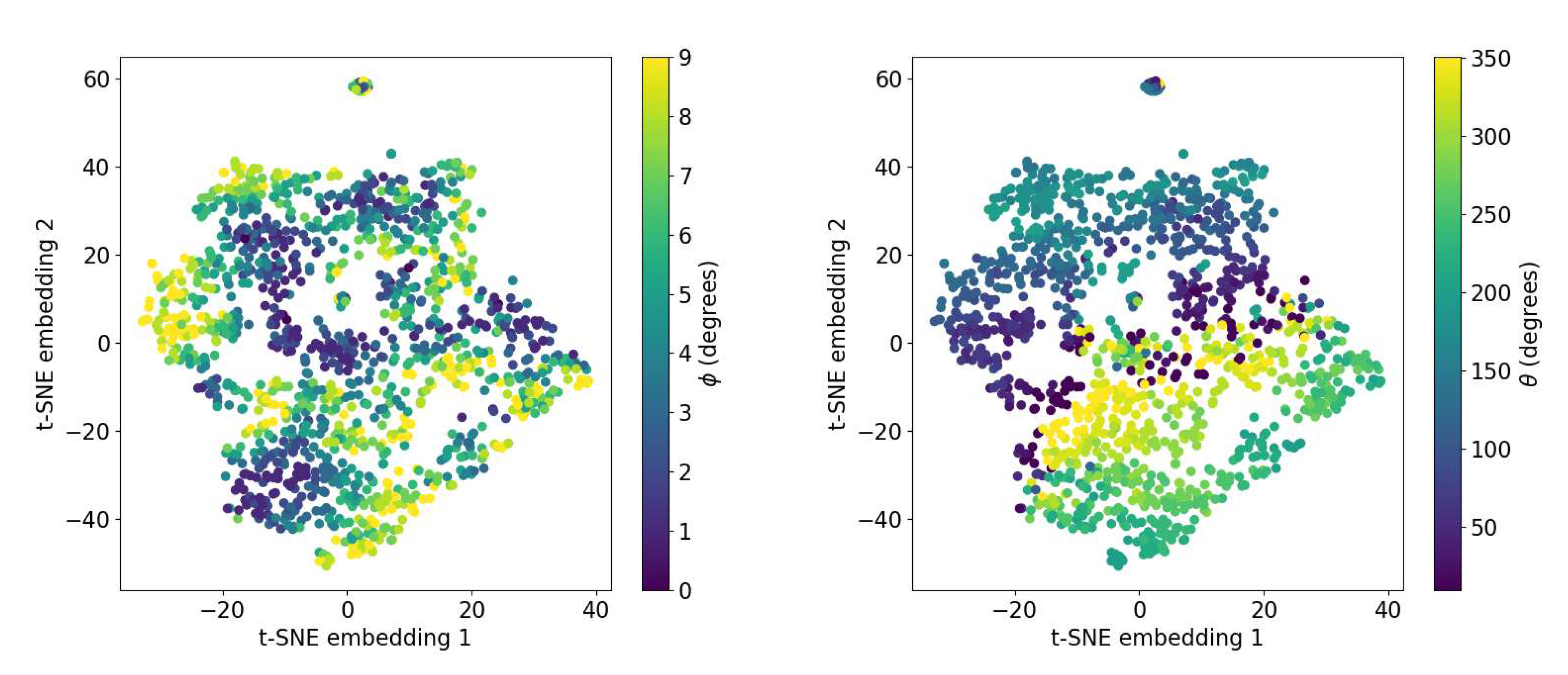

3.2. Visualizing TactiGraph’s embedding space

3.3. Benchmark results

3.4. Runtime analysis

3.5. Future work

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| VBTS | Vision-based tactile sensing |
| N-VBTS | Neuromorphic vision-based tactile sensing |
| LED | Light-emitting diode |
| GNN | Graph neural network |
| CNN | Convolutional neural network |
| SNN | Spiking neural network |
| MAE | Mean absolute error |
| kNN | k-nearest neighbors |
| t-SNE | t-distributed stochastic neighbor embedding |

References

- Huang, X.; Muthusamy, R.; Hassan, E.; Niu, Z.; Seneviratne, L.; Gan, D.; Zweiri, Y. Neuromorphic Vision Based Contact-Level Classification in Robotic Grasping Applications. Sensors 2020, 20.

- James, J.W.; Pestell, N.; Lepora, N.F. Slip Detection With a Biomimetic Tactile Sensor. IEEE Robotics and Automation Letters 2018, 3, 3340–3346.

- Dong, S.; Jha, D.; Romeres, D.; Kim, S.; Nikovski, D.; Rodriguez, A. Tactile-RL for Insertion: Generalization to Objects of Unknown Geometry. 2021 IEEE International Conference on Robotics and Automation (ICRA), 2021.

- Kim, S.; Rodriguez, A. Active Extrinsic Contact Sensing: Application to General Peg-in-Hole Insertion. 2022 International Conference on Robotics and Automation (ICRA), 2022, pp. 10241–10247.

- Xia, Z.; Deng, Z.; Fang, B.; Yang, Y.; Sun, F. A review on sensory perception for dexterous robotic manipulation. International Journal of Advanced Robotic Systems 2022, 19, 17298806221095974.

- Li, Q.; Kroemer, O.; Su, Z.; Veiga, F.F.; Kaboli, M.; Ritter, H.J. A Review of Tactile Information: Perception and Action Through Touch. IEEE Transactions on Robotics 2020, 36, 1619–1634.

- Dahiya, R.S.; Valle, M. Robotic Tactile Sensing; Springer Netherlands, 2013.

- Romeo, R.A.; Zollo, L. Methods and Sensors for Slip Detection in Robotics: A Survey. IEEE Access 2020, 8, 73027–73050.

- Shah, U.H.; Muthusamy, R.; Gan, D.; Zweiri, Y.; Seneviratne, L. On the Design and Development of Vision-based Tactile Sensors. Journal of Intelligent & Robotic Systems 2021, 102, 82.

- Zaid, I.M.; Halwani, M.; Ayyad, A.; Imam, A.; Almaskari, F.; Hassanin, H.; Zweiri, Y. Elastomer-Based Visuotactile Sensor for Normality of Robotic Manufacturing Systems. Polymers 2022, 14.

- Lepora, N.F. Soft Biomimetic Optical Tactile Sensing With the TacTip: A Review. IEEE Sensors Journal 2021, 21, 21131–21143.

- Sferrazza, C.; D’Andrea, R. Design, Motivation and Evaluation of a Full-Resolution Optical Tactile Sensor. Sensors 2019, 19.

- Lambeta, M.; Chou, P.W.; Tian, S.; Yang, B.; Maloon, B.; Most, V.R.; Stroud, D.; Santos, R.; Byagowi, A.; Kammerer, G.; Jayaraman, D.; Calandra, R. DIGIT: A Novel Design for a Low-Cost Compact High-Resolution Tactile Sensor with Application to In-Hand Manipulation. IEEE Robotics and Automation Letters (RA-L) 2020, 5, 3838–3845.

- Yuan, W.; Dong, S.; Adelson, E.H. GelSight: High-Resolution Robot Tactile Sensors for Estimating Geometry and Force. Sensors 2017, 17.

- Wang, S.; She, Y.; Romero, B.; Adelson, E.H. GelSight Wedge: Measuring High-Resolution 3D Contact Geometry with a Compact Robot Finger. 2021 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2021.

- Ward-Cherrier, B.; Pestell, N.; Lepora, N.F. NeuroTac: A Neuromorphic Optical Tactile Sensor applied to Texture Recognition. 2020 IEEE International Conference on Robotics and Automation (ICRA), 2020, pp. 2654–2660.

- Bauza, M.; Valls, E.; Lim, B.; Sechopoulos, T.; Rodriguez, A. Tactile Object Pose Estimation from the First Touch with Geometric Contact Rendering, 2020.

- Li, M.; Li, T.; Jiang, Y. Marker Displacement Method Used in Vision-Based Tactile Sensors—From 2D to 3D: A Review, 2023.

- Lepora, N.F.; Lloyd, J. Optimal Deep Learning for Robot Touch: Training Accurate Pose Models of 3D Surfaces and Edges. IEEE Robotics and Automation Magazine 2020, 27, 66–77.

- Faris, O.; Muthusamy, R.; Renda, F.; Hussain, I.; Gan, D.; Seneviratne, L.; Zweiri, Y. Proprioception and Exteroception of a Soft Robotic Finger Using Neuromorphic Vision-Based Sensing. Soft Robotics.

- Muthusamy, R.; Huang, X.; Zweiri, Y.; Seneviratne, L.; Gan, D. Neuromorphic Event-Based Slip Detection and Suppression in Robotic Grasping and Manipulation. IEEE Access 2020, 8, 153364–153384.

- Faris, O.; Alyammahi, H.; Suthar, B.; Muthusamy, R.; Shah, U.H.; Hussain, I.; Gan, D.; Seneviratne, L.; Zweiri, Y. Design and experimental evaluation of a sensorized parallel gripper with optical mirroring mechanism. Mechatronics 2023, 90, 102955.

- Quan, S.; Liang, X.; Zhu, H.; Hirano, M.; Yamakawa, Y. HiVTac: A High-Speed Vision-Based Tactile Sensor for Precise and Real-Time Force Reconstruction with Fewer Markers. Sensors 2022, 22.

- Li, R.; Adelson, E.H. Sensing and Recognizing Surface Textures Using a GelSight Sensor. 2013 IEEE Conference on Computer Vision and Pattern Recognition, 2013, pp. 1241–1247.

- Pestell, N.; Lepora, N.F. Artificial SA-I, RA-I and RA-II/vibrotactile afferents for tactile sensing of texture. Journal of The Royal Society Interface 2022, 19.

- Li, R.; Platt, R.; Yuan, W.; ten Pas, A.; Roscup, N.; Srinivasan, M.A.; Adelson, E. Localization and manipulation of small parts using GelSight tactile sensing. 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2014, pp. 3988–3993.

- She, Y.; Wang, S.; Dong, S.; Sunil, N.; Rodriguez, A.; Adelson, E. Cable manipulation with a tactile-reactive gripper. The International Journal of Robotics Research 2021, 40, 1385–1401.

- Halwani, M.; Ayyad, A.; AbuAssi, L.; Abdulrahman, Y.; Almaskari, F.; Hassanin, H.; Abusafieh, A.; Zweiri, Y. A Novel Vision-based Multi-functional Sensor for Normality and Position Measurements in Precise Robotic Manufacturing. SSRN Electronic Journal 2023.

- Santos, K.R.d.S.; de Carvalho, G.M.; Tricarico, R.T.; Ferreira, L.F.L.R.; Villani, E.; Sutério, R. Evaluation of perpendicularity methods for a robotic end effector from aircraft industry. 2018 13th IEEE International Conference on Industry Applications (INDUSCON), 2018, pp. 1373–1380.

- Zhang, Y.; Ding, H.; Zhao, C.; Zhou, Y.; Cao, G. Detecting the normal-direction in automated aircraft manufacturing based on adaptive alignment. Science Progress 2020, 103, 36850420981212.

- Yu, L.; Zhang, Y.; Bi, Q.; Wang, Y. Research on surface normal measurement and adjustment in aircraft assembly. Precision Engineering 2017, 50, 482–493.

- Lin, M.; Yuan, P.; Tan, H.; Liu, Y.; Zhu, Q.; Li, Y. Improvements of robot positioning accuracy and drilling perpendicularity for autonomous drilling robot system. 2015 IEEE International Conference on Robotics and Biomimetics (ROBIO), 2015, pp. 1483–1488.

- Tian, W.; Zhou, W.; Zhou, W.; Liao, W.; Zeng, Y. Auto-normalization algorithm for robotic precision drilling system in aircraft component assembly. Chinese Journal of Aeronautics 2013, 26, 495–500.

- Psomopoulou, E.; Pestell, N.; Papadopoulos, F.; Lloyd, J.; Doulgeri, Z.; Lepora, N.F. A Robust Controller for Stable 3D Pinching Using Tactile Sensing. IEEE Robotics and Automation Letters 2021, 6, 8150–8157.

- Fan, W.; Yang, M.; Xing, Y.; Lepora, N.F.; Zhang, D. Tac-VGNN: A Voronoi Graph Neural Network for Pose-Based Tactile Servoing, 2023.

- Rigi, A.; Baghaei Naeini, F.; Makris, D.; Zweiri, Y. A Novel Event-Based Incipient Slip Detection Using Dynamic Active-Pixel Vision Sensor (DAVIS). Sensors 2018, 18.

- Baghaei Naeini, F.; AlAli, A.M.; Al-Husari, R.; Rigi, A.; Al-Sharman, M.K.; Makris, D.; Zweiri, Y. A Novel Dynamic-Vision-Based Approach for Tactile Sensing Applications. IEEE Transactions on Instrumentation and Measurement 2020, 69, 1881–1893.

- Naeini, F.B.; Kachole, S.; Muthusamy, R.; Makris, D.; Zweiri, Y. Event Augmentation for Contact Force Measurements. IEEE Access 2022, 10, 123651–123660.

- Macdonald, F.L.A.; Lepora, N.F.; Conradt, J.; Ward-Cherrier, B. Neuromorphic Tactile Edge Orientation Classification in an Unsupervised Spiking Neural Network. Sensors 2022, 22.

- Mead, C.A.; Mahowald, M. A silicon model of early visual processing. Neural Networks 1988, 1, 91–97.

- Hanover, D.; Loquercio, A.; Bauersfeld, L.; Romero, A.; Penicka, R.; Song, Y.; Cioffi, G.; Kaufmann, E.; Scaramuzza, D. Autonomous Drone Racing: A Survey, 2023.

- Ralph, N.O.; Marcireau, A.; Afshar, S.; Tothill, N.; van Schaik, A.; Cohen, G. Astrometric Calibration and Source Characterisation of the Latest Generation Neuromorphic Event-based Cameras for Space Imaging, 2022.

- Salah, M.; Chehadah, M.; Humais, M.; Wahbah, M.; Ayyad, A.; Azzam, R.; Seneviratne, L.; Zweiri, Y. A Neuromorphic Vision-Based Measurement for Robust Relative Localization in Future Space Exploration Missions. IEEE Transactions on Instrumentation and Measurement 2022.

- Ayyad, A.; Halwani, M.; Swart, D.; Muthusamy, R.; Almaskari, F.; Zweiri, Y. Neuromorphic vision based control for the precise positioning of robotic drilling systems. Robotics and Computer-Integrated Manufacturing 2023, 79, 102419.

- Muthusamy, R.; Ayyad, A.; Halwani, M.; Swart, D.; Gan, D.; Seneviratne, L.; Zweiri, Y. Neuromorphic Eye-in-Hand Visual Servoing. IEEE Access 2021, 9, 55853–55870.

- Hay, O.A.; Chehadeh, M.; Ayyad, A.; Wahbah, M.; Humais, M.A.; Boiko, I.; Seneviratne, L.; Zweiri, Y. Noise-Tolerant Identification and Tuning Approach Using Deep Neural Networks for Visual Servoing Applications. IEEE Transactions on Robotics 2023, pp. 1–13.

- Rebecq, H.; Ranftl, R.; Koltun, V.; Scaramuzza, D. Events-to-Video: Bringing Modern Computer Vision to Event Cameras. IEEE Conf. Comput. Vis. Pattern Recog. (CVPR) 2019.

- Gallego, G.; Delbrück, T.; Orchard, G.; Bartolozzi, C.; Taba, B.; Censi, A.; Leutenegger, S.; Davison, A.J.; Conradt, J.; Daniilidis, K.; Scaramuzza, D. Event-Based Vision: A Survey. IEEE Transactions on Pattern Analysis and Machine Intelligence 2022, 44, 154–180.

- Bing, Z.; Baumann, I.; Jiang, Z.; Huang, K.; Cai, C.; Knoll, A. Supervised Learning in SNN via Reward-Modulated Spike-Timing-Dependent Plasticity for a Target Reaching Vehicle. Frontiers in Neurorobotics 2019, 13.

- Schuman, C.D.; Kulkarni, S.R.; Parsa, M.; Mitchell, J.P.; Date, P.; Kay, B. Opportunities for neuromorphic computing algorithms and applications. Nature Computational Science 2022, 2, 10–19.

- Gehrig, D.; Loquercio, A.; Derpanis, K.G.; Scaramuzza, D. End-to-End Learning of Representations for Asynchronous Event-Based Data. Int. Conf. Comput. Vis. (ICCV), 2019.

- Gehrig, M.; Scaramuzza, D. Recurrent Vision Transformers for Object Detection with Event Cameras, 2022.

- Gehrig, M.; Millhäusler, M.; Gehrig, D.; Scaramuzza, D. E-RAFT: Dense Optical Flow from Event Cameras. International Conference on 3D Vision (3DV), 2021.

- Barchid, S.; Mennesson, J.; Djéraba, C. Bina-Rep Event Frames: A Simple and Effective Representation for Event-Based Cameras. 2022 IEEE International Conference on Image Processing (ICIP), 2022, pp. 3998–4002.

- Bi, Y.; Chadha, A.; Abbas, A.; Bourtsoulatze, E.; Andreopoulos, Y. Graph-based Object Classification for Neuromorphic Vision Sensing. 2019 IEEE International Conference on Computer Vision (ICCV). IEEE, 2019.

- Li, Y.; Zhou, H.; Yang, B.; Zhang, Y.; Cui, Z.; Bao, H.; Zhang, G. Graph-based Asynchronous Event Processing for Rapid Object Recognition. 2021 IEEE/CVF International Conference on Computer Vision (ICCV), 2021, pp. 914–923.

- Fey, M.; Lenssen, J.E.; Weichert, F.; Müller, H. SplineCNN: Fast Geometric Deep Learning with Continuous B-Spline Kernels. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018.

- Deng, Y.; Chen, H.; Xie, B.; Liu, H.; Li, Y. A Dynamic Graph CNN with Cross-Representation Distillation for Event-Based Recognition, 2023.

- Alkendi, Y.; Azzam, R.; Ayyad, A.; Javed, S.; Seneviratne, L.; Zweiri, Y. Neuromorphic Camera Denoising Using Graph Neural Network-Driven Transformers. IEEE Transactions on Neural Networks and Learning Systems 2022, pp. 1–15.

- Bronstein, M.M.; Bruna, J.; Cohen, T.; Veličković, P. Geometric Deep Learning: Grids, Groups, Graphs, Geodesics, and Gauges, 2021.

- Schaefer, S.; Gehrig, D.; Scaramuzza, D. AEGNN: Asynchronous Event-based Graph Neural Networks. IEEE Conference on Computer Vision and Pattern Recognition, 2022.

- You, J.; Du, T.; Leskovec, J. ROLAND: graph learning framework for dynamic graphs. Proceedings of the 28th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2022, pp. 2358–2366.

- Gehrig, D.; Scaramuzza, D. Pushing the Limits of Asynchronous Graph-based Object Detection with Event Cameras, 2022.

- Gong, L.; Cheng, Q. Exploiting Edge Features for Graph Neural Networks. 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2019, pp. 9203–9211.

- Wang, K.; Han, S.C.; Long, S.; Poon, J. ME-GCN: Multi-dimensional Edge-Embedded Graph Convolutional Networks for Semi-supervised Text Classification, 2022.

- iniVation. DAVIS 346. https://inivation.com/wp-content/uploads/2019/08/DAVIS346.pdf.

- Universal Robots. USER MANUAL - UR10 CB-SERIES - SW3.15 - ENGLISH INTERNATIONAL (EN). https://www.universal-robots.com/download/manuals-cb-series/user/ur10/315/user-manual-ur10-cb-series-sw315-english-international-en/.

- Guo, S.; Delbruck, T. Low Cost and Latency Event Camera Background Activity Denoising. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45, 785–795.

- Feng, Y.; Lv, H.; Liu, H.; Zhang, Y.; Xiao, Y.; Han, C. Event Density Based Denoising Method for Dynamic Vision Sensor. Applied Sciences 2020, 10.

- Delbrück, T. Frame-free dynamic digital vision. Proceedings of International Symposium on Secure-Life Electronics, Advanced Electronics for Quality Life and Society, Univ. of Tokyo, Mar. 6-7, 2008; Hotate, K., Ed.; Tokyo, 2008.

- Fey, M.; Lenssen, J.E. Fast Graph Representation Learning with PyTorch Geometric. ICLR Workshop on Representation Learning on Graphs and Manifolds, 2019.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

- Paszke, A.; Gross, S.; Massa, F.; Lerer, A.; Bradbury, J.; Chanan, G.; Killeen, T.; Lin, Z.; Gimelshein, N.; Antiga, L.; Desmaison, A.; Köpf, A.; Yang, E.Z.; DeVito, Z.; Raison, M.; Tejani, A.; Chilamkurthy, S.; Steiner, B.; Fang, L.; Bai, J.; Chintala, S. PyTorch: An Imperative Style, High-Performance Deep Learning Library. CoRR 2019, abs/1912.01703.

- van der Maaten, L.; Hinton, G. Visualizing Data using t-SNE. Journal of Machine Learning Research 2008, 9, 2579–2605.

| Hyperparameter | Hyperparameter range | Optimal value |
|---|---|---|
| Number of SplineConv layers | {6, 7, 8, 9, 10, 11} | 10 |
| Number of channels in layers | {8, 16, 32, 64, 128} | (8, 8, 16, 16, 16, 16, 32, 32, 32, 32) |
| Number of pooling layers | {1, 2, 3} | 3 |
| Number of skip connections | {0, 1, 2, 3, 4} | 3 |

| Dataset used | MAE Before jittering | MAE After jittering |

| 0.63 | ||

| 0.71 |

| Internal illumination | TactiGraph (N-VBTS) | MacDonald et al. [39] (N-VBTS) | Halwani et al. [28] (VBTS) | Tac-VGNN [35] (VBTS) |
|---|---|---|---|---|
| With illumination | | | | |
| Without illumination | | - | - | - |

† Contact is not made against a flat surface.

‡ For TactiGraph results, the datasets used for different illumination conditions are and as described in Section 2.

| Dataset used | TactiGraph | CNN on event-frame |
|---|---|---|
| | 0.63 | 0.79 |
| | 0.71 | |

| Hyperparameter | Hyperparameter range | Optimal value |
|---|---|---|
| Number of convolutional layers | {3, 4, 5, 6, 7} | 6 |
| Number of channels in layers | {16, 32, 64, 128, 256} | (32, 32, 32, 128, 128, 256) |
| Number of dense layers | {2, 3, 4, 5} | 4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).