Submitted: 05 April 2023

Posted: 06 April 2023


Abstract

Keywords:

1. Introduction

2. Related Work

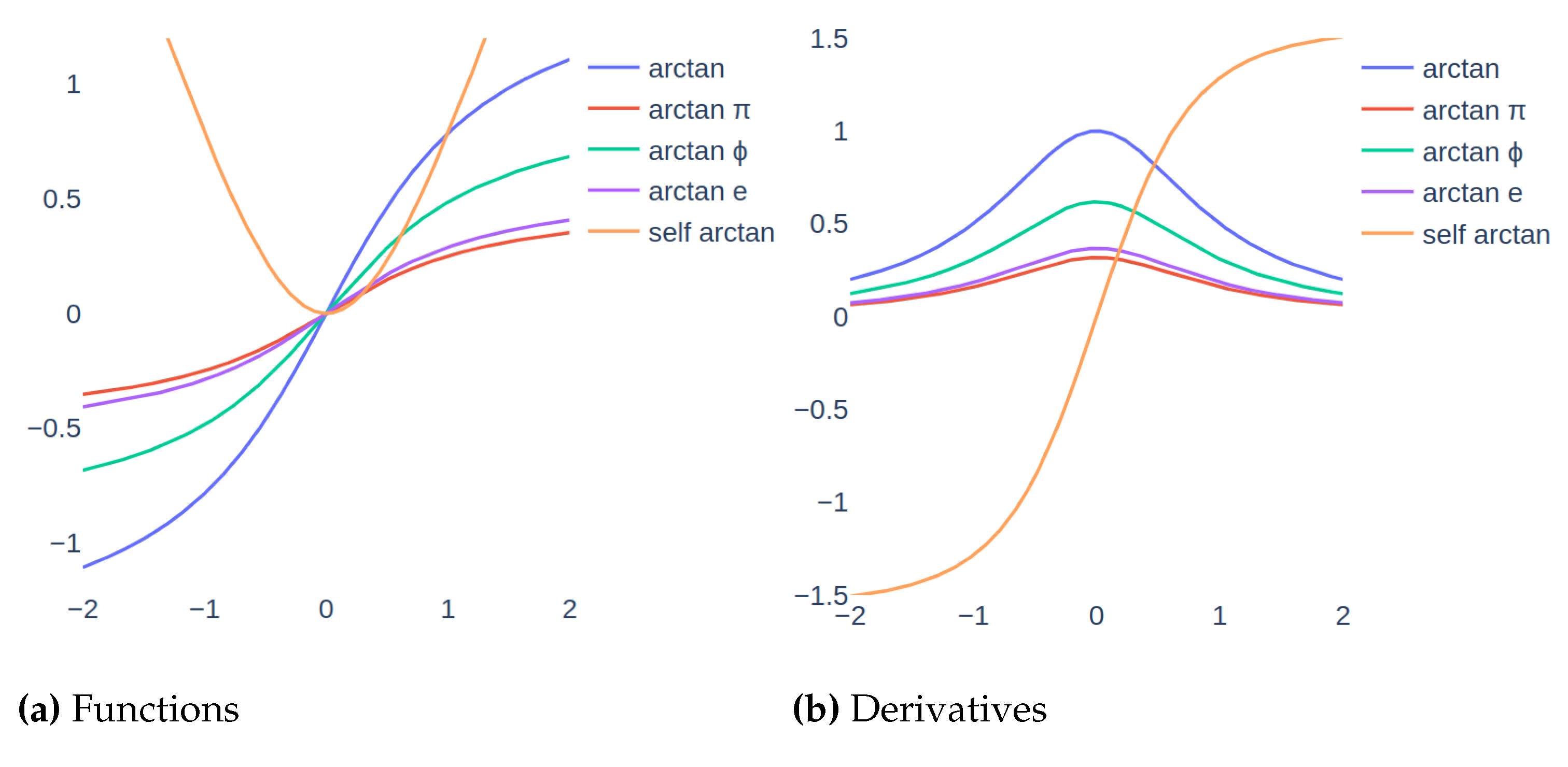

3. Activation Functions

3.1. Rectified Linear Unit (ReLU)

3.2. Leaky ReLU

3.3. Sigmoid

3.4. Hyperbolic Tangent (tanh)

3.5. Swish

3.6. Arctan

4. Proposed Activation Functions

4.1. Arctan

4.2. Arctan Golden Ratio

4.3. Arctan Euler

4.4. Self Arctan

| Function name | Function | Derivative | Range |
|---|---|---|---|
| ReLU | | | |
| leaky ReLU | | | |
| sigmoid | | | |
| tanh | | | |
| swish | | | |
| arctan | | | |
| arctan | | | |
| arctan | | | |
| arctan e | | | |
| self arctan | | | |
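The function, derivative, and range cells of the table did not survive extraction. As a hedged reference, the sketch below writes out the textbook definitions of the six baseline functions from Section 3 together with their derivatives. The leaky ReLU slope of 0.01 is an assumption (the source does not state one), swish is taken with a fixed scale of 1, and the arctan variants are omitted because their definitions are not recoverable from the text.

```python
import math

LEAKY_SLOPE = 0.01  # assumed negative-side slope; not stated in the source


def relu(x):
    return max(0.0, x)                      # range [0, inf)


def relu_grad(x):
    return 1.0 if x > 0 else 0.0


def leaky_relu(x, slope=LEAKY_SLOPE):
    return x if x > 0 else slope * x        # range (-inf, inf)


def leaky_relu_grad(x, slope=LEAKY_SLOPE):
    return 1.0 if x > 0 else slope


def sigmoid(x):
    # Split on the sign of x so exp() never overflows.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))   # range (0, 1)
    z = math.exp(x)
    return z / (1.0 + z)


def sigmoid_grad(x):
    s = sigmoid(x)
    return s * (1.0 - s)


def tanh_grad(x):
    return 1.0 - math.tanh(x) ** 2          # tanh has range (-1, 1)


def swish(x):
    return x * sigmoid(x)                   # bounded below near -0.278


def swish_grad(x):
    s = sigmoid(x)
    return s + x * s * (1.0 - s)


def arctan_grad(x):
    return 1.0 / (1.0 + x * x)              # arctan has range (-pi/2, pi/2)


# Pairs of (function, derivative) keyed by the names used in the table.
ACTIVATIONS = {
    "ReLU": (relu, relu_grad),
    "leaky ReLU": (leaky_relu, leaky_relu_grad),
    "sigmoid": (sigmoid, sigmoid_grad),
    "tanh": (math.tanh, tanh_grad),
    "swish": (swish, swish_grad),
    "arctan": (math.atan, arctan_grad),
}
```

Arctan is bounded like sigmoid and tanh, but its derivative decays polynomially as 1/(1 + x²) rather than exponentially, so gradients for large inputs shrink more slowly.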

5. Experiment

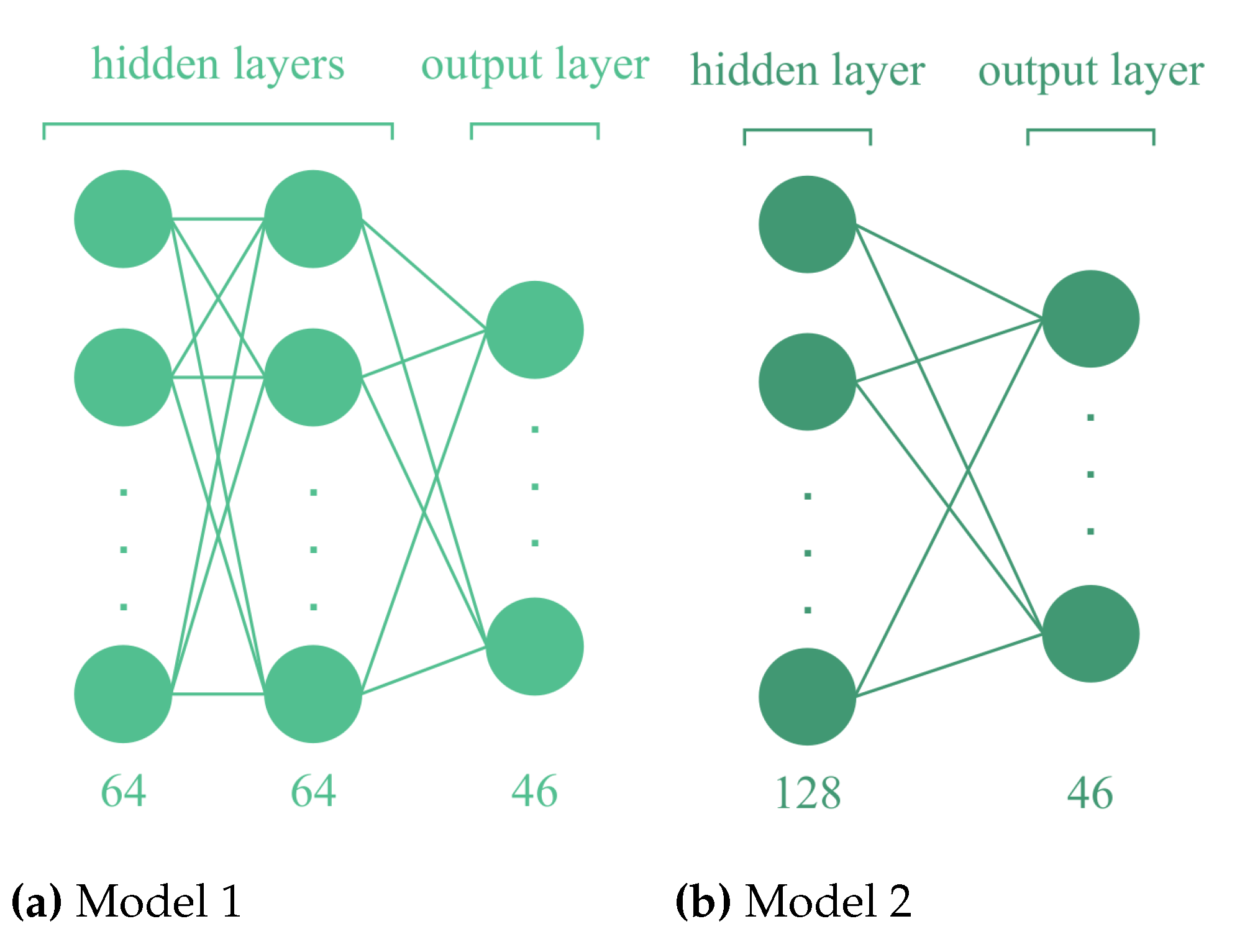

5.1. Topic Classification

5.1.1. Data Preprocessing

5.1.2. Models

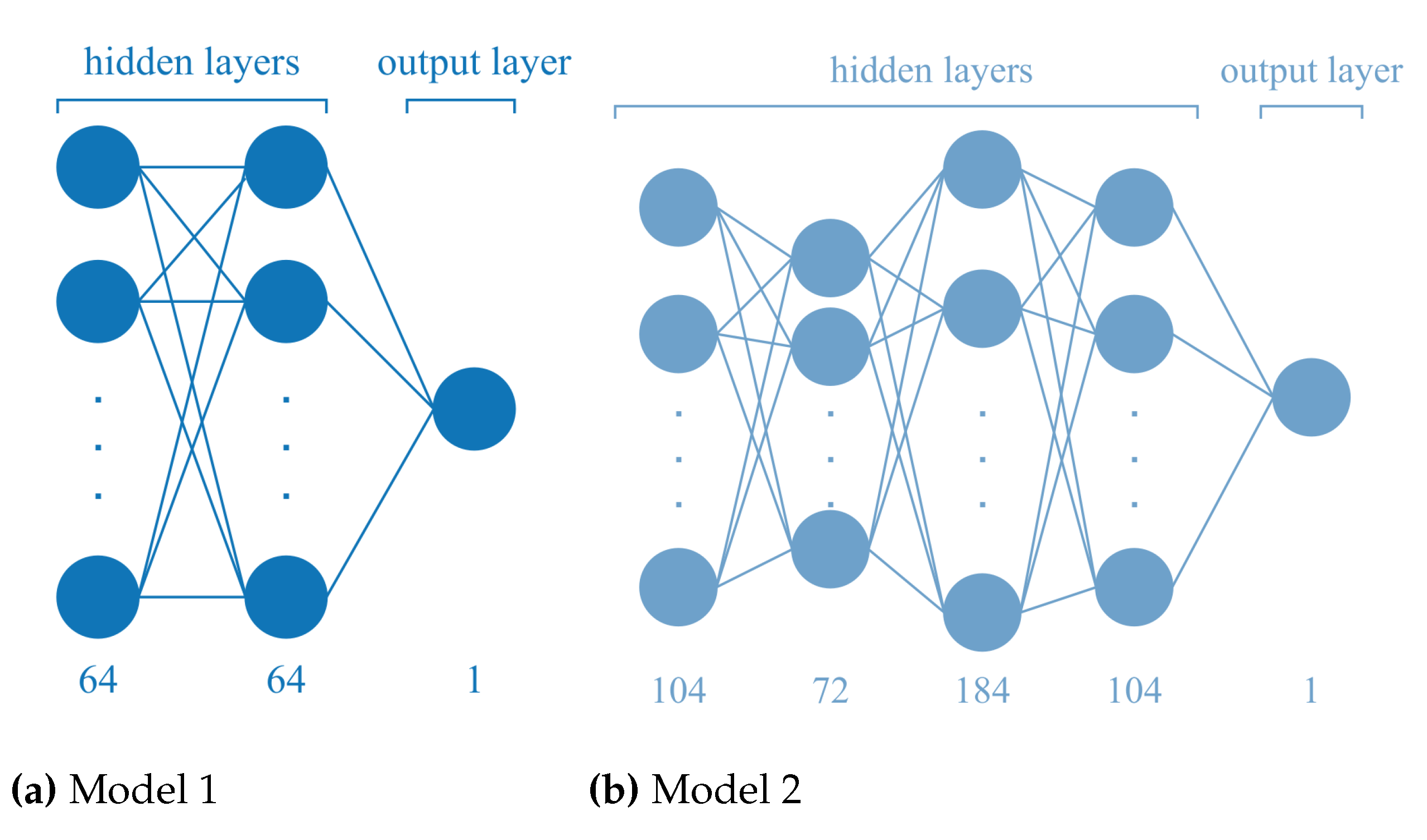

5.2. Time Series Prediction

5.2.1. Data Preprocessing

5.2.2. Models

6. Results

- At least one of the proposed activation functions outperformed sigmoid in every case;

- The range of an activation function deserves attention when solving problems with deep learning;

- Arctan is the best for the multiclass classification problem;

- Arctan e is the best of the arctan variants for the time series prediction problem, although leaky ReLU and swish reach a lower RMSE in the reported tables;

- The proposed activation functions are shown to learn stably across different neuron counts and model depths.

7. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| EXIST | Energy Exchange Istanbul |
| RMSE | Root Mean Squared Error |
| MSE | Mean Squared Error |
| PACF | Partial Autocorrelation Function |
| ACF | Autocorrelation Function |
| DNN | Deep Neural Network |
| ReLU | Rectified Linear Unit |
| FCNN | Fully Connected Neural Network |
| CNN | Convolutional Neural Network |
| LSTM | Long Short-Term Memory |


| Evaluation Metrics | sigmoid | tanh | ReLU | leaky ReLU | swish | arctan | arctan | arctan | arctan e | self arctan |
|---|---|---|---|---|---|---|---|---|---|---|
| Precision1 | 0.29 | 0.65 | 0.41 | 0.61 | 0.62 | 0.67 | 0.36 | 0.61 | 0.48 | 0.40 |
| Recall1 | 0.25 | 0.45 | 0.32 | 0.42 | 0.43 | 0.45 | 0.31 | 0.44 | 0.38 | 0.29 |
| F1Score1 | 0.25 | 0.50 | 0.35 | 0.46 | 0.47 | 0.50 | 0.31 | 0.48 | 0.40 | 0.31 |
| Precision2 | 0.71 | 0.78 | 0.74 | 0.77 | 0.78 | 0.78 | 0.73 | 0.77 | 0.75 | 0.74 |
| Recall2 | 0.76 | 0.79 | 0.77 | 0.78 | 0.79 | 0.79 | 0.76 | 0.79 | 0.78 | 0.77 |
| F1Score2 | 0.72 | 0.77 | 0.75 | 0.77 | 0.77 | 0.78 | 0.74 | 0.77 | 0.76 | 0.77 |

| Evaluation Metrics | sigmoid | tanh | ReLU | leaky ReLU | swish | arctan | arctan | arctan | arctan e | self arctan |
|---|---|---|---|---|---|---|---|---|---|---|
| Precision1 | 0.72 | 0.71 | 0.71 | 0.72 | 0.71 | 0.69 | 0.74 | 0.72 | 0.75 | 0.71 |
| Recall1 | 0.49 | 0.54 | 0.54 | 0.52 | 0.55 | 0.55 | 0.53 | 0.56 | 0.53 | 0.53 |
| F1Score1 | 0.55 | 0.58 | 0.57 | 0.58 | 0.59 | 0.59 | 0.59 | 0.60 | 0.58 | 0.57 |
| Precision2 | 0.79 | 0.80 | 0.80 | 0.79 | 0.80 | 0.80 | 0.80 | 0.81 | 0.80 | 0.80 |
| Recall2 | 0.79 | 0.80 | 0.80 | 0.80 | 0.81 | 0.80 | 0.80 | 0.81 | 0.80 | 0.80 |
| F1Score2 | 0.78 | 0.79 | 0.78 | 0.79 | 0.80 | 0.79 | 0.79 | 0.80 | 0.79 | 0.79 |
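The surviving text does not define the suffixes 1 and 2 on the precision, recall, and F1 rows. One common way to report two sets of multiclass scores is macro averaging (every class counts equally) alongside support-weighted averaging (classes count in proportion to their frequency). The sketch below computes both under that assumption, which the source does not confirm; the function names and the toy labels are hypothetical.

```python
from collections import Counter


def per_class_scores(y_true, y_pred):
    """Return {label: (precision, recall, f1)} for every label seen."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = {}
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        scores[c] = (precision, recall, f1)
    return scores


def averaged_scores(y_true, y_pred, weighted=False):
    """Average per-class scores; macro by default, support-weighted if asked."""
    scores = per_class_scores(y_true, y_pred)
    if weighted:
        support = Counter(y_true)
        weights = {c: support[c] / len(y_true) for c in scores}
    else:
        weights = {c: 1.0 / len(scores) for c in scores}
    return tuple(
        sum(weights[c] * scores[c][i] for c in scores) for i in range(3)
    )


# Toy, imbalanced labels (hypothetical, not from the paper's dataset).
y_true = [0, 0, 0, 0, 1, 1, 2]
y_pred = [0, 0, 0, 0, 1, 0, 0]
macro = averaged_scores(y_true, y_pred)
weighted = averaged_scores(y_true, y_pred, weighted=True)
```

On imbalanced data the weighted averages are dominated by the frequent classes, so they tend to sit well above the macro averages whenever rare classes are predicted poorly.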

| Evaluation Metrics | sigmoid | tanh | ReLU | leaky ReLU | swish | arctan | arctan | arctan | arctan e | self arctan |
|---|---|---|---|---|---|---|---|---|---|---|
| RMSE | 0.17 | 0.19 | 0.18 | 0.12 | 0.12 | 0.17 | 0.43 | 0.25 | 0.14 | 0.39 |
| MSE | 0.03 | 0.03 | 0.03 | 0.01 | 0.01 | 0.02 | 0.19 | 0.06 | 0.02 | 0.15 |
| R² score | 0.63 | 0.54 | 0.61 | 0.82 | 0.82 | 0.66 | -1.20 | 0.22 | 0.74 | -0.76 |

| Evaluation Metrics | sigmoid | tanh | ReLU | leaky ReLU | swish | arctan | arctan | arctan | arctan e | self arctan |
|---|---|---|---|---|---|---|---|---|---|---|
| RMSE | 0.18 | 0.20 | 0.23 | 0.13 | 0.12 | 0.17 | 0.42 | 0.27 | 0.14 | 0.38 |
| MSE | 0.03 | 0.04 | 0.05 | 0.01 | 0.01 | 0.02 | 0.18 | 0.07 | 0.02 | 0.15 |
| R² score | 0.61 | 0.50 | 0.34 | 0.79 | 0.80 | 0.65 | -1.12 | 0.14 | 0.76 | -0.74 |
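Several columns in the time series tables report a negative R² score, which can look like an error at first glance. The sketch below uses the standard definitions of MSE, RMSE, and R² to show how the three metrics relate and why R² drops below zero when a model predicts worse than the mean of the targets. The helper name and the toy values are hypothetical and are not the paper's data.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MSE, RMSE, and R^2 for two equal-length sequences."""
    n = len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    mse = ss_res / n
    return {
        "mse": mse,
        "rmse": math.sqrt(mse),        # RMSE is the square root of MSE
        "r2": 1.0 - ss_res / ss_tot,   # below 0 when worse than the mean
    }


# Toy targets scaled to [0, 1] (hypothetical values).
y_true = [0.2, 0.4, 0.6, 0.8]
close_fit = regression_metrics(y_true, [0.25, 0.35, 0.65, 0.75])
poor_fit = regression_metrics(y_true, [1.0, 1.0, 1.0, 1.0])
```

Because RMSE is the square root of MSE, the two rows of each table carry the same ranking; for example, an RMSE of 0.43 corresponds to an MSE of roughly 0.19, as reported.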


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).