Submitted: 04 November 2025

Posted: 05 November 2025


Abstract

Keywords:

1. Introduction

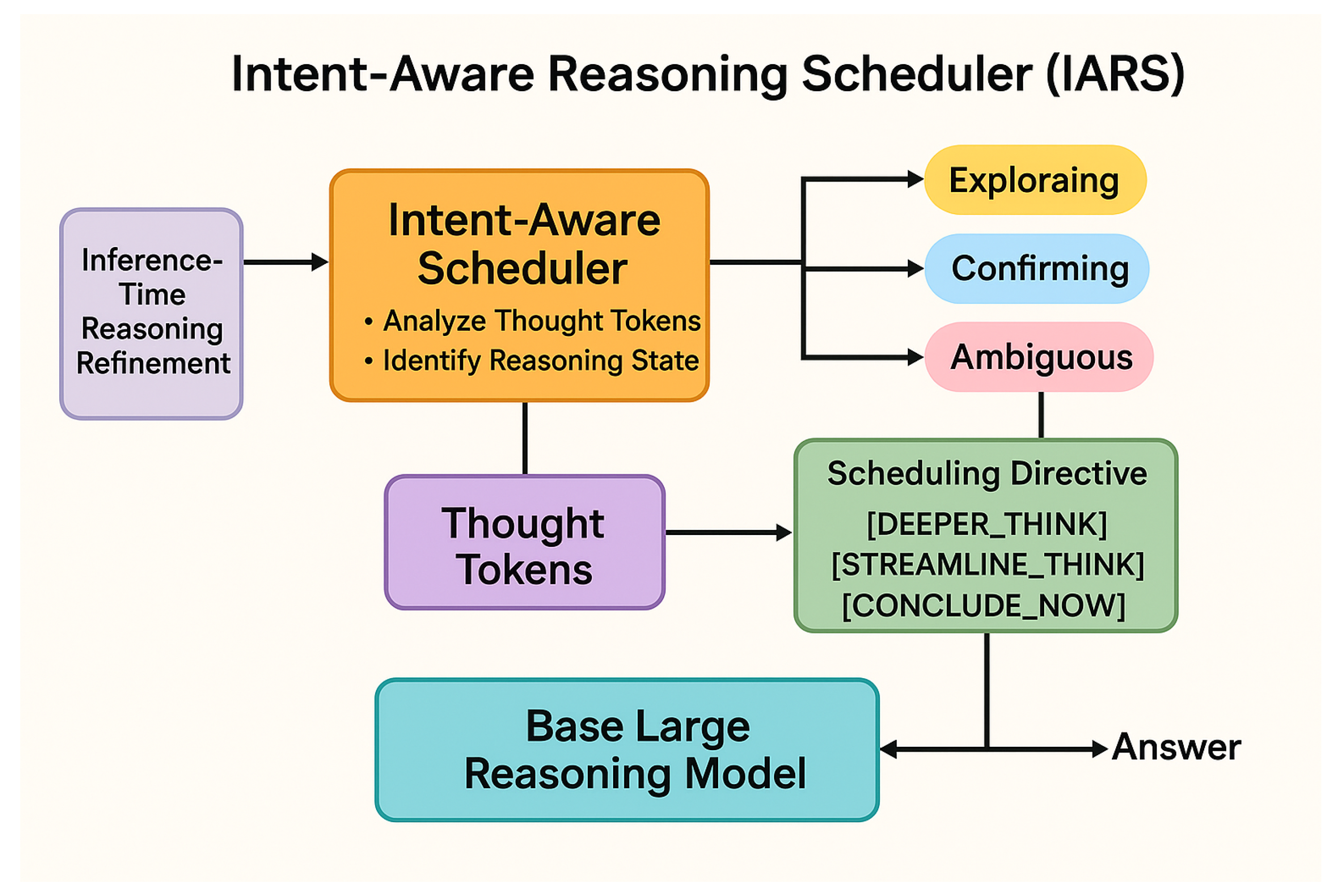

- We propose the Intent-Aware Reasoning Scheduler (IARS), a novel framework that enables large language models to dynamically adjust their thinking depth and strategy during inference by perceiving their internal reasoning intent and progress state.

- We introduce the concept of an Intent-Aware Scheduler that monitors the LLM’s generated thought tokens in real-time and issues dynamic, context-specific scheduling directives, significantly enhancing the adaptability of inference-time reasoning.

- We demonstrate through extensive experiments on diverse reasoning benchmarks that IARS consistently achieves superior accuracy and efficiency compared to existing state-of-the-art inference-time control methods, all without requiring any re-training of the base large language model.

2. Related Work

2.1. Large Language Model Reasoning and Chain-of-Thought

2.2. Dynamic Inference-Time Control and Adaptive Thinking for LLMs

3. Method

3.1. Overview of the IARS Framework

3.2. The Intent-Aware Scheduler (IAS)

3.2.1. Intent State Detection

3.2.2. Dynamic Scheduling Directives

3.3. Integration and Inference-Time Modulation

4. Experiments

4.1. Experimental Setup

4.1.1. Base Models

- DeepSeek-R1-Distill-Qwen-1.5B (1.5 billion parameters)

- DeepSeek-R1-Distill-Qwen-7B (7 billion parameters)

- Qwen QwQ-32B (32 billion parameters)

4.1.2. Datasets and Benchmarks

- Mathematical Reasoning: AIME 2024 (AIME24), AMC 2023 (AMC23), Minerva-Math, and MATH500. These datasets demand multi-step arithmetic, algebraic, and geometric problem-solving.

- Code Generation: LiveCodeBench (LiveCode). This benchmark assesses the model’s ability to generate correct and efficient code solutions given problem descriptions.

- Scientific Reasoning: OlympiadBench (Olympiad). This dataset features complex science problems requiring deep understanding and logical deduction.

4.1.3. Evaluation Metrics

- Pass@1 (%): This metric measures the percentage of problems for which the model generates a correct final answer on its first attempt. It is the primary indicator of reasoning accuracy.

- Average Generated Token Count (#Tk): This metric quantifies the average number of tokens generated by the model during its entire reasoning process, including intermediate thoughts and the final answer. A lower token count, coupled with high accuracy, indicates a more efficient and less verbose reasoning trajectory.
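The two metrics above can be computed from per-problem records as in the minimal sketch below. The `Attempt` record layout and the function names are assumptions made for illustration; they are not taken from the paper's evaluation code.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    """One first-attempt generation for one problem (hypothetical layout)."""
    correct: bool     # final answer matched the reference
    num_tokens: int   # all generated tokens: intermediate thoughts plus answer


def pass_at_1(attempts: list[Attempt]) -> float:
    """Percentage of problems answered correctly on the first attempt."""
    if not attempts:
        raise ValueError("no attempts to score")
    return 100.0 * sum(a.correct for a in attempts) / len(attempts)


def avg_tokens(attempts: list[Attempt]) -> float:
    """Average number of generated tokens per problem (#Tk)."""
    if not attempts:
        raise ValueError("no attempts to score")
    return sum(a.num_tokens for a in attempts) / len(attempts)


# Toy data, not results from the paper.
attempts = [
    Attempt(True, 3000),
    Attempt(False, 5000),
    Attempt(True, 4000),
    Attempt(False, 8000),
]
print(pass_at_1(attempts))   # 50.0
print(avg_tokens(attempts))  # 5000.0
```

Both numbers are reported together because a lower #Tk only indicates a more efficient trajectory when Pass@1 does not drop.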

4.1.4. Comparison Methods

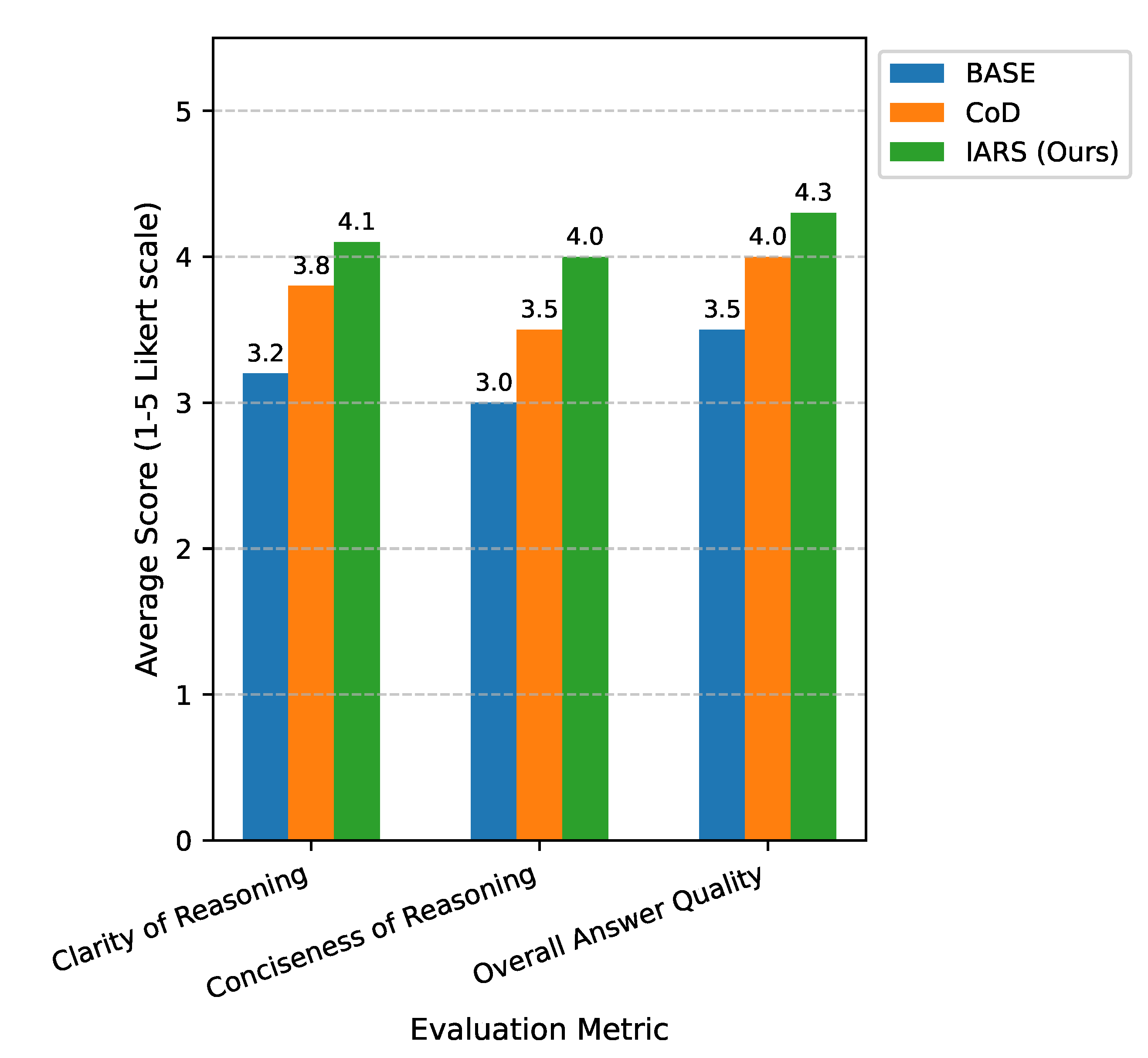

- BASE: The raw large reasoning model (LRM), run without any inference-time modulation or specialized reasoning prompts.

- CoD (Chain-of-Density): A robust baseline that utilizes advanced prompt engineering to encourage dense, multi-faceted reasoning, as referenced in the AlphaOne paper.

- AlphaOne (s1* / CoD): The state-of-the-art inference-time modulation strategy proposed by AlphaOne, which dynamically inserts "wait" tokens and switches between slow and fast thinking at predefined α-moments. It is our most direct point of comparison.

4.2. Experimental Results

4.2.1. Main Results on DeepSeek-R1-Distill-Qwen-1.5B

4.2.2. Analysis of IARS Effectiveness

4.3. Human Evaluation

4.4. Comparison with State-of-the-Art (AlphaOne)

4.5. Scalability Analysis Across LRM Sizes

4.6. Ablation Study of IAS Components

- IARS-HeuristicSwitch: A basic dynamic switching mechanism driven by simple rules (e.g., fixed token-count thresholds), with no explicit intent detection and no decoding parameter modulation. It improves modestly over BASE (25.0% vs. 23.3% Pass@1 on AIME24) but falls well short of the full IARS (32.5%), indicating that simple heuristics are insufficient for complex adaptive reasoning.

- IARS-NoModulation: The IAS still detects intent and injects directive tokens, but these tokens do not trigger changes in the LRM’s decoding parameters (e.g., temperature, top-p); the LRM relies solely on the prompt-level directives. This configuration outperforms IARS-HeuristicSwitch (28.0% vs. 25.0% on AIME24), showing that injecting intent-aware prompts is beneficial on its own. The remaining gap to the full IARS (32.5%) indicates that dynamic decoding parameter modulation is needed for fine-grained control.

- IARS-NoIntent: The IAS uses dynamic decoding parameter modulation and prompt engineering, but its scheduling decisions are not based on intent state detection; they rely instead on simpler signals or a pre-programmed sequence. This configuration performs comparably to AlphaOne (30.0% vs. 31.0% on AIME24; 21.0% for both on LiveCode) but still falls short of the full IARS (32.5% and 22.0%). Real-time, context-sensitive Intent State Detection is therefore needed to reach IARS’s full performance.
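The ablation variants above differ in two switches: whether scheduling is driven by intent detection, and whether directives also change decoding parameters. The sketch below illustrates that structure only. The keyword-based detector, the intent state names, the directive strings, and every parameter value are assumptions made for illustration; the paper's actual IAS is not specified in this section.

```python
from dataclasses import dataclass

# All names, states, directive strings, and values here are hypothetical.


@dataclass(frozen=True)
class IASConfig:
    use_intent_detection: bool  # off: fall back to a fixed token threshold
    use_modulation: bool        # off: directives never change decoding params


VARIANTS = {
    "IARS-HeuristicSwitch": IASConfig(False, False),
    "IARS-NoModulation": IASConfig(True, False),
    "IARS-NoIntent": IASConfig(False, True),
    "IARS (Full)": IASConfig(True, True),
}

DEFAULT_PARAMS = {"temperature": 0.6, "top_p": 0.95}
MODULATED_PARAMS = {
    "exploring": {"temperature": 0.8, "top_p": 0.95},
    "uncertain": {"temperature": 0.6, "top_p": 0.95},
    "converging": {"temperature": 0.2, "top_p": 0.90},
}
DIRECTIVES = {
    "exploring": "[EXPLORE]",
    "uncertain": "[VERIFY]",
    "converging": "[CONCLUDE]",
}


def detect_intent(recent_thought: str) -> str:
    """Toy keyword detector standing in for Intent State Detection."""
    text = recent_thought.lower()
    if "wait" in text or "not sure" in text:
        return "uncertain"
    if "therefore" in text or "the answer is" in text:
        return "converging"
    return "exploring"


def schedule(config: IASConfig, recent_thought: str, tokens_so_far: int,
             token_threshold: int = 2000) -> tuple[str, dict]:
    """Return (directive token, decoding parameters) for the next segment."""
    if config.use_intent_detection:
        state = detect_intent(recent_thought)
    else:
        # Heuristic fallback: switch purely on a fixed token count.
        state = "exploring" if tokens_so_far < token_threshold else "converging"
    params = MODULATED_PARAMS[state] if config.use_modulation else DEFAULT_PARAMS
    return DIRECTIVES[state], dict(params)


for name, cfg in VARIANTS.items():
    print(name, schedule(cfg, "Wait, I am not sure this holds", 2500))
```

With the same thought text and token count, the two variants without intent detection emit a conclude directive from the threshold rule alone, while the two with detection respond to the uncertainty cue.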

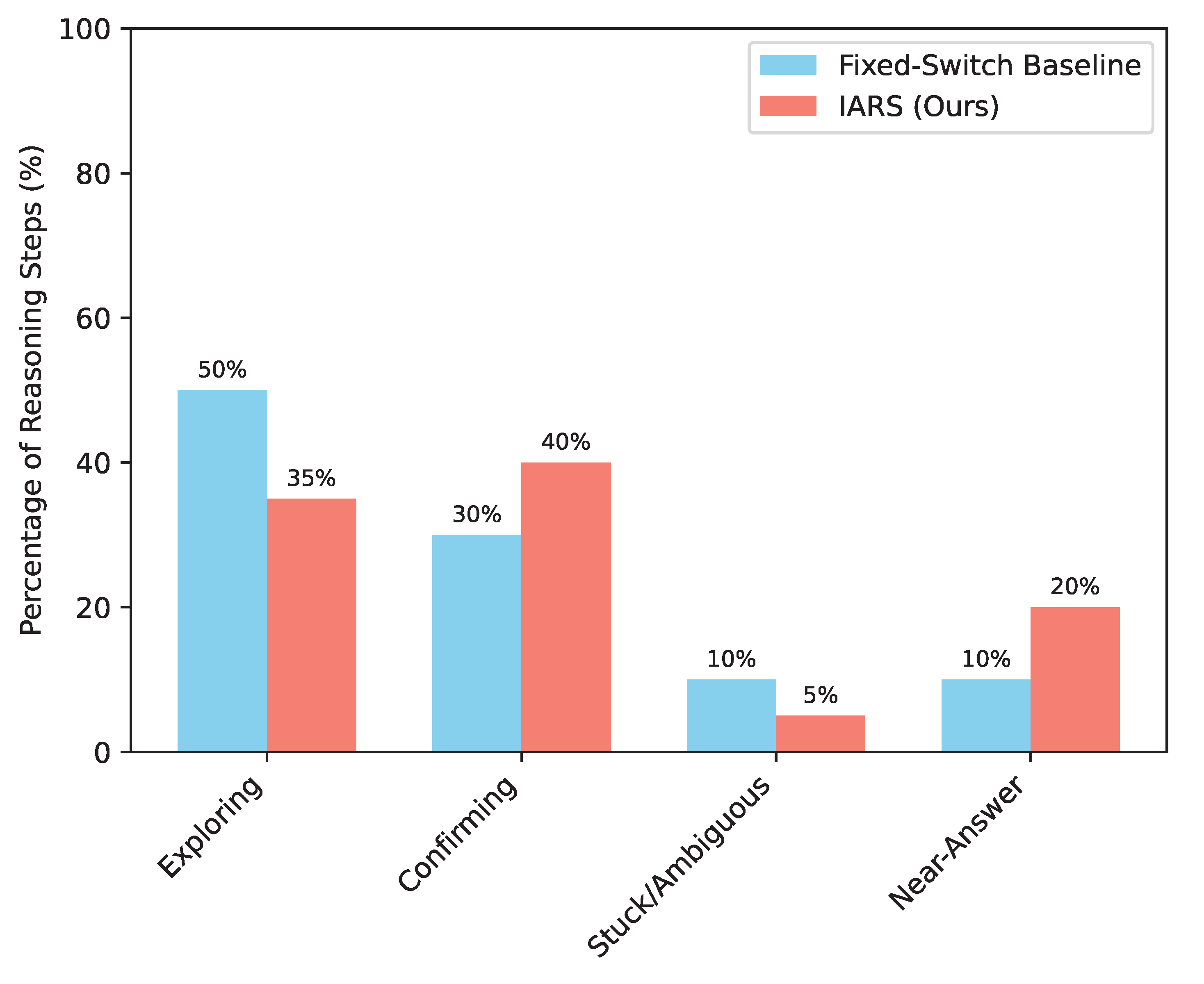

4.7. Analysis of Intent State Dynamics

5. Conclusion

| Model & Method | Benchmark | Pass@1 (%) | #Tk (Avg. Tokens) |
|---|---|---|---|
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | AIME24 | 23.3 | 7280 |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | AMC23 | 57.5 | 5339 |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | Minerva-Math | 32.0 | 4935 |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | MATH500 | 79.2 | 3773 |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | LiveCode | 17.8 | 6990 |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | Olympiad | 38.8 | 5999 |
| CoD | AIME24 | 30.0 (+6.7) | 6994 |
| CoD | AMC23 | 65.0 (+7.5) | 5415 |
| CoD | Minerva-Math | 29.0 (-3.0) | 4005 |
| CoD | MATH500 | 81.4 (+2.2) | 3136 |
| CoD | LiveCode | 20.3 (+2.5) | 6657 |
| CoD | Olympiad | 40.6 (+1.8) | 5651 |
| IARS (Ours) | AIME24 | 32.5 (+9.2) | 6850 |
| IARS (Ours) | AMC23 | 67.0 (+9.5) | 5200 |
| IARS (Ours) | Minerva-Math | 33.5 (+1.5) | 4050 |
| IARS (Ours) | MATH500 | 83.0 (+3.8) | 3050 |
| IARS (Ours) | LiveCode | 22.0 (+4.2) | 6400 |
| IARS (Ours) | Olympiad | 42.5 (+3.7) | 5450 |

| Model & Method | Benchmark | Pass@1 (%) | Improvement vs. BASE (%) | #Tk (Avg. Tokens) | Reduction vs. BASE (#Tk) |
|---|---|---|---|---|---|
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | AIME24 | 23.3 | - | 7280 | - |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | AMC23 | 57.5 | - | 5339 | - |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | Minerva-Math | 32.0 | - | 4935 | - |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | MATH500 | 79.2 | - | 3773 | - |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | LiveCode | 17.8 | - | 6990 | - |
| DeepSeek-R1-Distill-Qwen-1.5B (BASE) | Olympiad | 38.8 | - | 5999 | - |
| AlphaOne (s1* / CoD) | AIME24 | 31.0 | +7.7 | 6900 | 380 |
| AlphaOne (s1* / CoD) | AMC23 | 66.0 | +8.5 | 5300 | 39 |
| AlphaOne (s1* / CoD) | Minerva-Math | 31.0 | -1.0 | 4100 | 835 |
| AlphaOne (s1* / CoD) | MATH500 | 82.0 | +2.8 | 3100 | 673 |
| AlphaOne (s1* / CoD) | LiveCode | 21.0 | +3.2 | 6500 | 490 |
| AlphaOne (s1* / CoD) | Olympiad | 41.5 | +2.7 | 5550 | 449 |
| IARS (Ours) | AIME24 | 32.5 | +9.2 | 6850 | 430 |
| IARS (Ours) | AMC23 | 67.0 | +9.5 | 5200 | 139 |
| IARS (Ours) | Minerva-Math | 33.5 | +1.5 | 4050 | 885 |
| IARS (Ours) | MATH500 | 83.0 | +3.8 | 3050 | 723 |
| IARS (Ours) | LiveCode | 22.0 | +4.2 | 6400 | 590 |
| IARS (Ours) | Olympiad | 42.5 | +3.7 | 5450 | 549 |
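The two derived columns in the table above are plain differences against the BASE row for the same benchmark: the improvement is a difference in Pass@1 percentage points, and the reduction is a difference in average token count. The short sketch below recomputes them for the IARS rows; the dictionary layout and the `deltas` helper are illustrative assumptions, while the numbers are copied from the table.

```python
# (Pass@1 %, average tokens) per benchmark, copied from the table above.
BASE = {
    "AIME24": (23.3, 7280),
    "AMC23": (57.5, 5339),
    "Minerva-Math": (32.0, 4935),
    "MATH500": (79.2, 3773),
    "LiveCode": (17.8, 6990),
    "Olympiad": (38.8, 5999),
}
IARS = {
    "AIME24": (32.5, 6850),
    "AMC23": (67.0, 5200),
    "Minerva-Math": (33.5, 4050),
    "MATH500": (83.0, 3050),
    "LiveCode": (22.0, 6400),
    "Olympiad": (42.5, 5450),
}


def deltas(method: dict, base: dict) -> dict:
    """Map benchmark -> (Pass@1 gain in points, tokens saved vs. base)."""
    out = {}
    for bench, (acc, tokens) in method.items():
        base_acc, base_tokens = base[bench]
        # Round to one decimal to avoid floating-point noise in the gain.
        out[bench] = (round(acc - base_acc, 1), base_tokens - tokens)
    return out


for bench, (gain, saved) in deltas(IARS, BASE).items():
    print(f"{bench}: {gain:+.1f} points, {saved} fewer tokens")
```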

| Model & Method | Benchmark | Pass@1 (%) | #Tk (Avg. Tokens) |
|---|---|---|---|
| DeepSeek-R1-Distill-Qwen-7B (BASE) | AIME24 | 30.0 | 8000 |
| DeepSeek-R1-Distill-Qwen-7B (BASE) | AMC23 | 65.0 | 6000 |
| DeepSeek-R1-Distill-Qwen-7B (BASE) | Minerva-Math | 38.0 | 5500 |
| DeepSeek-R1-Distill-Qwen-7B (BASE) | MATH500 | 85.0 | 4000 |
| DeepSeek-R1-Distill-Qwen-7B (BASE) | LiveCode | 25.0 | 7500 |
| DeepSeek-R1-Distill-Qwen-7B (BASE) | Olympiad | 45.0 | 6500 |
| CoD | AIME24 | 35.0 | 7800 |
| CoD | AMC23 | 70.0 | 5800 |
| CoD | Minerva-Math | 36.0 | 5000 |
| CoD | MATH500 | 86.5 | 3800 |
| CoD | LiveCode | 28.0 | 7200 |
| CoD | Olympiad | 46.5 | 6200 |
| AlphaOne (s1* / CoD) | AIME24 | 36.5 | 7600 |
| AlphaOne (s1* / CoD) | AMC23 | 71.5 | 5700 |
| AlphaOne (s1* / CoD) | Minerva-Math | 39.0 | 5100 |
| AlphaOne (s1* / CoD) | MATH500 | 87.5 | 3700 |
| AlphaOne (s1* / CoD) | LiveCode | 29.5 | 7000 |
| AlphaOne (s1* / CoD) | Olympiad | 47.5 | 6100 |
| IARS (Ours) | AIME24 | 38.0 | 7400 |
| IARS (Ours) | AMC23 | 73.0 | 5500 |
| IARS (Ours) | Minerva-Math | 40.5 | 4900 |
| IARS (Ours) | MATH500 | 88.5 | 3600 |
| IARS (Ours) | LiveCode | 31.0 | 6800 |
| IARS (Ours) | Olympiad | 49.0 | 5900 |

| Model & Method | Benchmark | Pass@1 (%) | #Tk (Avg. Tokens) |
|---|---|---|---|
| Qwen QwQ-32B (BASE) | AIME24 | 35.0 | 9000 |
| Qwen QwQ-32B (BASE) | AMC23 | 70.0 | 7000 |
| Qwen QwQ-32B (BASE) | Minerva-Math | 45.0 | 6000 |
| Qwen QwQ-32B (BASE) | MATH500 | 90.0 | 4500 |
| Qwen QwQ-32B (BASE) | LiveCode | 30.0 | 8000 |
| Qwen QwQ-32B (BASE) | Olympiad | 50.0 | 7000 |
| CoD | AIME24 | 40.0 | 8800 |
| CoD | AMC23 | 75.0 | 6800 |
| CoD | Minerva-Math | 43.0 | 5500 |
| CoD | MATH500 | 91.5 | 4300 |
| CoD | LiveCode | 33.0 | 7700 |
| CoD | Olympiad | 51.5 | 6700 |
| AlphaOne (s1* / CoD) | AIME24 | 41.5 | 8600 |
| AlphaOne (s1* / CoD) | AMC23 | 76.5 | 6700 |
| AlphaOne (s1* / CoD) | Minerva-Math | 46.0 | 5600 |
| AlphaOne (s1* / CoD) | MATH500 | 92.5 | 4200 |
| AlphaOne (s1* / CoD) | LiveCode | 34.5 | 7500 |
| AlphaOne (s1* / CoD) | Olympiad | 52.5 | 6600 |
| IARS (Ours) | AIME24 | 43.0 | 8400 |
| IARS (Ours) | AMC23 | 78.0 | 6500 |
| IARS (Ours) | Minerva-Math | 47.5 | 5400 |
| IARS (Ours) | MATH500 | 93.5 | 4100 |
| IARS (Ours) | LiveCode | 36.0 | 7300 |
| IARS (Ours) | Olympiad | 54.0 | 6400 |

| Method | Benchmark | Pass@1 (%) | #Tk (Avg. Tokens) |
|---|---|---|---|
| BASE | AIME24 | 23.3 | 7280 |
| BASE | LiveCode | 17.8 | 6990 |
| IARS-HeuristicSwitch | AIME24 | 25.0 | 7100 |
| IARS-HeuristicSwitch | LiveCode | 19.0 | 6800 |
| IARS-NoModulation | AIME24 | 28.0 | 7000 |
| IARS-NoModulation | LiveCode | 20.5 | 6700 |
| IARS-NoIntent | AIME24 | 30.0 | 6900 |
| IARS-NoIntent | LiveCode | 21.0 | 6500 |
| IARS (Full) | AIME24 | 32.5 | 6850 |
| IARS (Full) | LiveCode | 22.0 | 6400 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).