Submitted: 29 September 2025

Posted: 29 September 2025


Abstract

Keywords:

1. Introduction

2. Methodology

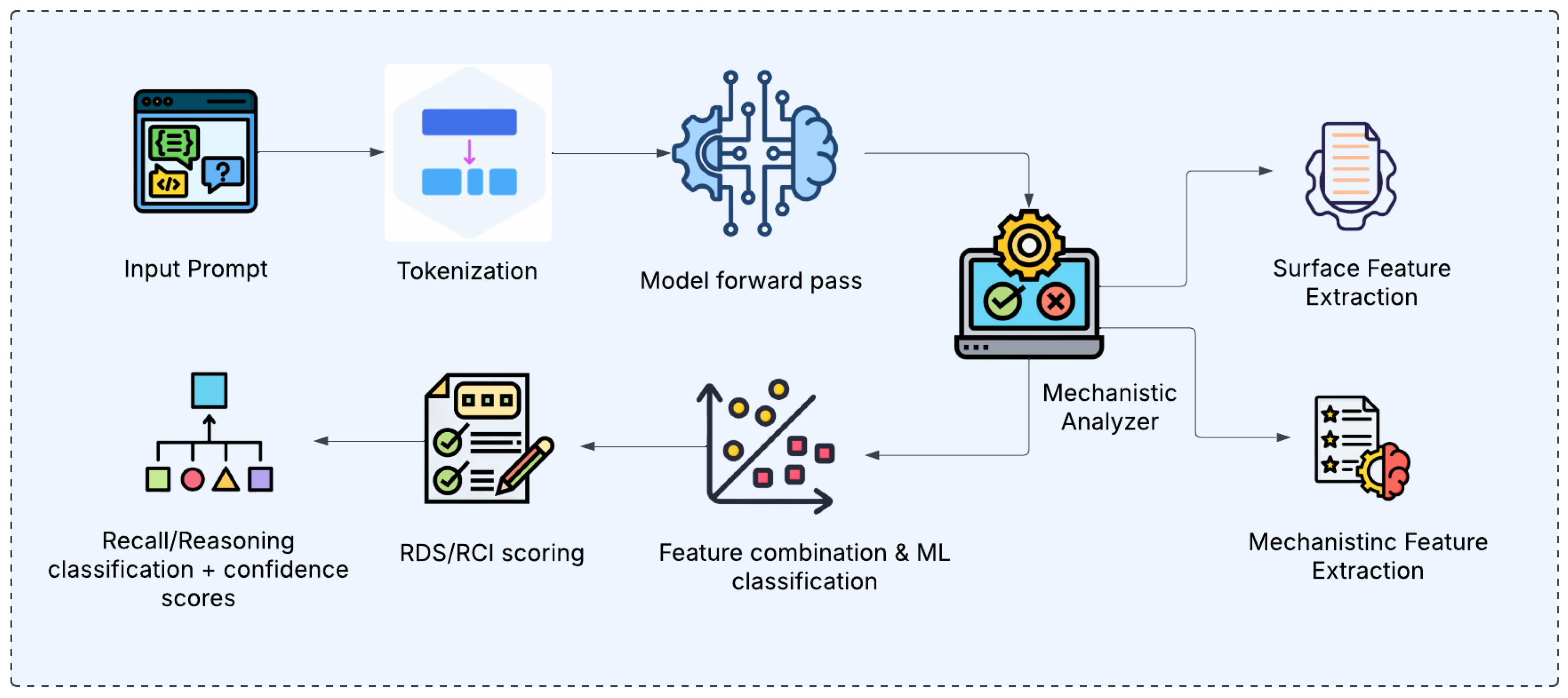

2.1. Framework Architecture

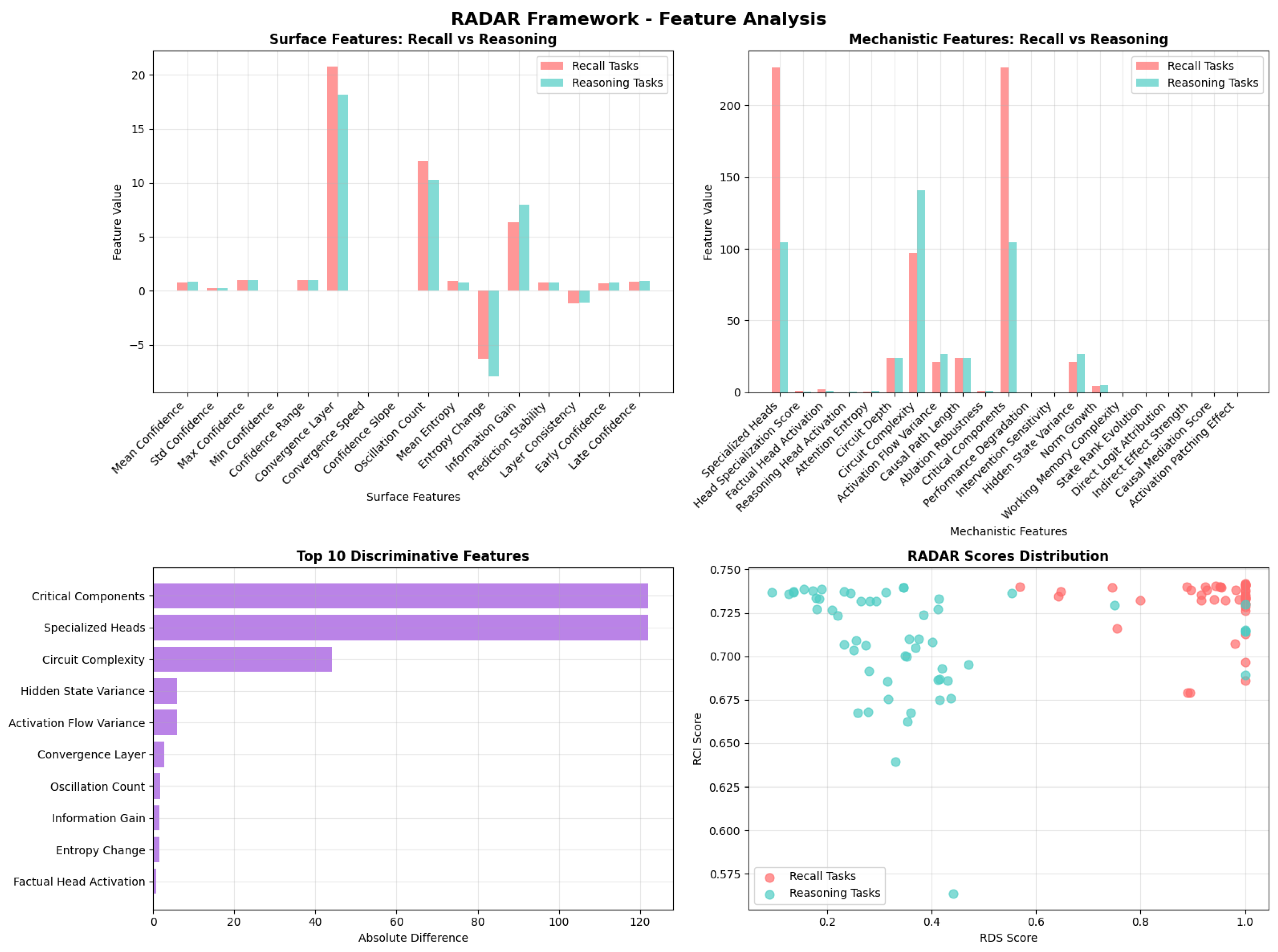

2.2. Feature Engineering

2.3. Classification System

3. Experiments and Results

3.1. Experimental Setup and Results

3.2. Feature Analysis

4. Discussion and Implications

4.1. Contamination Detection Applications

4.2. Interpretability Insights

5. Conclusion

Appendix A. Implementation Details

Appendix A.1. Model Configuration

Appendix A.2. Feature Computation

Appendix A.3. Training Procedure

Appendix B. Training and Test Datasets

Appendix B.1. Training Dataset

| Category | Count |
|---|---|
| Total Examples | 30 |
| Recall Examples | 15 |
| Reasoning Examples | 15 |

| Prompt | Label |
|---|---|
| “The capital of France is” | recall |
| “If X is the capital of France, then X is” | reasoning |
| “2 + 2 equals” | recall |
| “If a triangle has angles 60, 60, and X degrees, then X equals” | reasoning |

Appendix B.2. Test Dataset

| Category | Count |
|---|---|
| Total Examples | 100 |
| Clear Recall Examples | 20 |
| Clear Reasoning Examples | 20 |
| Challenging/Ambiguous Cases | 30 |
| Complex Reasoning Cases | 30 |

| Category | Example Prompt | Label |
|---|---|---|
| Clear Recall | “The capital of Germany is” | recall |
| Clear Reasoning | “If a rectangle has length 5 and width 3, its area is” | reasoning |
| Challenging/Ambiguous | “What is the sum of 10 and 15?” | reasoning |
| Complex Reasoning | “If a store has 100 items and sells 30% of them, how many items remain?” | reasoning |

Appendix B.3. Why Challenging or Ambiguous Prompts Are Difficult

- Some prompts may appear to require reasoning (e.g., arithmetic) but can be solved by memorized recall if the model has seen similar examples during training.

- Conversely, some factual prompts may trigger reasoning-like processing if the information is incomplete or framed indirectly.

- Ambiguity arises when the surface form of the task does not clearly signal whether the solution requires stored knowledge or active inference.

Appendix C. Scoring

- Recall Detection Score (RDS): Indicates how strongly the analysis suggests a recall-based process, combining specific surface and mechanistic features.

- Reasoning Complexity Index (RCI): Reflects the complexity and depth of processing, suggesting a reasoning-based process. Derived from a combination of surface and mechanistic features.

- Mechanistic Score: Focuses on features related to causal effects and intervention sensitivity.

- Circuit Complexity Score: Based on features describing the depth and complexity of the activated computational graph.

Appendix D. Feature Documentation

Appendix D.1. Surface Features (16 Features)

Appendix D.1.1. Confidence-Based Features (8 Features)

| Feature | Type | Definition & Computation |
|---|---|---|
| mean_confidence | float | Mean confidence across all layers: $\bar{c} = \frac{1}{L}\sum_{l=1}^{L} c_l$, where $c_l$ is the maximum softmax probability at layer $l$ and $L$ is the total number of layers. |
| std_confidence | float | Standard deviation of the confidence trajectory: $\sigma_c = \operatorname{std}(c_1, \dots, c_L)$. Higher values indicate more variable confidence across layers. |
| max_confidence | float | Maximum confidence achieved: $c_{\max} = \max_l c_l$. Indicates the peak certainty reached by the model. |
| min_confidence | float | Minimum confidence observed: $c_{\min} = \min_l c_l$. Represents the lowest certainty point in processing. |
| confidence_range | float | Range of confidence values: $c_{\max} - c_{\min}$. Measures the span of confidence variation across layers. |
| convergence_layer | int | Layer index where maximum confidence is achieved: $l^{*} = \arg\max_l c_l$. Earlier convergence may indicate simpler recall tasks. |
| convergence_speed | float | Inverse of the convergence layer $l^{*}$. Higher values indicate faster convergence to high confidence. |
| confidence_slope | float | Slope of the least-squares linear fit of the confidence $c_l$ against the layer index $l$. Positive slopes indicate increasing confidence. |
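As a rough illustration, the confidence-based features can be computed from a list of per-layer confidences. The sketch below is not the authors' implementation: the function name `confidence_features`, the 1-based layer indexing, the use of the population standard deviation, the form `1 / convergence_layer` for the convergence speed, and the tie-breaking rule (first layer that reaches the maximum) are all assumptions made for the example.

```python
from statistics import mean, pstdev


def confidence_features(conf):
    """Summarize a per-layer confidence trajectory c_1..c_L.

    `conf[i]` is assumed to be the maximum softmax probability at layer i + 1.
    """
    num_layers = len(conf)
    layers = list(range(1, num_layers + 1))  # 1-based layer indices (assumption)
    c_bar = mean(conf)
    l_bar = mean(layers)

    # Ordinary least-squares slope of confidence against layer index.
    num = sum((l - l_bar) * (c - c_bar) for l, c in zip(layers, conf))
    den = sum((l - l_bar) ** 2 for l in layers)

    # First layer at which the maximum confidence is reached (assumed tie-break).
    conv_layer = layers[conf.index(max(conf))]

    return {
        "mean_confidence": c_bar,
        "std_confidence": pstdev(conf),  # population std; the source does not specify
        "max_confidence": max(conf),
        "min_confidence": min(conf),
        "confidence_range": max(conf) - min(conf),
        "convergence_layer": conv_layer,
        "convergence_speed": 1.0 / conv_layer,  # assumed form of "inverse"
        "confidence_slope": num / den if den else 0.0,
    }


features = confidence_features([0.2, 0.4, 0.6, 0.8])
print(features["convergence_layer"], round(features["confidence_slope"], 3))
```

For a trajectory that rises steadily, as in this toy input, the convergence layer is the last layer and the slope is positive.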

Appendix D.1.2. Trajectory Dynamics Features (4 Features)

| Feature | Type | Definition & Computation |
|---|---|---|
| oscillation_count | int | Number of sign changes in the discrete confidence derivative. Let $d_l = c_{l+1} - c_l$ for $l = 1, \dots, L-1$, where $c_l$ is the confidence at layer $l$. Then oscillation_count $= \#\{\, l : d_l \, d_{l+1} < 0 \,\}$, i.e., the number of consecutive derivatives with opposite sign. Zeros in $d$ are ignored for sign changes. |
| early_confidence | float | Mean confidence in the first half of layers. |
| late_confidence | float | Mean confidence in the second half of layers. |
| prediction_stability | float | Inverse of the confidence standard deviation. |
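The trajectory dynamics features can be sketched in a few lines of plain Python. This is an illustrative reading of the definitions, not the authors' code: the helper names are hypothetical, the oscillation count treats a zero difference as contributing no sign change, and the split point between the "first half" and "second half" of layers (`len(conf) // 2`) is an assumption.

```python
def oscillation_count(conf):
    """Count sign changes between consecutive first differences of the trajectory."""
    diffs = [b - a for a, b in zip(conf, conf[1:])]
    # A product below zero means two adjacent differences have opposite signs;
    # a zero difference never yields a negative product, so it is skipped.
    return sum(1 for a, b in zip(diffs, diffs[1:]) if a * b < 0)


def half_means(conf):
    """Return (early, late) mean confidence; assumes at least two layers."""
    mid = len(conf) // 2  # assumed split; with odd L the extra layer goes to the late half
    early, late = conf[:mid], conf[mid:]
    return sum(early) / len(early), sum(late) / len(late)


trajectory = [0.2, 0.5, 0.3, 0.6, 0.9]
print(oscillation_count(trajectory))
print(half_means(trajectory))
```

In this toy trajectory the confidence rises, dips, and rises again, so two sign changes are counted.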

Appendix D.1.3. Information-Theoretic Features (4 Features)

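| Feature | Type | Definition & Computation |
|---|---|---|
| mean_entropy | float | Average entropy across layers: $\bar{H} = \frac{1}{L}\sum_{l=1}^{L} H_l$, where $H_l$ is the entropy of the predicted probability distribution at layer $l$. |
| entropy_change | float | Change from first to last layer: $\Delta H = H_L - H_1$. |
| information_gain | float | Negative entropy change: $-\Delta H = H_1 - H_L$. |
| layer_consistency | float | Inverse of the entropy standard deviation. |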
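A minimal sketch of the entropy-based features follows, assuming Shannon entropy in nats and one probability distribution per layer. The function names are hypothetical, and `layer_consistency` is left out because the exact form of its inverse is not recoverable from the text.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def entropy_features(layer_probs):
    """`layer_probs[i]` is the predicted distribution at layer i + 1 (assumption)."""
    entropies = [shannon_entropy(p) for p in layer_probs]
    change = entropies[-1] - entropies[0]  # last layer minus first layer
    return {
        "mean_entropy": sum(entropies) / len(entropies),
        "entropy_change": change,
        "information_gain": -change,  # positive when entropy falls across layers
    }


# Toy input: a uniform two-way guess that sharpens into a certain prediction.
print(entropy_features([[0.5, 0.5], [1.0, 0.0]]))
```

With this sign convention, a model that becomes more certain with depth has a negative entropy change and a positive information gain.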

Appendix D.2. Mechanistic Features (21 Features)

Appendix D.2.1. Attention Specialization Features (5 Features)

| Feature | Type | Definition & Computation |
|---|---|---|
| num_specialized_heads | int | Total count of attention heads with entropy below a specialization threshold. |
| head_specialization_score | float | Normalized specialization measure. |
| factual_head_activation | float | Inversely related to attention entropy. |
| reasoning_head_activation | float | Proportional to attention entropy. |
| attention_entropy | float | Mean entropy across all attention heads. |
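The two features whose definitions are fully stated in words, the mean attention entropy and the count of low-entropy heads, can be sketched as below. The nested-list layout `attn[layer][head]`, the choice of a single attention distribution per head, the function names, and the threshold value used in the example are all assumptions for illustration; the source does not preserve the threshold it uses.

```python
import math


def attention_entropy(weights):
    """Shannon entropy (nats) of one attention distribution over key positions."""
    return -sum(w * math.log(w) for w in weights if w > 0)


def head_statistics(attn, threshold):
    """Summarize head entropies.

    `attn[layer][head]` is assumed to hold one attention distribution per head
    (for example, the row for the final query token).
    """
    entropies = [attention_entropy(head) for layer in attn for head in layer]
    return {
        "attention_entropy": sum(entropies) / len(entropies),
        "num_specialized_heads": sum(1 for e in entropies if e < threshold),
    }


# One layer, two heads: one attends to a single token, one attends uniformly.
toy_attn = [[[1.0, 0.0, 0.0, 0.0], [0.25, 0.25, 0.25, 0.25]]]
print(head_statistics(toy_attn, threshold=0.5))  # threshold is a hypothetical value
```

A head that concentrates all of its attention on one position has zero entropy and counts as specialized under any positive threshold, while a uniform head has the maximum entropy for its context length.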

Appendix D.2.2. Circuit Dynamics Features (4 Features)

| Feature | Type | Definition & Computation |
|---|---|---|
| effective_circuit_depth | float | Number of layers with significant causal effects. Equal to the number of attention layers analyzed. Represents the depth of the computational circuit. |
| circuit_complexity | float | Product of variance and norm growth. |
| activation_flow_variance | float | Variance in activation magnitudes across layers. Measures how much activation patterns change between layers, indicating computational complexity. |
| causal_path_length | float | Length of the causal computation path. Currently equal to circuit depth, representing the number of processing steps in the causal chain. |

Appendix D.2.3. Intervention Sensitivity Features (4 Features)

| Feature | Type | Definition & Computation |
|---|---|---|
| ablation_robustness | float | Robustness to component removal. |
| critical_component_count | int | Number of critical components. |
| performance_degradation_slope | float | Rate of performance degradation under intervention. |
| intervention_sensitivity | float | Sensitivity to interventions. |

Appendix D.2.4. Working Memory Features (4 Features)

| Feature | Type | Definition & Computation |
|---|---|---|
| hidden_state_variance | float | Variance in hidden state activations. Measures variability in internal representations across layers, indicating working memory usage. |
| norm_growth_trajectory | float | Growth pattern of activation norms. Tracks how activation magnitudes change across layers, indicating information accumulation. |
| working_memory_complexity | float | Complexity of working memory usage. Currently uses rank evolution as a proxy for working memory complexity. |
| state_rank_evolution | float | Evolution of representation rank. Measures how the effective dimensionality of representations changes across layers. |

Appendix D.2.5. Causal Effect Features (4 Features)

| Feature | Type | Definition & Computation |
|---|---|---|
| direct_logit_attribution | float | Direct causal effect on output. |
| indirect_effect_strength | float | Strength of indirect causal effects. |
| causal_mediation_score | float | Mediation effect strength. |
| activation_patching_effect | float | Proxy measure for activation patching. |

Appendix E. Feature Computation Pipeline

Appendix E.1. Surface Feature Extraction

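- Extract the confidence trajectory: the maximum softmax probability at each layer.

- Extract the entropy trajectory: the entropy of the probability distribution at each layer.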

- Compute statistical measures: mean, standard deviation, minimum, maximum, and range.

- Analyze trajectory dynamics: slope, oscillations, and convergence properties.

- Calculate information-theoretic measures.
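The first two steps of this pipeline can be sketched as follows, under the assumption that a vector of vocabulary logits is available for every layer (for instance by projecting each layer's hidden state through the output head; the source does not say how per-layer distributions are obtained). The function names are hypothetical and entropy is measured in nats.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def trajectories(per_layer_logits):
    """Return (confidence, entropy) lists with one entry per layer."""
    confidence, entropy = [], []
    for logits in per_layer_logits:
        probs = softmax(logits)
        confidence.append(max(probs))  # maximum softmax probability
        entropy.append(-sum(p * math.log(p) for p in probs if p > 0))
    return confidence, entropy


# Toy example: three layers over a three-token vocabulary, sharpening with depth.
conf, ent = trajectories([[0.0, 0.0, 0.0], [2.0, 0.0, 0.0], [6.0, 0.0, 0.0]])
print([round(c, 3) for c in conf])
print([round(h, 3) for h in ent])
```

The remaining steps (statistics, trajectory dynamics, and information-theoretic measures) operate only on these two lists.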

Appendix E.2. Mechanistic Feature Extraction

- Analyze attention patterns across all layers and heads.

- Compute the attention entropy for each head.

- Identify specialized heads: those whose attention entropy falls below the specialization threshold.

- Analyze activation patterns (variance, norms, and rank evolution).

- Compute proxy causal effects from attention entropy.

- Calculate intervention sensitivity measures.

Appendix F. Important Notes and Limitations

Appendix F.1. Proxy Measures

- Causal effects: Derived from attention entropy instead of actual interventions.

- Activation patching: Approximated via attention entropy proxy, not true patching experiments.

- Critical components: Approximated using specialized head counts.

- Working memory: Approximated using rank evolution as a complexity proxy.

Appendix F.2. Computational Considerations

- All features can be computed in a single forward pass.

- No gradient computation is required for feature extraction.

- Attention patterns are analyzed across all layers and heads.

- Surface features require only the output probability distributions.

Appendix G. Usage in Classification


| Overall Performance | | Category-wise Performance | |
|---|---|---|---|
| Overall Accuracy | 93.0% | Clear Recall | 100% (20/20) |
| Recall Tasks | 97.7% | Clear Reasoning | 100% (20/20) |
| Reasoning Tasks | 89.3% | Challenging Cases | 76.7% (23/30) |
| | | Complex Reasoning | 100% (30/30) |
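The category-wise counts in this table can be checked against the reported overall accuracy with a few lines of arithmetic. The snippet below uses only the counts shown in the table; the variable names are illustrative.

```python
# (correct, total) per test category, copied from the results table.
categories = {
    "Clear Recall": (20, 20),
    "Clear Reasoning": (20, 20),
    "Challenging Cases": (23, 30),
    "Complex Reasoning": (30, 30),
}

correct = sum(c for c, _ in categories.values())
total = sum(n for _, n in categories.values())
overall_accuracy = 100 * correct / total

per_category = {name: round(100 * c / n, 1) for name, (c, n) in categories.items()}

print(correct, total, overall_accuracy)  # 93 100 93.0
print(per_category["Challenging Cases"])  # 76.7
```

The four categories sum to 93 correct predictions out of 100 test prompts, which matches the 93.0% overall accuracy, and all seven errors fall in the challenging cases.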

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).