Submitted: 15 May 2025

Posted: 16 May 2025

Abstract

Keywords:

1. Introduction

2. Related Work

3. Methodology

3.1. Retrieval-Augmented Generation (RAG) Pipeline

- Data Acquisition: Corporate documents were accessed from a Microsoft SharePoint site using the Microsoft Graph API. An initial HTTP Request node queried the SharePoint site; follow-up requests and JavaScript code nodes then extracted the page content, including text and metadata.

- Segmentation: The extracted text was segmented using the Recursive Character Text Splitter (LangChain node within n8n) [19] with a chunk overlap of 10 characters to maintain contextual continuity between adjacent chunks.

- Embedding Generation: Text chunks were transformed into numerical vector representations using the OpenAI Embeddings model (LangChain node within n8n) [44], specifically the text-embedding-3-large model.

- Vector Storage: The generated embeddings, along with their corresponding text chunks and metadata, were stored in a Supabase Vector Store (LangChain node within n8n) [56] for efficient similarity-based retrieval.

- Retrieval Process: Queries submitted to the LLM were embedded using the same OpenAI Embeddings model. A similarity search over the Supabase Vector Store retrieved the most relevant document chunks, which were then provided to the LLM as context for generating a ranked list of documents.

- Automation and Scheduling: The entire workflow was automated using n8n and scheduled to run periodically (frequency specified in the "Schedule Trigger" node) to ensure the vector store remained synchronized with updates to the SharePoint data source.
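The segmentation and retrieval steps above can be illustrated with a minimal, dependency-free sketch. It is not the n8n/LangChain implementation used in the study: `split_with_overlap` is a simplified fixed-size splitter (the Recursive Character Text Splitter additionally tries to break on separators such as paragraphs and sentences), the embeddings are toy two-dimensional vectors rather than text-embedding-3-large outputs, and the use of cosine similarity, like all function and field names, is an assumption made for illustration.

```python
import math


def split_with_overlap(text: str, chunk_size: int, overlap: int) -> list[str]:
    """Cut text into fixed-size character chunks.

    Each chunk starts `overlap` characters before the previous chunk ends,
    so neighbouring chunks share a small amount of context.
    """
    if chunk_size <= overlap:
        raise ValueError("chunk_size must be larger than overlap")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last chunk already reaches the end of the text
    return chunks


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], store: list[dict], k: int) -> list[dict]:
    """Return the k stored rows whose embeddings are most similar to the query."""
    ranked = sorted(
        store,
        key=lambda row: cosine_similarity(query_vec, row["embedding"]),
        reverse=True,
    )
    return ranked[:k]


# Toy usage: chunk a short string, then query a hand-made "vector store".
chunks = split_with_overlap("abcdefghijklmnopqrstuvwxyz", chunk_size=10, overlap=2)
store = [
    {"id": "a", "embedding": [1.0, 0.0]},
    {"id": "b", "embedding": [0.0, 1.0]},
    {"id": "c", "embedding": [1.0, 1.0]},
]
hits = top_k([1.0, 0.1], store, k=2)
```

In the pipeline described here, the chunk overlap would be 10 characters and the ranking would be performed by the vector store itself; the sketch only makes the two ideas (overlapping chunks, similarity-ranked retrieval) concrete.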

3.2. Dataset Creation and Augmentation

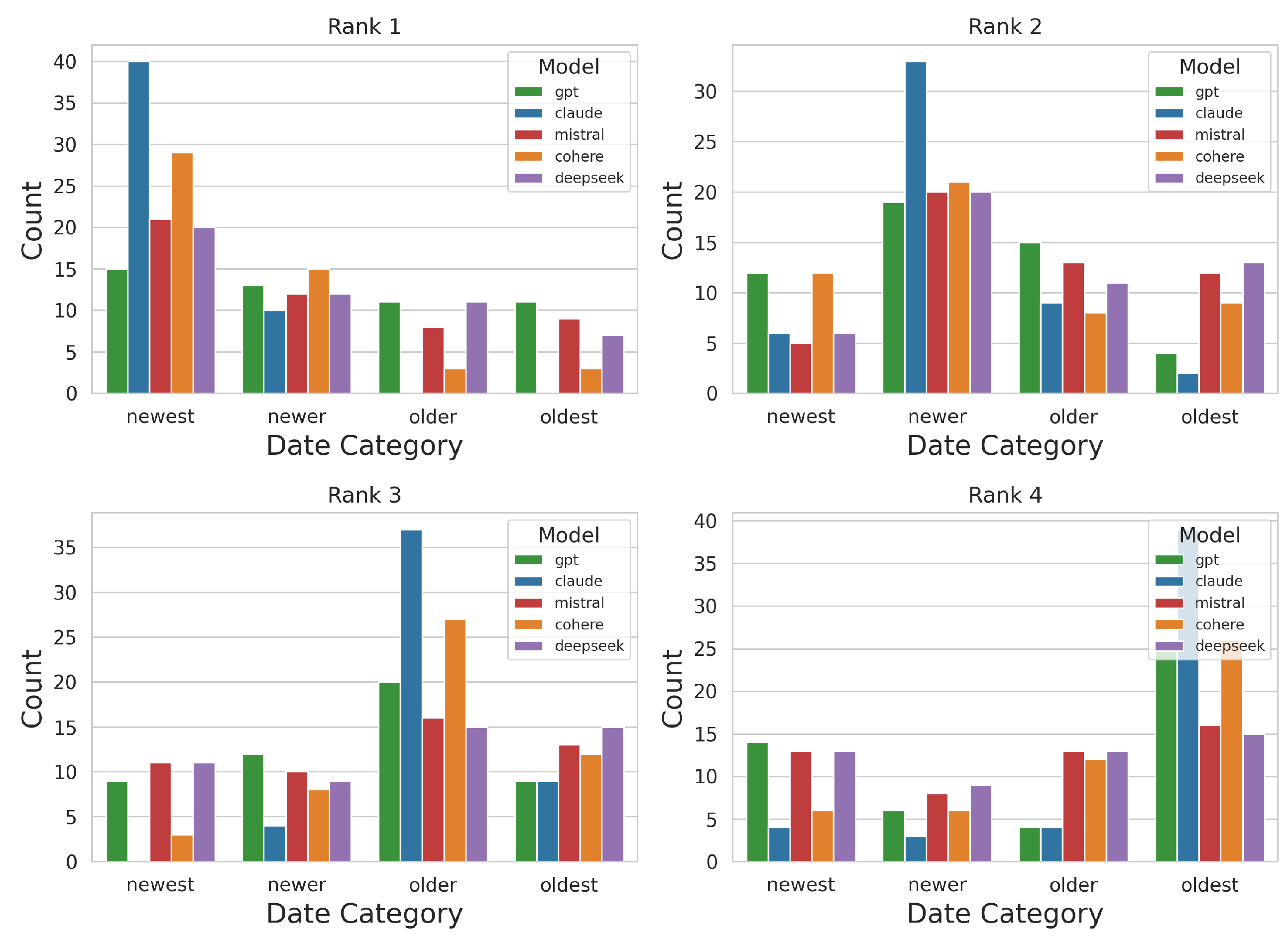

- Recency Bias: For every original document, three additional copies were manually created with identical content but with timestamps set between 0.5 and 5 years in the past. Because only the temporal metadata varied, this design isolates the effect of recency on document retrieval.

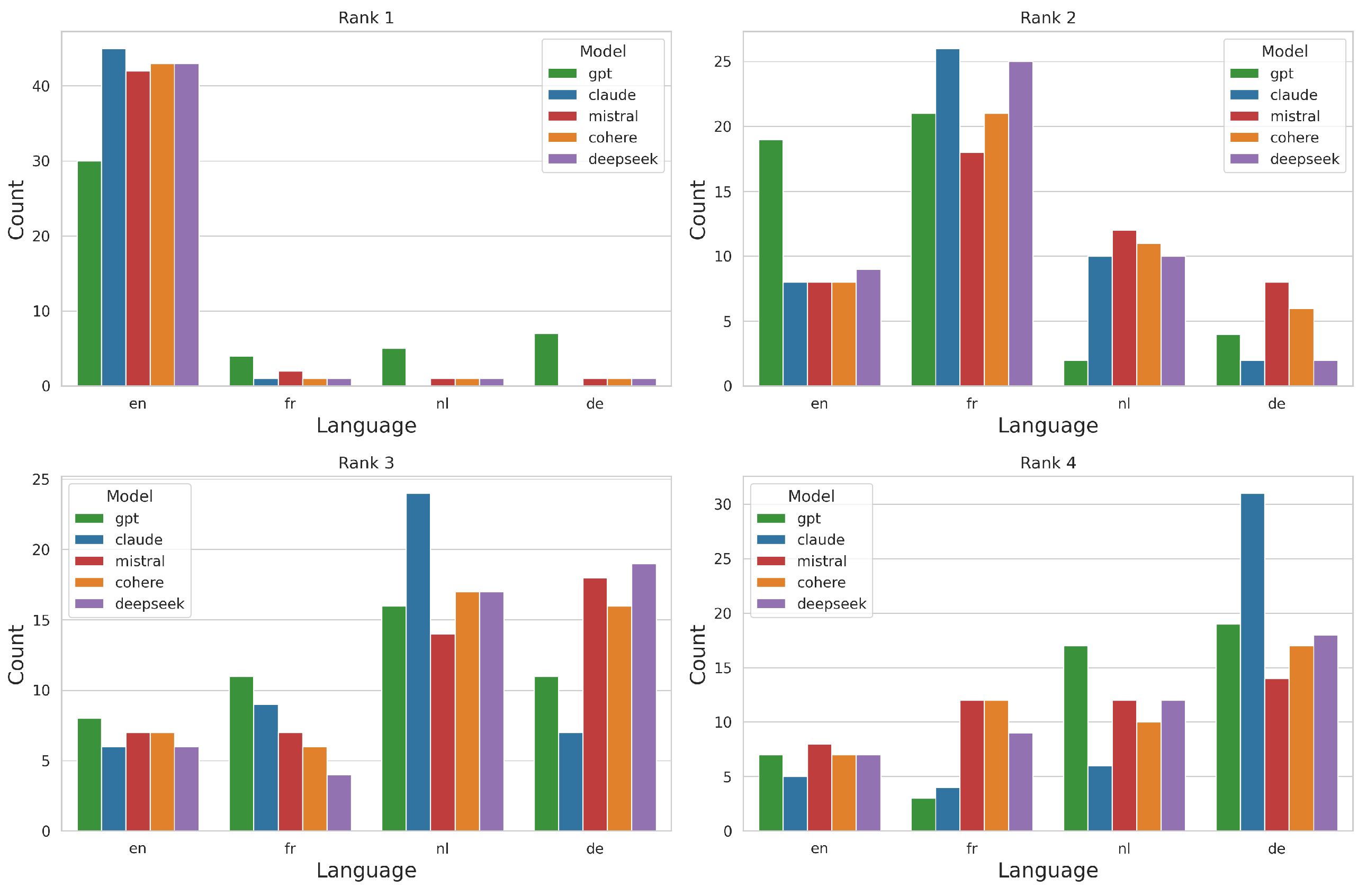

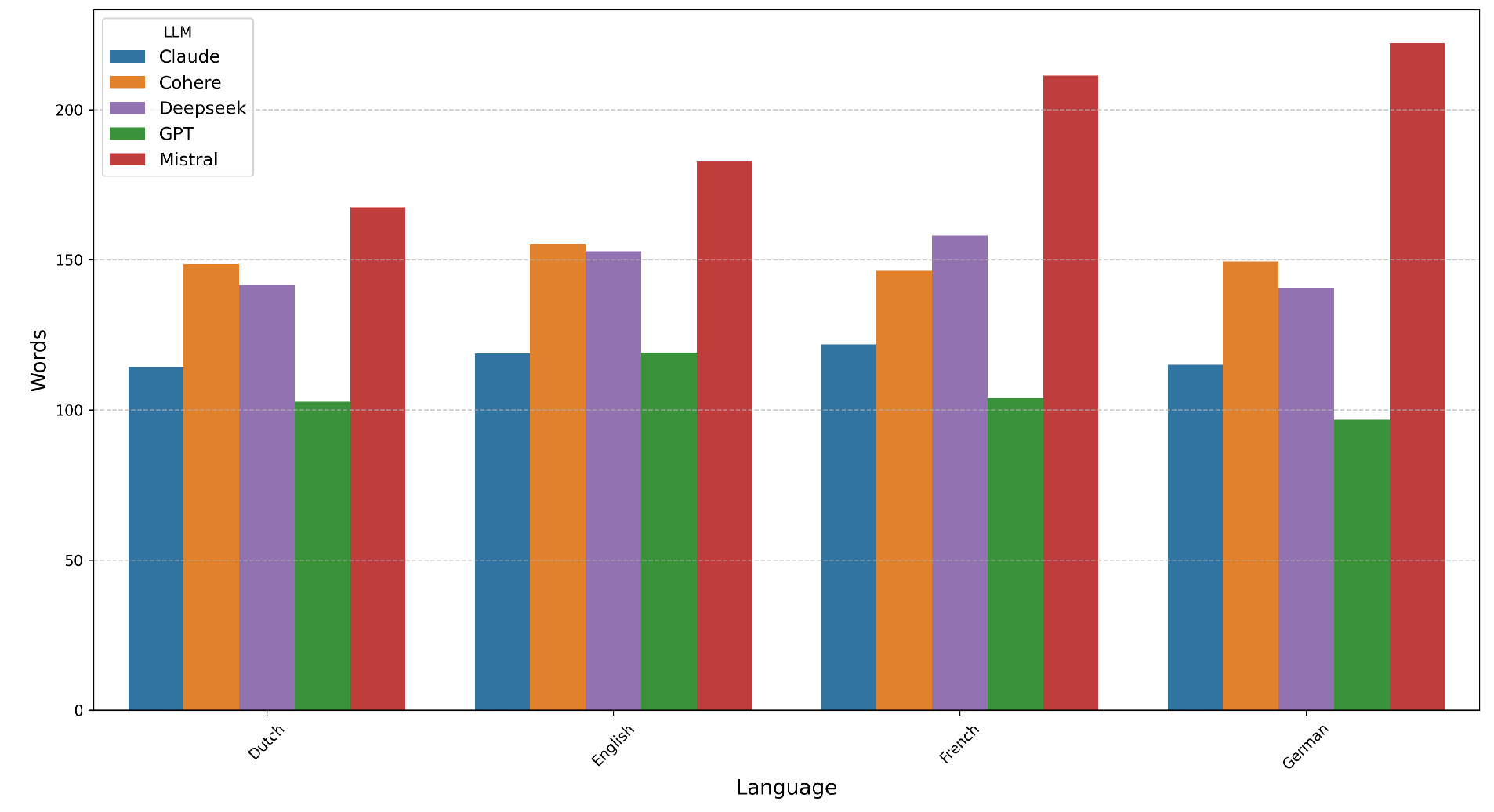

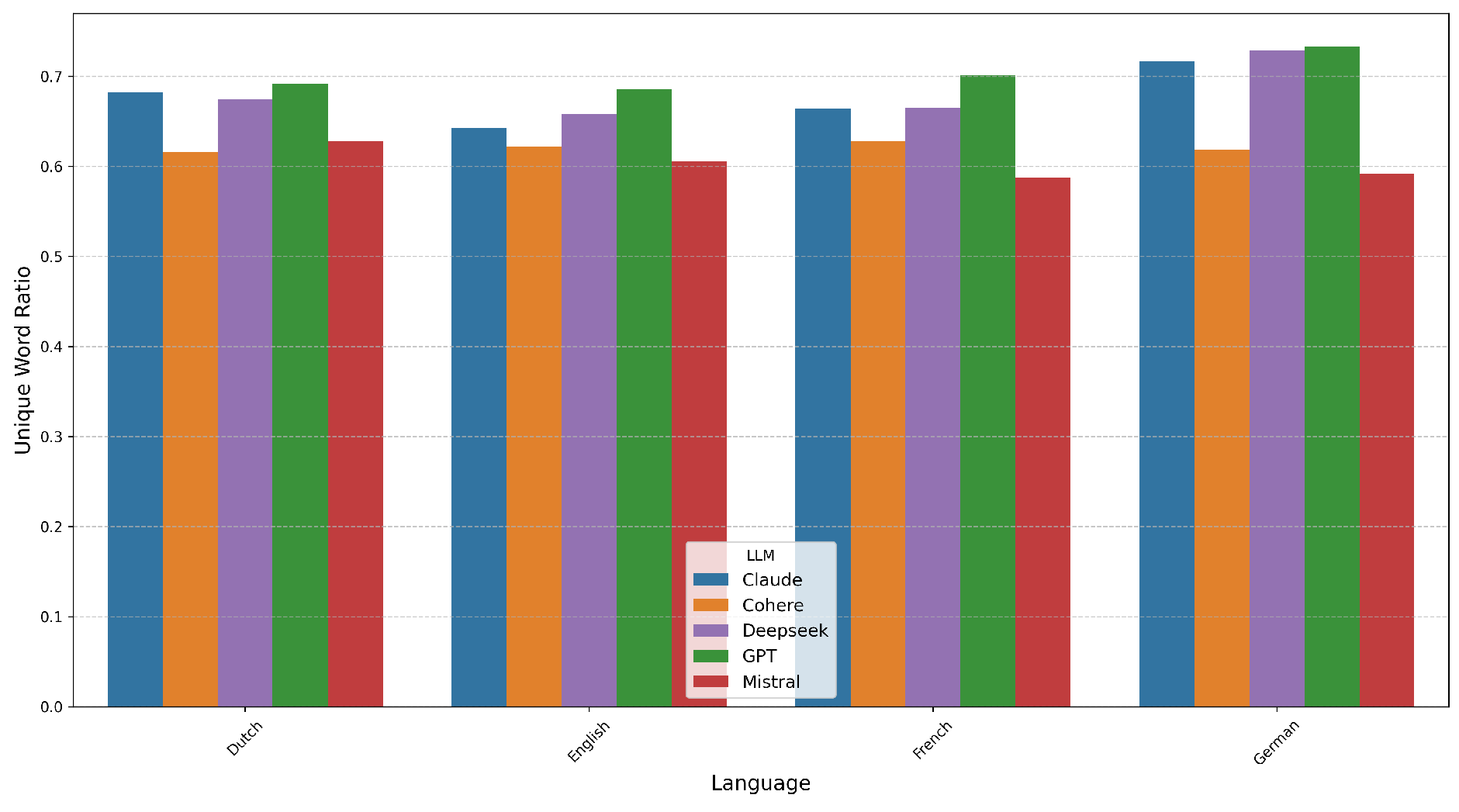

- Retrieval Language Bias: Documents were translated into four languages (English, French, Flemish/Dutch, and German) by professional translators. This manual translation process preserved semantic equivalence and accounted for cultural nuances, avoiding potential artifacts introduced by automated translation tools.

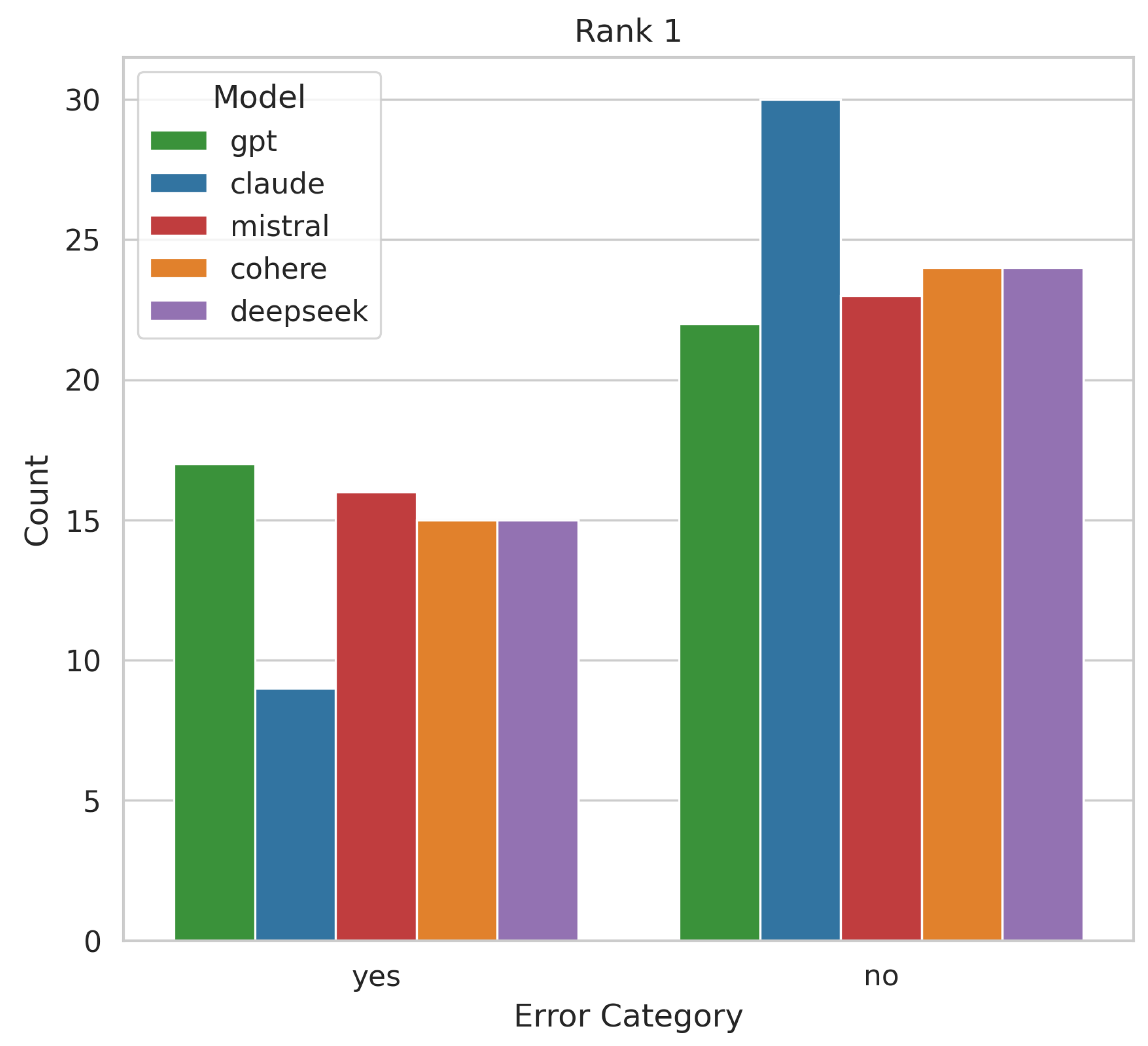

- Retrieval Grammar Bias: Each document was duplicated into two versions: one containing intentional spelling and grammatical mistakes, e.g., ‘you will able to access’ (missing auxiliary verb) and ‘the administrative documents has to be read’ (incorrect conjugation), and one kept error-free. Human editors introduced these modifications to simulate realistic grammatical and orthographic errors without altering the core content.

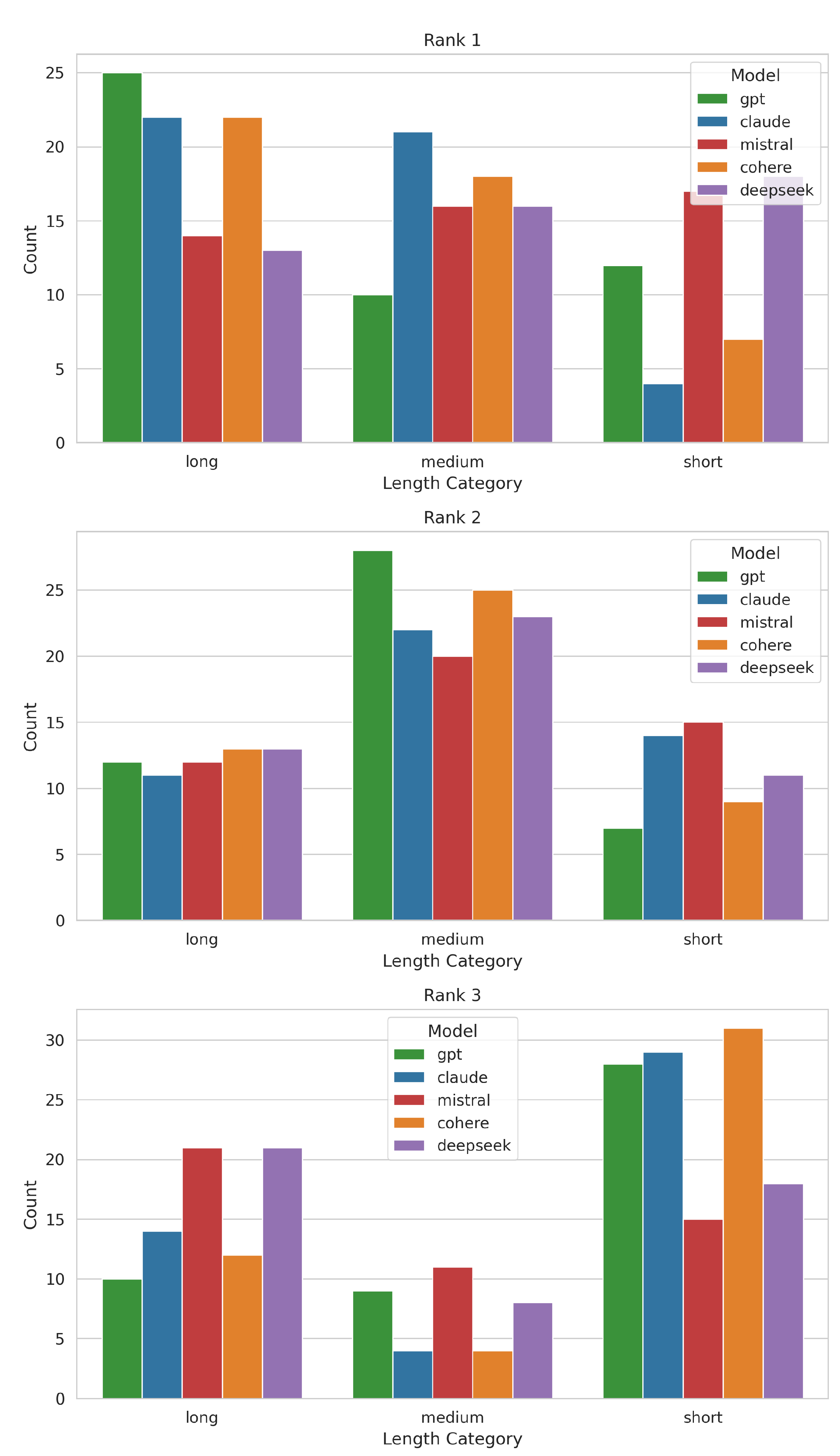

- Retrieval Length Bias: Each document was manually edited to vary its verbosity, producing short (<600 characters), medium (600–1300 characters), and long (>1300 characters) versions. Human editors ensured that the essential information remained consistent across versions, allowing for the assessment of length-based retrieval preferences.
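As a rough illustration of the two augmentations that are fully specified by numbers, the sketch below derives recency variants and assigns a length category. The variants in the study were created manually; the specific ages `(0.5, 2.0, 5.0)` are hypothetical values inside the stated 0.5 to 5 year range, and the dictionary fields (`content`, `modified`) and function names are assumptions made for this example only.

```python
from datetime import datetime, timedelta


def length_category(text: str) -> str:
    """Map a document to the length classes used in this section (in characters)."""
    n = len(text)
    if n < 600:
        return "short"
    if n <= 1300:
        return "medium"
    return "long"


def recency_variants(doc: dict, now: datetime,
                     ages_in_years=(0.5, 2.0, 5.0)) -> list[dict]:
    """Copy a document once per age, changing only its timestamp.

    The ages are hypothetical; the text only states that three copies
    were dated between 0.5 and 5 years in the past.
    """
    variants = []
    for age in ages_in_years:
        backdated = now - timedelta(days=365.25 * age)
        variants.append({**doc, "modified": backdated})
    return variants


# Toy usage with a fixed reference date so the result is reproducible.
now = datetime(2025, 5, 15)
original = {"content": "Expense claims are submitted via the portal.", "modified": now}
copies = recency_variants(original, now)
```

Keeping `content` byte-identical across the copies is what allows any difference in ranking to be attributed to the timestamp alone.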

3.3. Retrieval Bias

3.4. Reinforcement Bias

3.5. Language Bias

3.6. Hallucination Bias

4. Results

4.1. Retrieval Bias

4.1.1. Retrieval Recency Bias

4.1.2. Retrieval Grammar Bias

4.1.3. Retrieval Length Bias

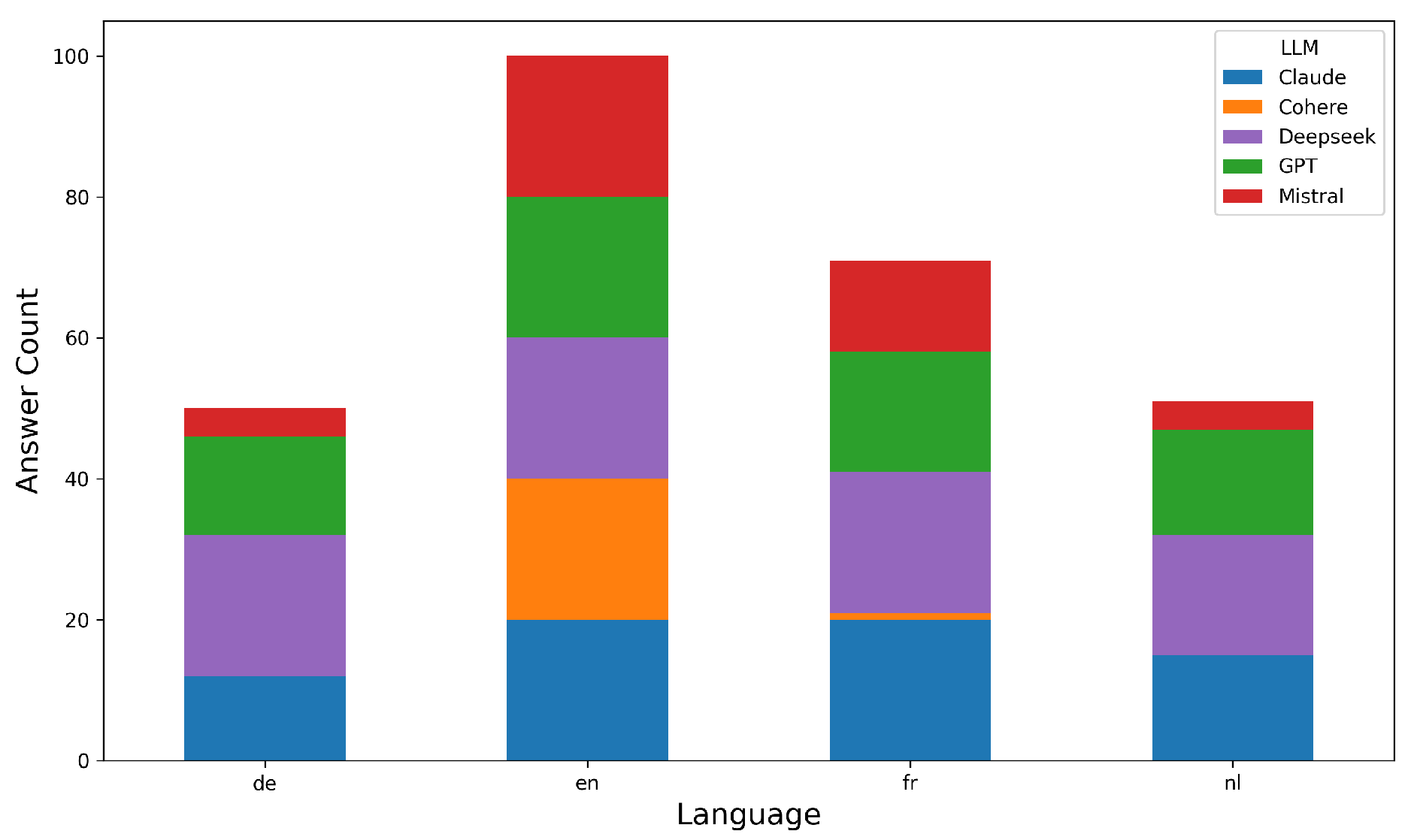

4.1.4. Retrieval Language Bias

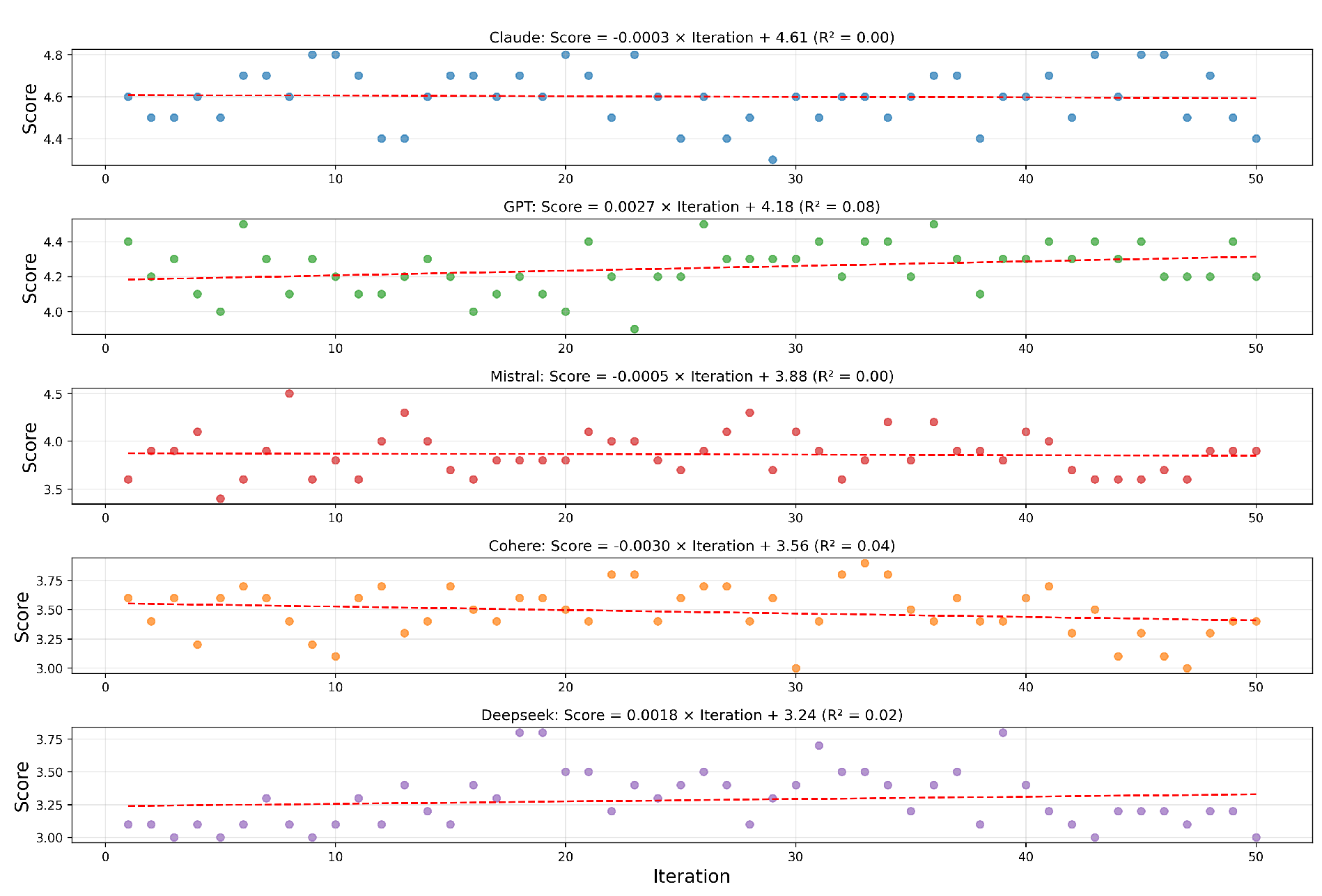

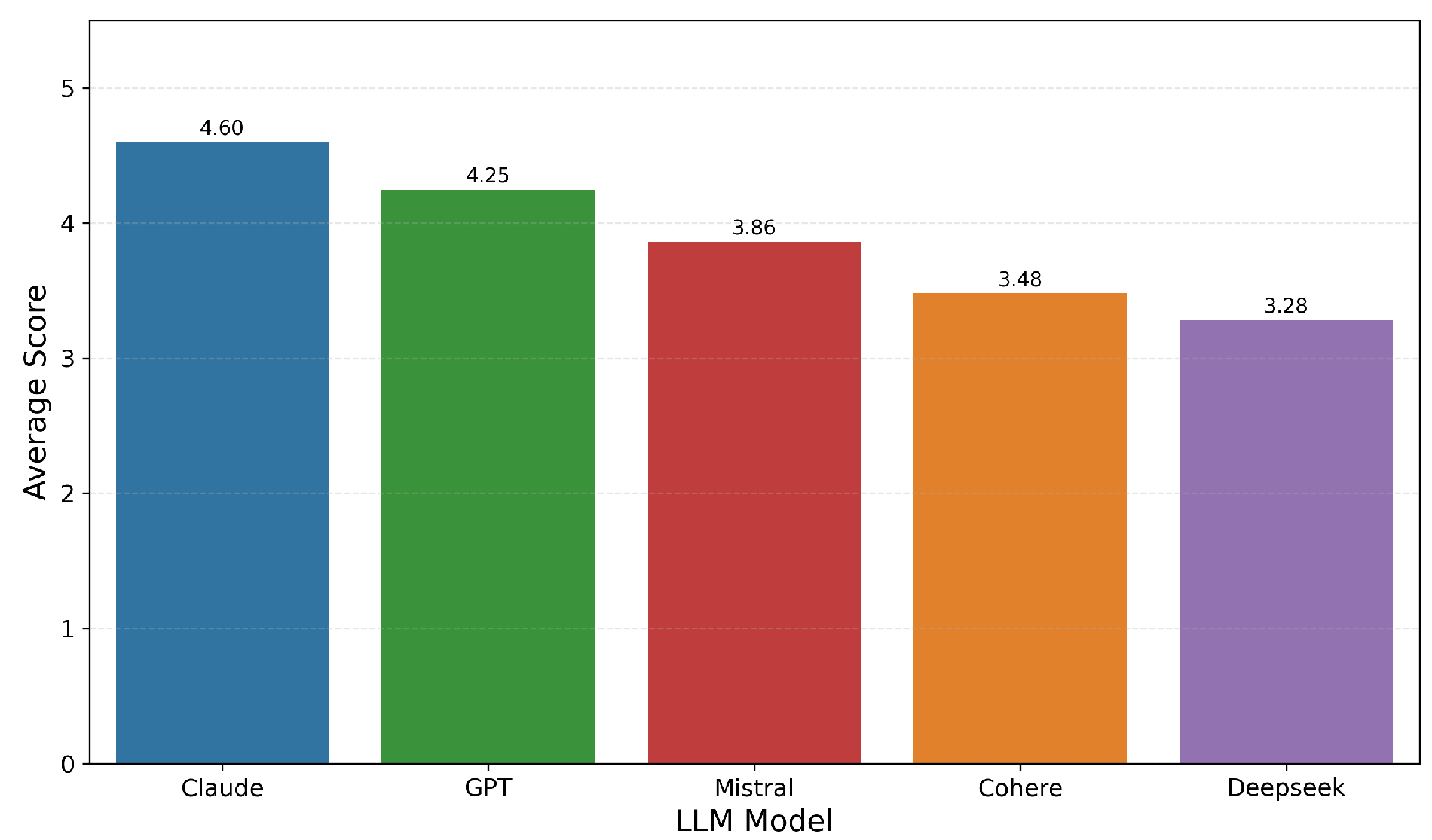

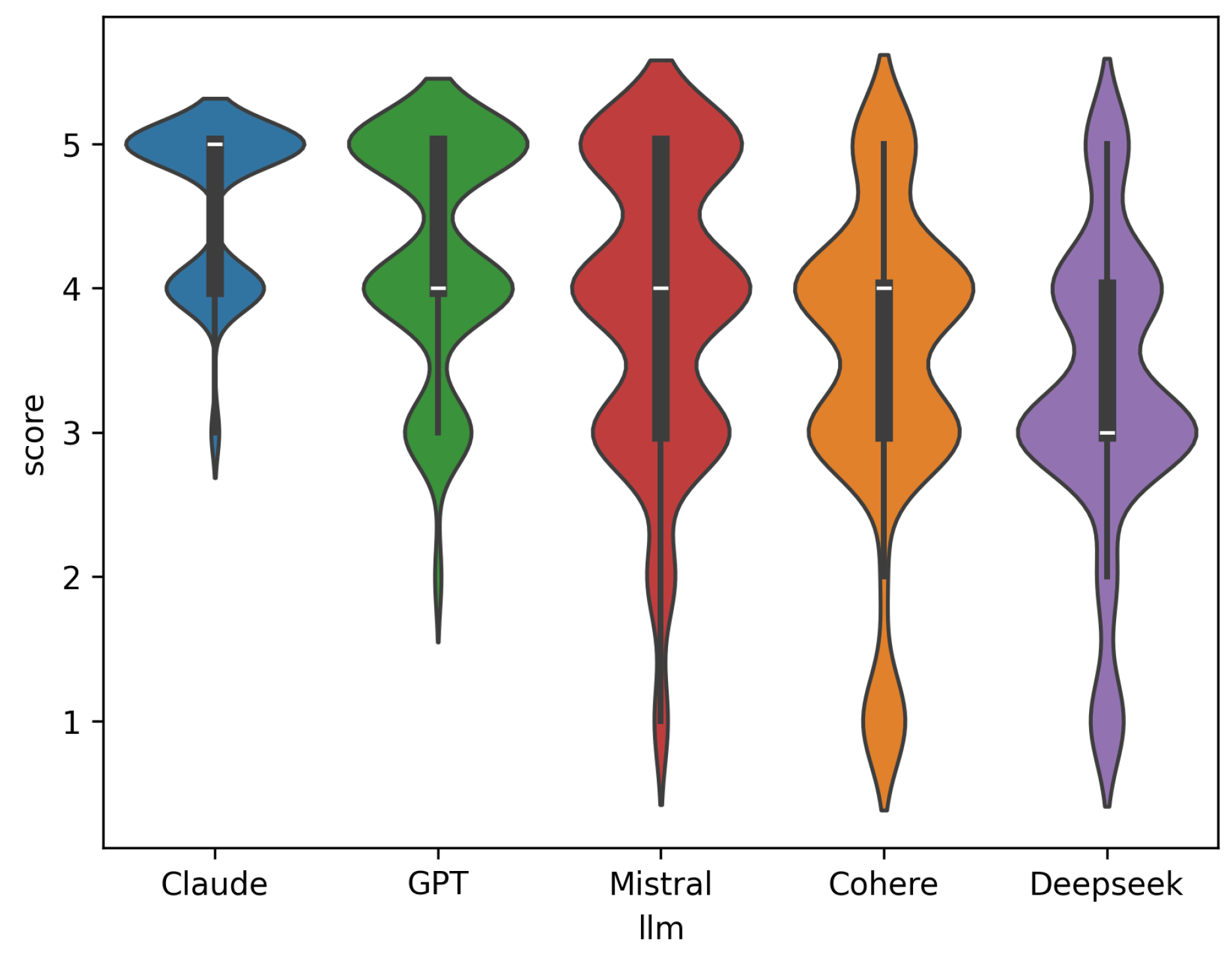

4.2. Reinforcement Bias

4.3. Language Bias

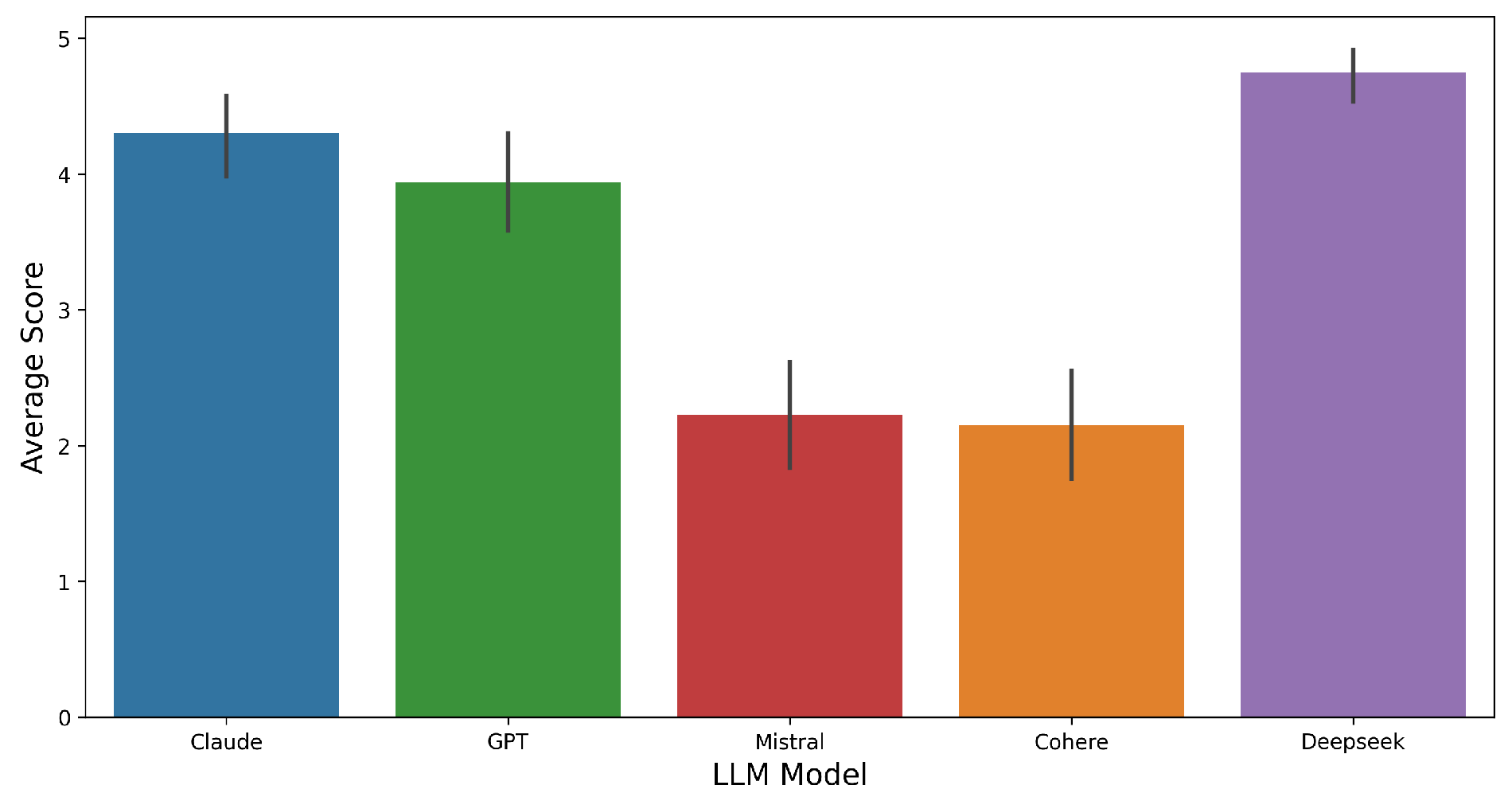

4.4. Hallucination Bias

5. Discussions

6. Conclusion

Supplementary Materials

Acknowledgments

References

- Reducing hallucinations in large language models with custom intervention using amazon bedrock agents | aws machine learning blog. AWS Machine Learning Blog, Nov 2024.

- Understanding and mitigating bias in large language models (llms). DataCamp Blog, Jan 2024.

- Ai’s recency bias problem - the neuron. The Neuron, Aug 2024.

- Ensuring consistent llm outputs using structured prompts. Ubiai Tools Blog, 2024.

- Does anyone know why instruction precision degrades over time? - gpt builders. OpenAI Developer Community, Apr 2025.

- Deepseek r1 semantic relevance issues. GitHub Issue, Mar 2025.

- Reducing llm hallucinations using retrieval augmented generation (rag). Digital Alpha Blog, Jan 2025.

- Rag hallucination: What is it and how to avoid it. K2View Blog, Apr 2025.

- Cohere AI. Command a: An enterprise-ready large language model, 2025a. URL https://cohere.com/research/papers/command-a-an-enterprise-ready-family-of-large-language-models-2025-03-27. Technical report.

- DeepSeek AI. Deepseek-v3 technical report, 2024a. URL https://deepseek.com/blog/deepseek-v3-release. Unpublished technical documentation.

- DeepSeek AI. Deepseek r1. 2025b.

- Mistral AI. Mixtral 8x22b and mistral large models, 2024b. URL https://mistral.ai/news/.

- An, H.; Li, Z.; Zhao, J.; Rudinger, R. Sodapop: Open-ended discovery of social biases in social commonsense reasoning models. In Proceedings of the 17th Conference of the European Chapter of the Association for Computational Linguistics; 2023; pp. 1573–1596.

- Anthropic. Claude 3 model family, 2023. URL https://www.anthropic.com/news/claude-3-family.

- Bird, S.; Klein, E.; Loper, E. Natural Language Processing with Python; O’Reilly Media, Inc., 2009.

- Blodgett, S.L.; Barocas, S.; Daumé, H., III; Wallach, H. Language (technology) is power: A critical survey of "bias" in nlp. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; 2020; pp. 5454–5476.

- Bolukbasi, T.; Chang, K.-W.; Zou, J.; Saligrama, V.; Kalai, A. Man is to computer programmer as woman is to homemaker? Debiasing word embeddings. In Proceedings of the 30th International Conference on Neural Information Processing Systems; 2016; pp. 4356–4364.

- Caliskan, A.; Bryson, J.J.; Narayanan, A. Semantics derived automatically from language corpora contain human-like biases. Science 2017, 356, 183–186.

- Chase, H. Langchain: Framework for llm applications, 2023. URL https://www.langchain.com.

- Chen, K.E. Using deepseek r1 for rag: Do’s and don’ts. SkyPilot Blog, Feb 2025.

- Christiano, P.; Amodei, D.; Brown, T.B.; Clark, J.; McCandlish, S.; Leike, J. Training language models to follow instructions with human feedback. J. Mach. Learn. Res. 2023, 24, 1–44.

- Cohen, J. A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 1960, 20, 37–46.

- Conneau, A.; Rinott, R.; Lample, G.; Williams, A.; Bowman, S.R.; Schwenk, H.; Stoyanov, V. Xnli: Evaluating cross-lingual sentence representations. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing; 2018; pp. 2475–2485.

- Etxaniz, J.; Goikoetxea, A.; Uria, A.; Perez-de Viñaspre, O.; Agirre, E. Do multilingual language models think better in english? arXiv 2023, arXiv:2308.01223.

- Finch, W.H. An introduction to the analysis of ranked response data. Pract. Assessment, Res. Eval. 2022, 27, 1–15.

- Garg, S.; et al. Robustness of language models to input perturbations: A survey. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics; 2023.

- Gonen, H.; Goldberg, Y. Lipstick on a pig: Debiasing methods cover up systematic gender biases in word embeddings but do not remove them. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; 2019; pp. 609–614.

- Ji, S.; Lee, N.; Fries, L.; Yu, T.; Su, Y.; Xu, Y.; Chang, E. Survey of hallucination in large language models. ACM Comput. Surv. 2024, 56, 1–38.

- Ji, Z.; Lee, H.; Freitag, D.; Kalai, A.; Ma, Y.; West, P.; Liang, P. A survey on knowledge-enhanced pre-trained language models. ACM Computing Surveys 2023, 56, 1–35.

- Joshi, P.; Santy, S.; Budhiraja, A.; Bali, K.; Choudhury, M. The state and fate of linguistic diversity and inclusion in the nlp world. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; 2020; pp. 6282–6293.

- Kendall, M.G. A new measure of rank correlation. Biometrika 1938, 30, 81–93.

- Kulshrestha, J.; Eslami, M.; Messias, J.; Zafar, M.B.; Ghosh, S.; Gummadi, K.P.; Karahalios, K. Search bias quantification: Investigating political bias in social media and web search. Inf. Retr. J. 2019, 22, 188–227.

- Lee, J.; et al. Long-context large language models: A survey. arXiv 2024, arXiv:2402.00000.

- Liang, P.; Bommasani, R.; Lee, T.; Tsipras, D.; Soylu, D.; Yasunaga, M.; Zhang, Y.; Narayanan, D.; Wu, Y.; Kumar, A.; et al. Holistic evaluation of language models. In Advances in Neural Information Processing Systems; 2022; Volume 35, pp. 34014–34037.

- Liu, P.; et al. Prompting is programming: A survey of prompt engineering. Foundations and Trends in Information Retrieval, 2023.

- Liu, X.; et al. Primacy and recency effects in large language models. arXiv 2024, arXiv:2402.12345.

- Mallen, S.; Sanh, V.; Lasocki, N.; Archambault, C.; Bavard, B.L.; Perez, G. Making retrieval-augmented language models more reliable. In International Conference on Learning Representations, May 2023. URL https://openreview.net/forum?id=u5z31A2v9a.

- Mann, H.B.; Whitney, D.R. On a test of whether one of two random variables is stochastically larger than the other. Ann. Math. Stat. 1947, 18, 50–60.

- McCurdy, K.; Serbetci, O. Are we consistently biased? multidimensional analysis of biases in distributional word vectors. In Proceedings of the Eighth Workshop on Cognitive Aspects of Computational Language Learning and Processing; 2017; pp. 110–120.

- Meade, N.; Adel, L.; Buehler, M.T.; Nejad, K.; Kong, L.; Zhang, D.; Christiano, P.F.; Burns, C. A survey of reinforcement learning from human feedback. arXiv 2024, arXiv:2312.14925.

- Mehrabi, N.; Morstatter, F.; Saxena, N.; Lerman, K.; Galstyan, A. A survey on bias and fairness in machine learning. ACM Comput. Surv. 2021, 54, 1–35.

- n8n Technologies. n8n: Workflow automation tool, 2023. URL https://n8n.io.

- Noble, S.U. Algorithms of Oppression: How Search Engines Reinforce Racism; NYU Press, 2018.

- OpenAI. Gpt-4 technical report, 2023. URL https://cdn.openai.com/papers/gpt-4.pdf.

- Pearson, K. On the criterion that a given system of deviations from the probable in the case of a correlated system of variables is such that it can be reasonably supposed to have arisen from random sampling. London, Edinburgh, Dublin Philos. Mag. J. Sci. 1900, 50, 157–175.

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; Vanderplas, J.; Passos, A.; Cournapeau, D.; Brucher, M.; Perrot, M.; Duchesnay, E. Scikit-learn: Machine learning in python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

- Perez, E.; et al. Discovering language model behaviors with model-written evaluations. arXiv 2022, arXiv:2212.09251.

- Perrig, P.; Zhang, S.; Li, S.-E.; Ji, Y. Towards responsible deployment of large language models: A survey. arXiv 2023, arXiv:2312.00762.

- Powers, D.M.W. Evaluation: From precision, recall and f-measure to roc, informedness, markedness & correlation. J. Mach. Learn. Technol. 2011, 2, 37–63.

- Ribeiro, M.T.; Singh, N.; Guestrin, C.; Singh, S. Beyond accuracy: Behavioral testing of nlp models with checklist. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 4902–4912. Association for Computational Linguistics, July 2020. URL https://aclanthology.org/2020.acl-main.444.

- Safdari, I.; Ramesh, A.V.; Zhao, T.; Zhang, D.; Ribeiro, M.T.; Dubhashi, D.V.R.; Gupta, A.; Singla, M. Is your model biased? exploring biases in large language models. arXiv 2023, arXiv:2303.11621.

- Sap, M.; Gabriel, S.; Qin, L.; Jurafsky, D.; Smith, N.A.; Choi, Y. Social bias frames: Reasoning about social and power implications of language. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics; 2019; pp. 2338–2352.

- Schut, L.; Gal, Y.; Farquhar, S. Do multilingual llms think in english? arXiv 2025, arXiv:2502.15603.

- Seabold, S.; Perktold, J. Statsmodels: Econometric and statistical modeling with python. In Proceedings of the 9th Python in Science Conference; 2010; pp. 57–61.

- Shuster, K.; et al. Rag at scale: How retrieval augmentation reduces hallucination in large language models. arXiv 2024, arXiv:2401.00001.

- Supabase. Supabase: Open source firebase alternative, 2023. URL https://supabase.com.

- Virtanen, P.; Gommers, R.; Oliphant, T.E.; Haberland, M.; Reddy, T.; Cournapeau, D.; Burovski, E.; Peterson, P.; Weckesser, W.; Bright, J.; et al. Scipy 1.0: Fundamental algorithms for scientific computing in python. Nat. Methods 2020, 17, 261–272.

- Willmott, C.J.; Matsuura, K. Mean absolute error: A robust measure of average error. Int. J. Climatol. 1986, 6, 516–519.

- Xu, W.; et al. Cross-lingual retrieval-augmented generation: Challenges and solutions. arXiv 2024, arXiv:2403.00001.

- Zhao, J.; Wang, T.; Yatskar, M.; Ordonez, V.; Chang, K.-W. Gender bias in coreference resolution: Evaluation and debiasing methods. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; 2018; pp. 15–20.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).