Submitted:

27 February 2025

Posted:

03 March 2025

Abstract

Keywords:

1. Introduction

- Revealing that visual masked self-supervised pre-trained models have inherent preferences among label position encodings for classification, which opens new research directions for fine-tuning strategies.

- Proposing a computationally efficient, unsupervised label position ranking method that offers a new optimization strategy for downstream transfer in self-supervised learning.

- Advancing research on the stability of self-supervised learning in downstream tasks, with theoretical support and practical guidelines for more efficient and robust fine-tuning.

- Conducting extensive experiments with ImageMAE [1] and VideoMAE [2] pre-trained models on the public CIFAR-100 [10], UCF101 [11], and HMDB51 [12] datasets, demonstrating that the proposed method stabilizes label position encoding and improves the fine-tuned performance of visual self-supervised models.

2. Related Work

2.1. Self-Supervised Learning

2.2. Label Position Encoding

2.3. Linear Discriminant Analysis

3. Method

3.1. Problem Definition

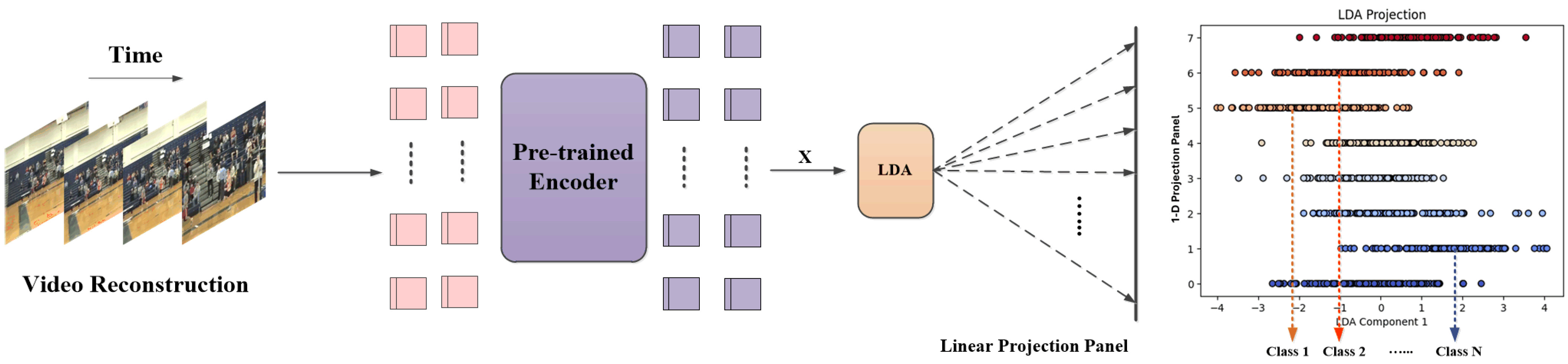

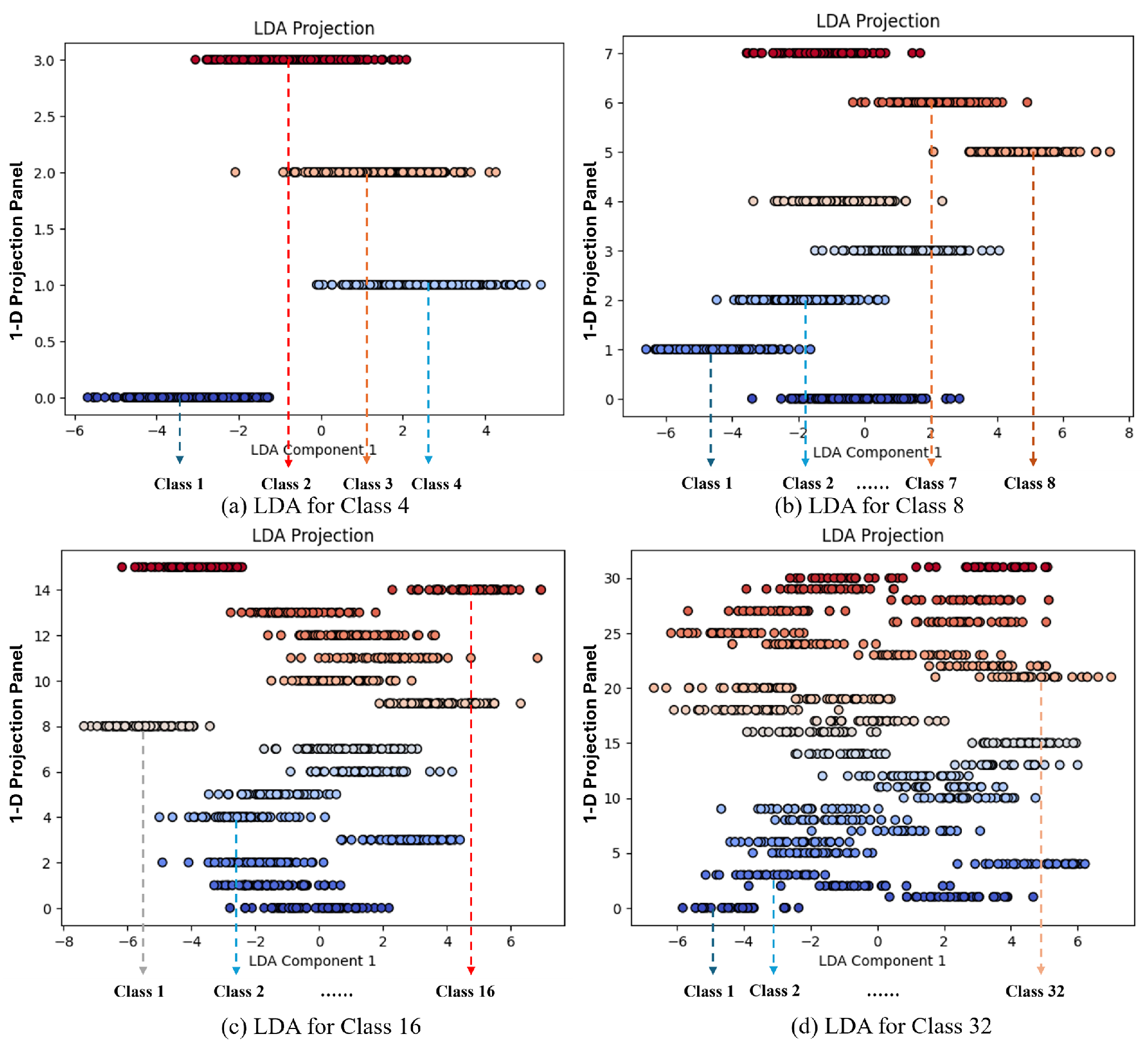

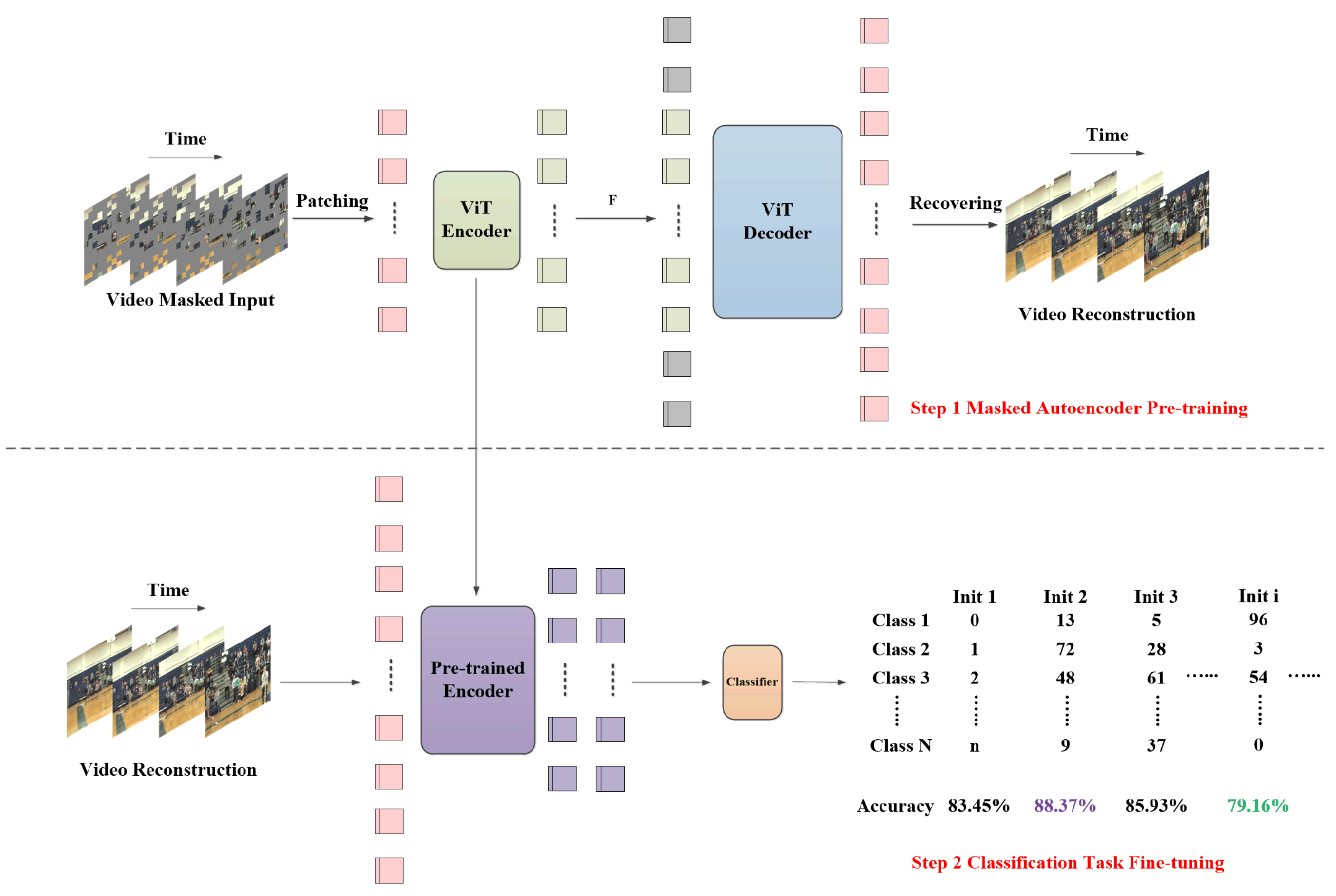

3.2. LDA Projection

| Algorithm 1: Label Ranker Algorithm |
| --- |
| Input: X |
| Parameter: N |
| Output: Y |
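Algorithm 1 as listed here names only its input, parameter, and output, so the following is a minimal sketch rather than the authors' implementation. It assumes that the ranker first applies an LDA projection (Section 3.2) to features from the pre-trained encoder and then orders classes by the similarity of their projected centroids (Section 3.3). The function names `lda_project` and `rank_labels`, the cosine similarity measure, the greedy nearest-neighbour ordering, and the synthetic data are all hypothetical choices made for illustration.

```python
import numpy as np


def lda_project(X, y, n_components):
    """Project features X (n_samples, dim) onto the top LDA directions."""
    classes = np.unique(y)
    mean_all = X.mean(axis=0)
    dim = X.shape[1]
    Sw = np.zeros((dim, dim))  # within-class scatter
    Sb = np.zeros((dim, dim))  # between-class scatter
    for c in classes:
        Xc = X[y == c]
        mean_c = Xc.mean(axis=0)
        Sw += (Xc - mean_c).T @ (Xc - mean_c)
        diff = (mean_c - mean_all)[:, None]
        Sb += len(Xc) * (diff @ diff.T)
    # Directions that maximise between-class over within-class scatter.
    evals, evecs = np.linalg.eig(np.linalg.pinv(Sw) @ Sb)
    top = np.argsort(-evals.real)[:n_components]
    return X @ evecs[:, top].real


def rank_labels(X, y, n_components=2):
    """Return class ids ordered so that similar classes sit next to each other.

    Assumption: a greedy chain over cosine similarity of class centroids.
    """
    Z = lda_project(X, y, n_components)
    classes = np.unique(y)
    centroids = np.stack([Z[y == c].mean(axis=0) for c in classes])
    norms = np.linalg.norm(centroids, axis=1, keepdims=True) + 1e-12
    sim = (centroids / norms) @ (centroids / norms).T
    order = [0]  # start the chain at the first class
    remaining = set(range(1, len(classes)))
    while remaining:
        last = order[-1]
        nxt = max(sorted(remaining), key=lambda j: sim[last, j])
        order.append(nxt)
        remaining.remove(nxt)
    return [int(classes[i]) for i in order]


# Synthetic stand-in for encoder features: 4 classes, 20 samples each.
rng = np.random.default_rng(0)
centers = np.array([[0, 0, 0], [5, 0, 0], [0, 5, 0], [5, 5, 5]], dtype=float)
X = np.vstack([c + rng.normal(scale=0.5, size=(20, 3)) for c in centers])
y = np.repeat(np.arange(4), 20)

order = rank_labels(X, y, n_components=2)
# position_of_class[c] is the label position assigned to original class c.
position_of_class = {c: p for p, c in enumerate(order)}
```

The resulting `position_of_class` mapping could then replace the default class indices before fine-tuning. Whether the paper uses this similarity measure or this ordering rule cannot be determined from the text available here.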

3.3. Similarity Ranking

4. Experiments

4.1. Datasets

4.1.1. CIFAR-100 [10]

4.1.2. UCF101 [11]

4.1.3. HMDB51 [12]

4.2. Implementation Details

4.2.1. Pre-Training

4.2.2. Fine-Tuning

4.2.3. Algorithm Strategy

| Model | From Scratch | Pre-trained Data | Label State | Accuracy |
| --- | --- | --- | --- | --- |
| Vanilla ViT-B [8] | ✓ | − | Sequence | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| Avg. | − | − | − | |
| ImageMAE [1] | × | ImageNet-1k [14] | Sequence | |
| | × | ImageNet-1k [14] | Random | |
| | × | ImageNet-1k [14] | Random | |
| | × | ImageNet-1k [14] | Random | |
| | × | ImageNet-1k [14] | Random | |
| | × | ImageNet-1k [14] | Random | |
| | × | ImageNet-1k [14] | Random | |
| | × | ImageNet-1k [14] | Random | |
| Avg. | − | − | − | |
| | × | ImageNet-1k [14] | Label Ranker | |
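The Label State column distinguishes "Sequence" and "Random" label positions. As a minimal sketch, assuming that "Sequence" means the dataset's default class order and "Random" means a seeded random permutation of the class indices (the text here does not confirm either reading), the two states could be generated as follows. The helper names `make_label_mapping` and `remap_labels` are hypothetical.

```python
import random


def make_label_mapping(num_classes, state="sequence", seed=0):
    """Return a list where mapping[original_class] is the label position."""
    positions = list(range(num_classes))
    if state == "random":
        # Seeded shuffle so each "Random" run is reproducible.
        random.Random(seed).shuffle(positions)
    elif state != "sequence":
        raise ValueError(f"unknown label state: {state}")
    return positions


def remap_labels(labels, mapping):
    """Apply the mapping to a list of integer class labels."""
    return [mapping[label] for label in labels]


sequence_mapping = make_label_mapping(5, state="sequence")
random_mapping = make_label_mapping(5, state="random", seed=1)
remapped = remap_labels([0, 1, 2, 3, 4, 0], random_mapping)
```

Under this reading, each "Random" row of the table would correspond to a different seed, and the classifier head would be trained against the remapped labels.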

4.3. Results

4.3.1. Ablation Study

| Model | From Scratch | Pre-trained Data | Label State | Accuracy |
| --- | --- | --- | --- | --- |
| Vanilla ViT-B [8] | ✓ | − | Sequence | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| Avg. | − | − | − | |
| VideoMAE [2] | × | Kinetics 400 [15] | Sequence | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| Avg. | − | − | − | |
| | × | Kinetics 400 [15] | Label Ranker | |

| Model | From Scratch | Pre-trained Data | Label State | Accuracy |
| --- | --- | --- | --- | --- |
| Vanilla ViT-B [8] | ✓ | − | Sequence | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| | ✓ | − | Random | |
| Avg. | − | − | − | |
| VideoMAE [2] | × | Kinetics 400 [15] | Sequence | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| | × | Kinetics 400 [15] | Random | |
| Avg. | − | − | − | |
| | × | Kinetics 400 [15] | Label Ranker | |

4.3.2. Performance Analysis and Limitations

5. Conclusions and Future Work

5.1. Conclusions

5.2. Future Work

References

1. He, Kaiming, et al. "Masked autoencoders are scalable vision learners." Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 2022.

2. Tong, Zhan, et al. "VideoMAE: Masked autoencoders are data-efficient learners for self-supervised video pre-training." Advances in neural information processing systems 35 (2022): 10078-10093.

3. Feichtenhofer, Christoph, Yanghao Li, and Kaiming He. "Masked autoencoders as spatiotemporal learners." Advances in neural information processing systems 35 (2022): 35946-35958.

4. Bachmann, Roman, et al. "MultiMAE: Multi-modal multi-task masked autoencoders." European Conference on Computer Vision. Cham: Springer Nature Switzerland, 2022.

5. Xu, Suping, Lin Shang, and Furao Shen. "Latent Semantics Encoding for Label Distribution Learning." IJCAI. 2019.

6. Miyato, Takeru, et al. "Virtual adversarial training: a regularization method for supervised and semi-supervised learning." IEEE transactions on pattern analysis and machine intelligence 41.8 (2018): 1979-1993.

7. Kingma, Durk P., et al. "Semi-supervised learning with deep generative models." Advances in neural information processing systems 27 (2014).

8. Dosovitskiy, Alexey, et al. "An image is worth 16x16 words: Transformers for image recognition at scale." arXiv:2010.11929 (2020).

9. Xanthopoulos, Petros, et al. "Linear discriminant analysis." Robust data mining (2013): 27-33.

10. Krizhevsky, Alex, and Geoffrey Hinton. "Learning multiple layers of features from tiny images." (2009): 7.

11. Soomro, Khurram, Amir Roshan Zamir, and Mubarak Shah. "UCF101: A dataset of 101 human actions classes from videos in the wild." arXiv:1212.0402 (2012).

12. Kuehne, Hildegard, et al. "HMDB: a large video database for human motion recognition." 2011 International conference on computer vision. IEEE, 2011.

13. Jaiswal, Ashish, et al. "A survey on contrastive self-supervised learning." Technologies 9.1 (2020): 2.

14. Russakovsky, Olga, et al. "ImageNet large scale visual recognition challenge." International journal of computer vision 115 (2015): 211-252.

15. Kay, Will, et al. "The kinetics human action video dataset." arXiv:1705.06950 (2017).

16. Loshchilov, Ilya, and Frank Hutter. "SGDR: Stochastic gradient descent with warm restarts." arXiv:1608.03983 (2016).

17. Loshchilov, Ilya, and Frank Hutter. "Decoupled weight decay regularization." arXiv:1711.05101 (2017).

18. Van der Maaten, Laurens, and Geoffrey Hinton. "Visualizing data using t-SNE." Journal of machine learning research 9.11 (2008).

19. Abdi, Hervé, and Lynne J. Williams. "Principal component analysis." Wiley interdisciplinary reviews: computational statistics 2.4 (2010): 433-459.

20. Rong, Xin. "word2vec parameter learning explained." arXiv:1411.2738 (2014).

21. Pennington, Jeffrey, Richard Socher, and Christopher D. Manning. "GloVe: Global vectors for word representation." Proceedings of the 2014 conference on empirical methods in natural language processing (EMNLP). 2014.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).