Submitted: 14 May 2023

Posted: 16 May 2023

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Traditional Skills Assessment

2.2. Piano Performance in Unimodality

2.3. Piano Performance in Multimodality

2.4. Transfer Learning

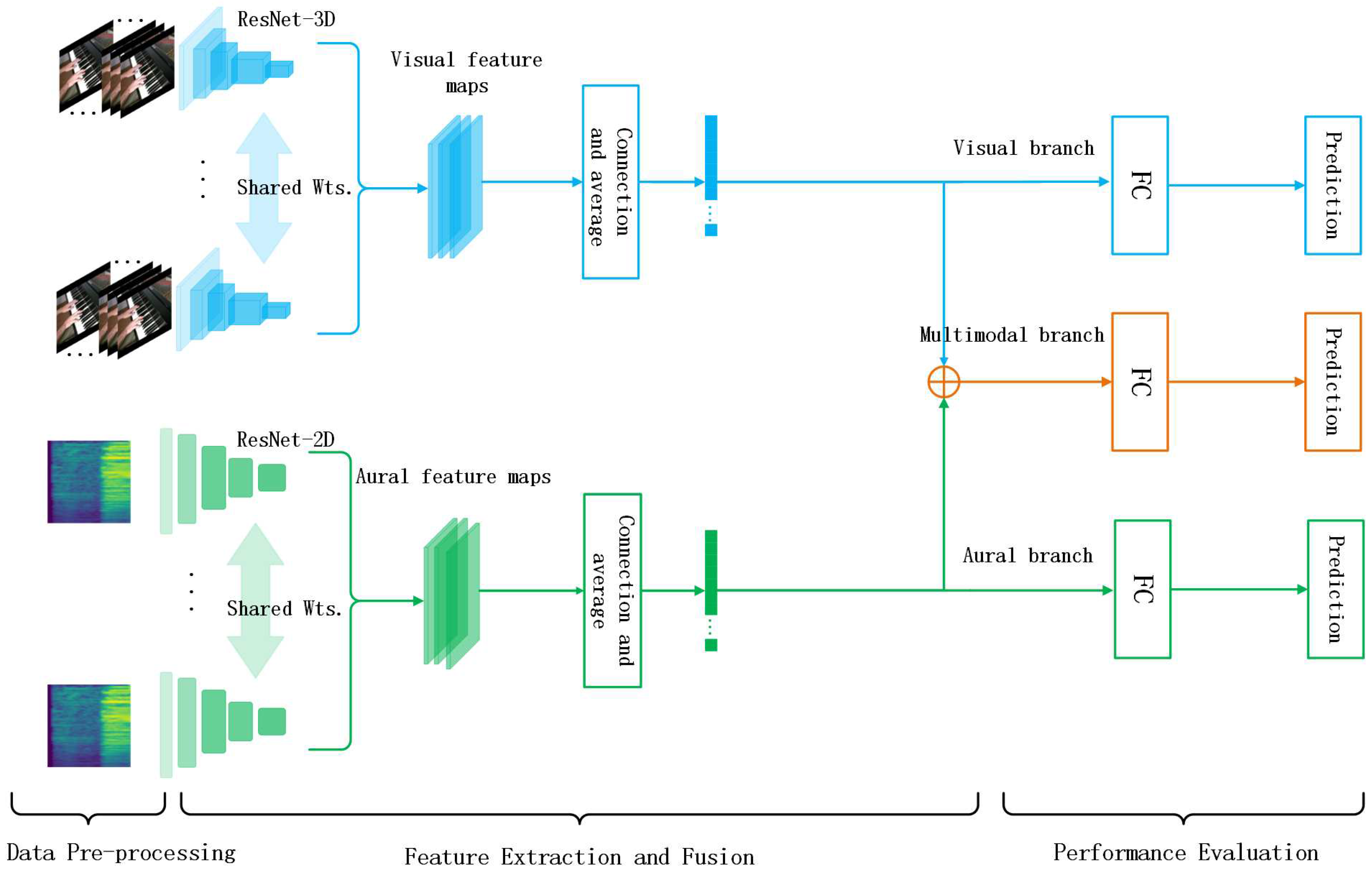

3. Methodology

3.1. Data Pre-Processing

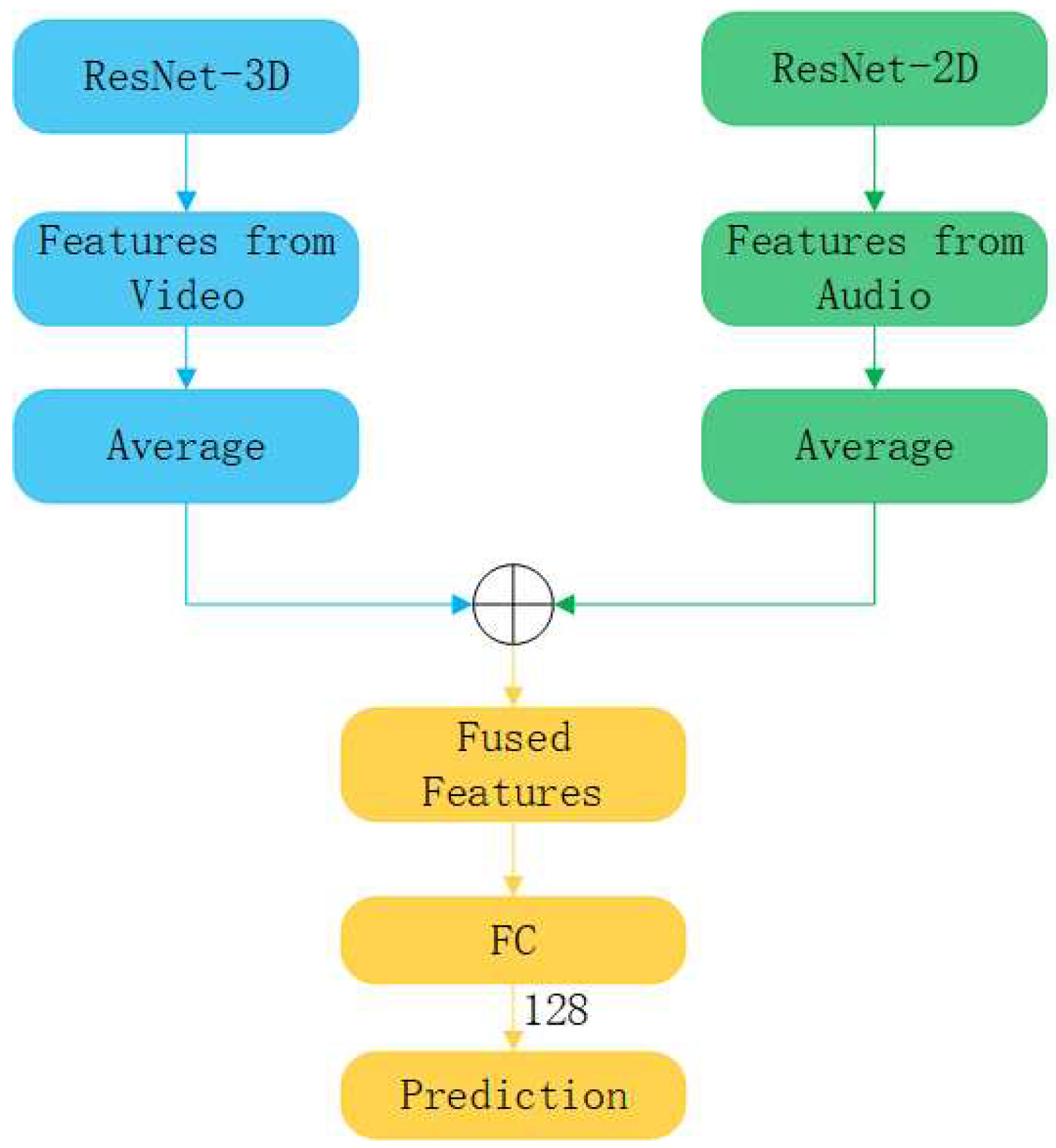

3.2. Feature Extraction and Fusion

| Algorithm 1 Model Initialization Algorithm |
|---|
| Input: the dictionary of model parameters; the dictionary of pre-trained model parameters. |
| Output: the model dictionary after completing the update. |
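Algorithm 1 is given here only by its inputs and output. The following is a minimal sketch of one common way to perform such an update, not necessarily the authors' procedure. It assumes that a pre-trained entry is copied only when its name exists in the model and its shape matches. The helper names `shape` and `init_from_pretrained`, the parameter names, and the nested-list stand-ins for tensors are all hypothetical.

```python
def shape(x):
    """Return the shape of a nested list as a tuple (stand-in for a tensor shape)."""
    dims = []
    while isinstance(x, list):
        dims.append(len(x))
        x = x[0] if x else None
    return tuple(dims)


def init_from_pretrained(model_params, pretrained_params):
    """Return a copy of model_params updated with matching pre-trained entries.

    Assumption: an entry is transferred only if the name is present in the
    model and the shapes agree; everything else keeps its original value.
    """
    updated = dict(model_params)  # do not modify the caller's dictionary
    for name, value in pretrained_params.items():
        if name in updated and shape(value) == shape(updated[name]):
            updated[name] = value
    return updated


# Hypothetical parameter dictionaries for illustration only.
model_params = {
    "conv1.weight": [[0.0, 0.0], [0.0, 0.0]],
    "fc.weight": [[0.0, 0.0, 0.0]],
}
pretrained_params = {
    "conv1.weight": [[1.0, 2.0], [3.0, 4.0]],        # same shape: copied
    "fc.weight": [[9.0, 9.0, 9.0, 9.0, 9.0]],        # different shape: skipped
    "extra.bias": [1.0],                             # not in the model: skipped
}
updated = init_from_pretrained(model_params, pretrained_params)
```

Skipping mismatched entries is what lets a backbone reuse pre-trained convolution weights while a task-specific head of a different size stays at its fresh initialization.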

| Algorithm 2 Feature Fusion Algorithm |
|---|
| Input: the video features extracted by ResNet-3D; the audio features extracted by ResNet-2D. |
| Output: multimodal features. |
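The fusion operation of Algorithm 2 is not spelled out here. As an illustration only, the sketch below assumes the simplest option, concatenating the video and audio feature vectors. The function name `fuse_features` is hypothetical, and the 128-dimensional inputs are taken from the `out = 128` linear layers listed in the architecture tables.

```python
def fuse_features(video_feat, audio_feat):
    """Concatenate a video feature vector and an audio feature vector.

    Assumption: fusion is plain concatenation; the source does not state
    which operation is actually used.
    """
    return list(video_feat) + list(audio_feat)


# Two 128-dimensional vectors, i.e. a 1:1 video-to-audio feature ratio.
video_feat = [0.1] * 128
audio_feat = [0.2] * 128
multimodal = fuse_features(video_feat, audio_feat)
```

Under this assumption the fused vector has 256 entries, with the video part first and the audio part second.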

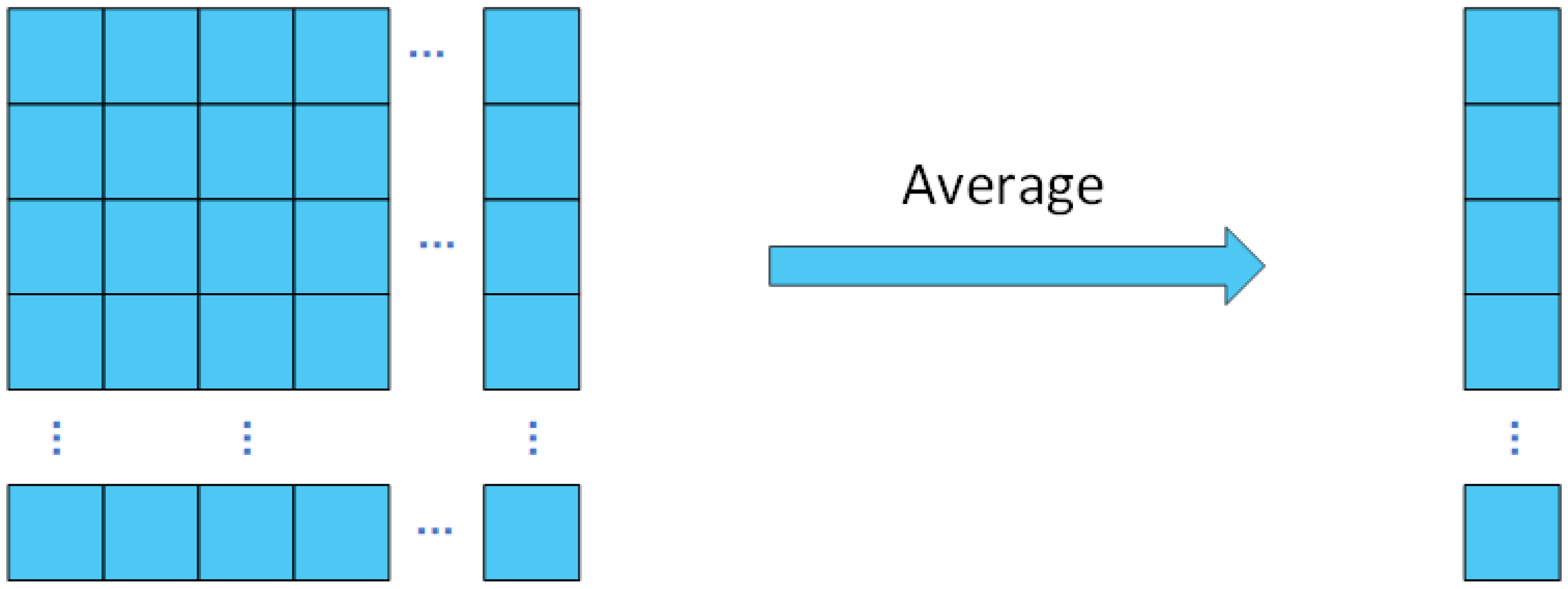

| Algorithm 3 Feature Average Algorithm |
|---|
| Input: the list of features obtained from the network. |
| Output: features after the averaging process. |
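Algorithm 3 takes a list of features and returns their average. A minimal sketch, assuming each feature is a vector of equal length and the average is taken element-wise over the list; the function name `average_features` is hypothetical.

```python
def average_features(feature_list):
    """Element-wise mean of a non-empty list of equal-length feature vectors."""
    if not feature_list:
        raise ValueError("feature_list must not be empty")
    dim = len(feature_list[0])
    if any(len(f) != dim for f in feature_list):
        raise ValueError("all feature vectors must have the same length")
    n = len(feature_list)
    return [sum(f[i] for f in feature_list) / n for i in range(dim)]


# Two 2-dimensional features, e.g. from two clips of the same performance.
averaged = average_features([[1.0, 2.0], [3.0, 6.0]])
```

Averaging keeps the feature dimension fixed regardless of how many features are in the list, so the layers that follow see the same input size for every sample.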

3.3. Performance Evaluation

4. Experiments

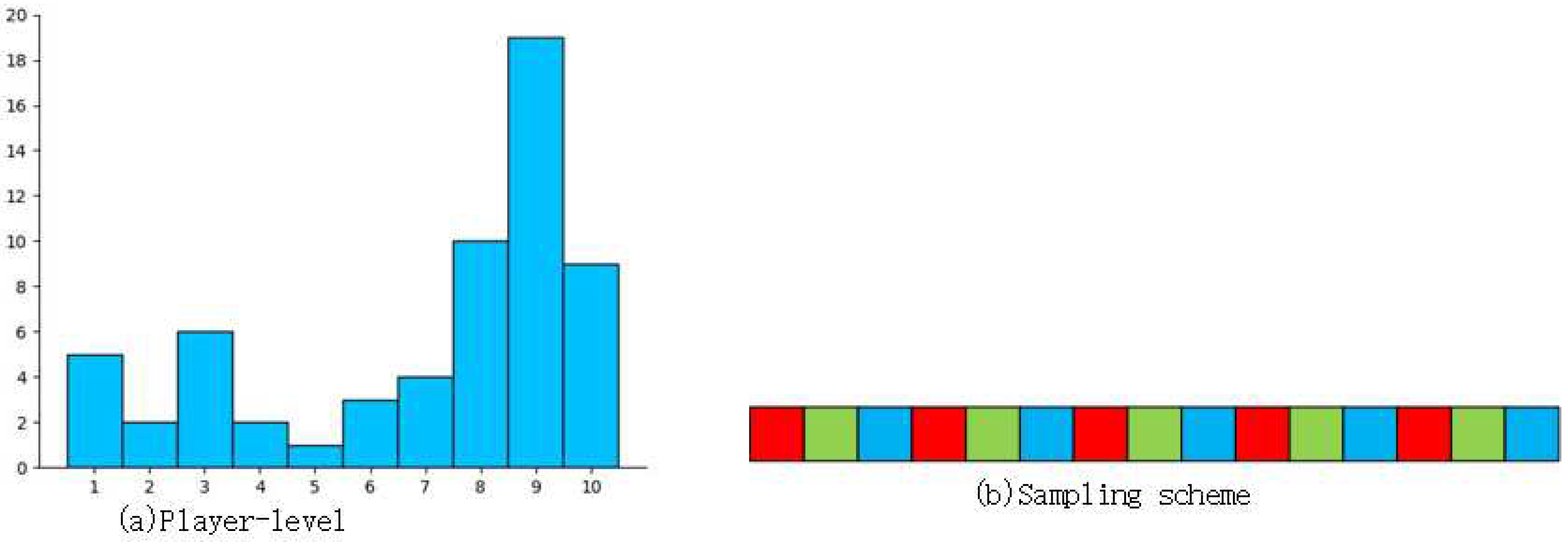

4.1. Multimodal PISA Dataset

| Dataset | Total samples | Training samples | Test samples |
|---|---|---|---|
| PISA Dataset | 992 | 516 | 476 |

4.2. Evaluation Metric
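The results tables report accuracy as a percentage. A minimal sketch of that metric, assuming it is the share of test samples whose predicted class equals the label; the function name `accuracy_percent` is hypothetical. The worked example uses the 476 test samples of the PISA dataset; the count of 337 correct predictions is inferred for illustration, not stated in the source.

```python
def accuracy_percent(predictions, labels):
    """Percentage of positions where the predicted class equals the label."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equal in length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return 100.0 * correct / len(labels)


# Illustrative data: 476 test samples, 337 of them classified correctly.
labels = [0] * 476
predictions = [0] * 337 + [1] * 139
acc = accuracy_percent(predictions, labels)
```

Rounded to two decimals, 337 correct out of 476 gives 70.80, which is consistent with the best accuracy reported in the results tables.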

4.3. Implementation Details

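| Stage | 18-layer | 34-layer | 50-layer |
|---|---|---|---|
| Block1 | Conv: 64, 7×7×7, 2×2×2; Max pool: 3×3×3, 2×2×2 | Conv: 64, 7×7×7, 2×2×2; Max pool: 3×3×3, 2×2×2 | Conv: 64, 7×7×7, 2×2×2; Max pool: 3×3×3, 2×2×2 |
| Block2_x | | | |
| Block3_x | | | |
| Block4_x | | | |
| Block5_x | | | |
| Block6 | Adaptive average pool: 1×1×1 | Adaptive average pool: 1×1×1 | Adaptive average pool: 1×1×1 |
| Block7 | Linear: in = 512, out = 128 | Linear: in = 512, out = 128 | Linear: in = 2048, out = 128 |
| Block8 | Linear: in = 128, out = 10 | Linear: in = 128, out = 10 | Linear: in = 128, out = 10 |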

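| Stage | 18-layer | 34-layer | 50-layer |
|---|---|---|---|
| Block1 | Conv: 64, 7×7, 2×2; Max pool: 3×3, 2×2 | Conv: 64, 7×7, 2×2; Max pool: 3×3, 2×2 | Conv: 64, 7×7, 2×2; Max pool: 3×3, 2×2 |
| Block2_x | | | |
| Block3_x | | | |
| Block4_x | | | |
| Block5_x | | | |
| Block6 | Average pool: 7×7, 1×1 | Average pool: 7×7, 1×1 | Average pool: 7×7, 1×1 |
| Block7 | Linear: in = 512, out = 128 | Linear: in = 512, out = 128 | Linear: in = 2048, out = 128 |
| Block8 | Linear: in = 128, out = 10 | Linear: in = 128, out = 10 | Linear: in = 128, out = 10 |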

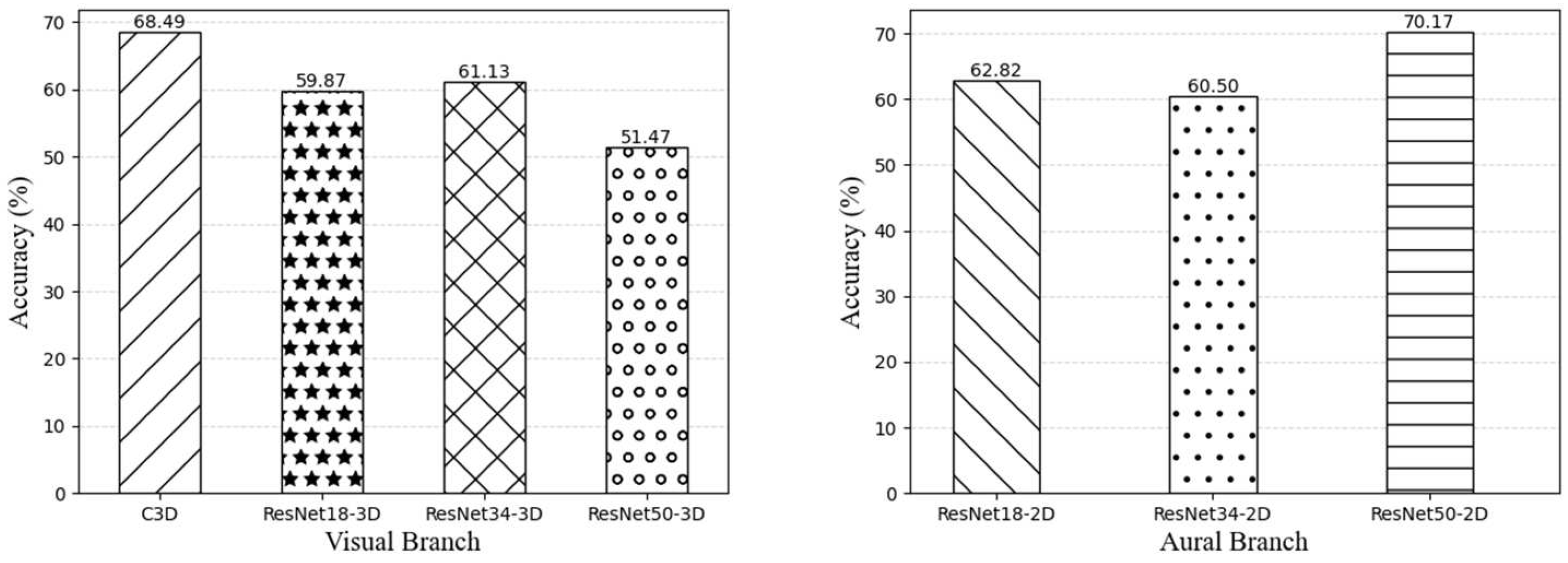

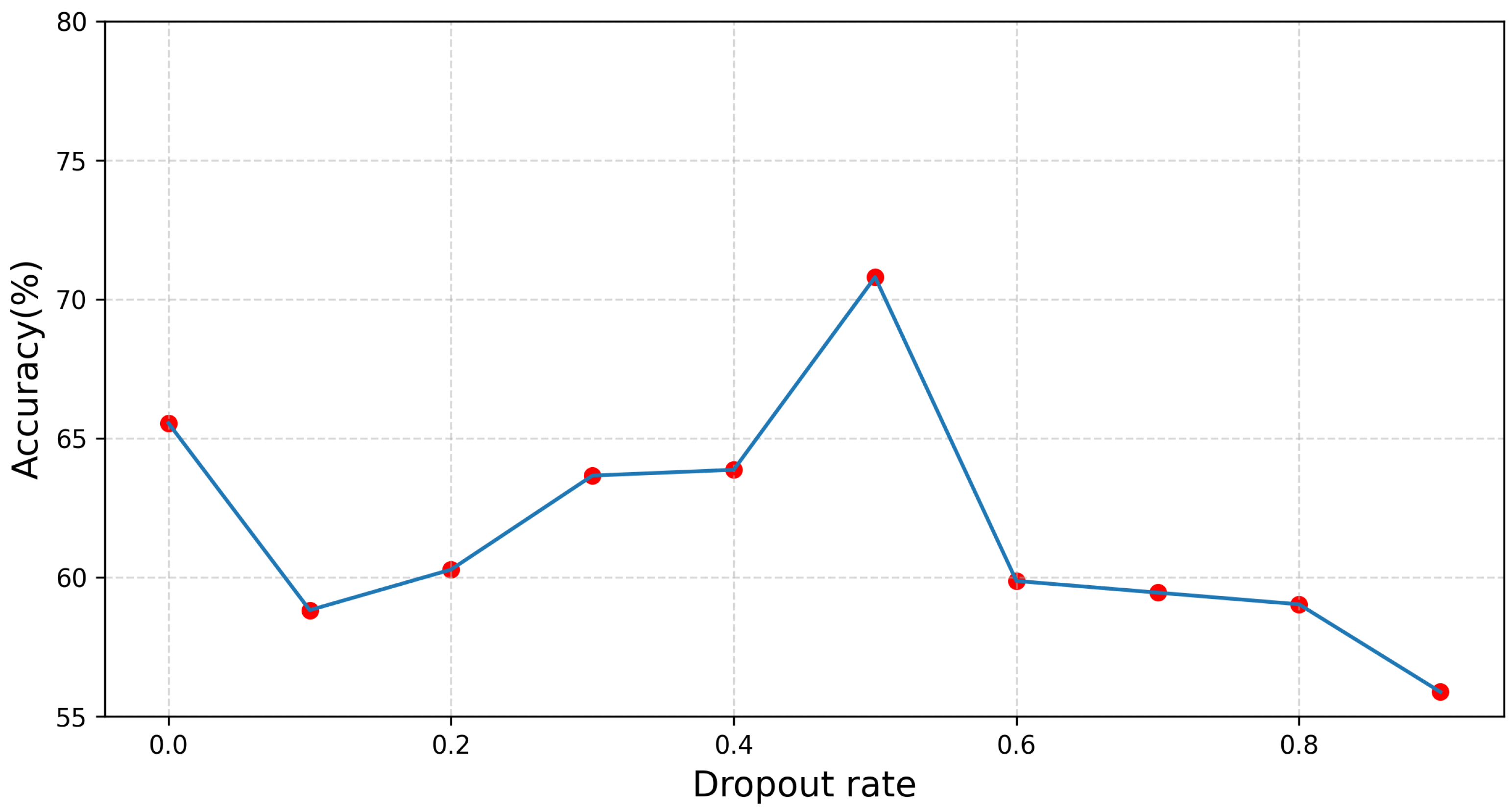

4.4. Results of Experiments

| Model | V : A | Accuracy (%) |
|---|---|---|
| C3D + ResNet18-2D [15] | 8:1 | 68.70 |
| ResNet18-3D + ResNet34-2D | 1:1 | 66.39 |
| ResNet34-3D + ResNet18-2D | 1:1 | 66.81 |
| ResNet34-3D + ResNet34-2D | 1:1 | 65.97 |
| ResNet50-3D + ResNet50-2D | 1:1 | 59.45 |
| ResNet18-3D + ResNet18-2D (Ours) | 1:1 | 70.80 |

| Model | Calculation time (s) |
|---|---|
| C3D + ResNet18-2D | 111.08 |
| ResNet18-3D + ResNet34-2D | 87.91 |
| ResNet18-3D + ResNet50-2D | 96.75 |
| ResNet34-3D + ResNet18-2D | 97.95 |
| ResNet34-3D + ResNet34-2D | 110.33 |
| ResNet34-3D + ResNet50-2D | 118.79 |
| ResNet50-3D + ResNet18-2D | 96.71 |
| ResNet50-3D + ResNet34-2D | 109.79 |
| ResNet50-3D + ResNet50-2D | 119.93 |
| ResNet18-3D + ResNet18-2D (Ours) | 74.02 |
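To put the calculation times in relation to each other, the short sketch below computes the relative time saving of the proposed model against two rows of the table. The times are copied from the table above; the function name `relative_saving` and the dictionary layout are assumptions made for illustration.

```python
# Calculation times in seconds, copied from three rows of the table.
times = {
    "C3D + ResNet18-2D": 111.08,
    "ResNet50-3D + ResNet50-2D": 119.93,
    "ResNet18-3D + ResNet18-2D (Ours)": 74.02,
}


def relative_saving(baseline_s, ours_s):
    """Time saved relative to the baseline, as a percentage of the baseline."""
    return 100.0 * (baseline_s - ours_s) / baseline_s


ours = times["ResNet18-3D + ResNet18-2D (Ours)"]
saving_vs_c3d = relative_saving(times["C3D + ResNet18-2D"], ours)
saving_vs_r50 = relative_saving(times["ResNet50-3D + ResNet50-2D"], ours)
fastest = min(times, key=times.get)

print(f"vs C3D baseline: {saving_vs_c3d:.2f}% less time")
print(f"vs ResNet50 pair: {saving_vs_r50:.2f}% less time")
print(f"fastest of the three: {fastest}")
```

Against the C3D + ResNet18-2D baseline, this works out to roughly a one-third reduction in calculation time.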

| Model | V : A | Accuracy (%) |
|---|---|---|
| C3D + ResNet18-2D | 8:1 | 68.70 |
| ResNet18-3D + ResNet50-2D | 1:4 | 65.55 |
| ResNet34-3D + ResNet50-2D | 1:4 | 67.02 |
| ResNet50-3D + ResNet18-2D | 4:1 | 64.50 |
| ResNet50-3D + ResNet34-2D | 4:1 | 61.34 |
| ResNet18-3D + ResNet18-2D (Ours) | 1:1 | 70.80 |

4.5. Ablation Study

| Setting | Accuracy (%) | Calculation time (s) |
|---|---|---|
| Without Pretrain | 57.98 | 73.99 |
| KM + ImageNet Pretrain (Used) | 70.80 | 74.02 |

5. Conclusions

Author Contributions

Funding

References

1. Chang, X.; Peng, L. Evaluation strategy of the piano performance by the deep learning long short-term memory network. Wireless Communications and Mobile Computing 2022, 2022.

2. Zhang, Y. An Empirical Analysis of Piano Performance Skill Evaluation Based on Big Data. Mobile Information Systems 2022, 2022.

3. Wang, W.; Pan, J.; Yi, H.; Song, Z.; Li, M. Audio-based piano performance evaluation for beginners with convolutional neural network and attention mechanism. IEEE/ACM Transactions on Audio, Speech, and Language Processing 2021, 29, 1119–1133.

4. Hara, K.; Kataoka, H.; Satoh, Y. Can spatiotemporal 3D CNNs retrace the history of 2D CNNs and ImageNet? In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 6546–6555.

5. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 770–778.

6. Seo, C.; Sabanai, M.; Ogata, H.; Ohya, J. Understanding sprinting motion skills using unsupervised learning for stepwise skill improvements of running motion. International Conference on Pattern Recognition Applications and Methods 2019.

7. Li, Z.; Huang, Y.; Cai, M.; Sato, Y. Manipulation-skill Assessment from Videos with Spatial Attention Network. arXiv 2019.

8. Doughty, H.; Mayol-Cuevas, W.W.; Damen, D. The Pros and Cons: Rank-Aware Temporal Attention for Skill Determination in Long Videos. Computer Vision and Pattern Recognition 2019.

9. Lee, J.; Doosti, B.; Gu, Y.; Cartledge, D.; Crandall, D.J.; Raphael, C. Observing Pianist Accuracy and Form with Computer Vision. Workshop on Applications of Computer Vision 2019.

10. Doughty, H.; Damen, D.; Mayol-Cuevas, W.W. Who’s Better? Who’s Best? Pairwise Deep Ranking for Skill Determination. Computer Vision and Pattern Recognition 2018.

11. Wang, W.; Pan, J.; Yi, H.; Song, Z.; Li, M. Audio-Based Piano Performance Evaluation for Beginners With Convolutional Neural Network and Attention Mechanism. IEEE/ACM Transactions on Audio, Speech, and Language Processing 2021, 29, 1119–1133.

12. Phanichraksaphong, V.; Tsai, W.H. Automatic Evaluation of Piano Performances for STEAM Education. Applied Sciences 2021.

13. Liao, Y. Educational Evaluation of Piano Performance by the Deep Learning Neural Network Model. Mobile Information Systems 2022, pp. 1–12.

14. Koepke, A.S.; Wiles, O.; Moses, Y.; Zisserman, A. Sight to Sound: An End-to-End Approach for Visual Piano Transcription. International Conference on Acoustics, Speech, and Signal Processing 2020.

15. Parmar, P.; Reddy, J.; Morris, B. Piano skills assessment. In Proceedings of the 2021 IEEE 23rd International Workshop on Multimedia Signal Processing (MMSP). IEEE, 2021, pp. 1–5.

16. Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A deep convolutional encoder-decoder architecture for image segmentation. IEEE Transactions on Pattern Analysis and Machine Intelligence 2017, 39, 2481–2495.

17. Iglovikov, V.; Shvets, A. TernausNet: U-Net with VGG11 encoder pre-trained on ImageNet for image segmentation. arXiv preprint arXiv:1801.05746 2018.

18. Majkowska, A.; Mittal, S.; Steiner, D.F.; Reicher, J.J.; McKinney, S.M.; Duggan, G.E.; Eswaran, K.; Cameron Chen, P.H.; Liu, Y.; Kalidindi, S.R.; et al. Chest radiograph interpretation with deep learning models: assessment with radiologist-adjudicated reference standards and population-adjusted evaluation. Radiology 2020, 294, 421–431.

19. Gulshan, V.; Peng, L.; Coram, M.; Stumpe, M.C.; Wu, D.; Narayanaswamy, A.; Venugopalan, S.; Widner, K.; Madams, T.; Cuadros, J.; et al. Development and validation of a deep learning algorithm for detection of diabetic retinopathy in retinal fundus photographs. JAMA 2016, 316, 2402–2410.

20. Carreira, J.; Zisserman, A. Quo vadis, action recognition? A new model and the Kinetics dataset. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 6299–6308.

21. Tran, D.; Bourdev, L.; Fergus, R.; Torresani, L.; Paluri, M. Learning spatiotemporal features with 3D convolutional networks. In Proceedings of the IEEE International Conference on Computer Vision, 2015, pp. 4489–4497.

22. O’Shaughnessy, D. Speech Communication: Human and Machine; Addison-Wesley series in electrical engineering, Addison-Wesley Publishing Company, 1987.

23. Lee, J.; Park, J.; Kim, K.L.; Nam, J. Sample-level deep convolutional neural networks for music auto-tagging using raw waveforms. arXiv preprint arXiv:1703.01789 2017.

24. Zhu, Z.; Engel, J.H.; Hannun, A. Learning multiscale features directly from waveforms. arXiv preprint arXiv:1603.09509 2016.

25. Choi, K.; Fazekas, G.; Sandler, M. Automatic tagging using deep convolutional neural networks. arXiv preprint arXiv:1606.00298 2016.

26. Nasrullah, Z.; Zhao, Y. Music artist classification with convolutional recurrent neural networks. In Proceedings of the 2019 International Joint Conference on Neural Networks (IJCNN). IEEE, 2019, pp. 1–8.

27. Paszke, A.; Gross, S.; Massa, F.; Lerer, A.; Bradbury, J.; Chanan, G.; Killeen, T.; Lin, Z.; Gimelshein, N.; Antiga, L.; et al. PyTorch: An Imperative Style, High-Performance Deep Learning Library. Neural Information Processing Systems 2019.

28. Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. arXiv preprint arXiv:1412.6980 2014.

29. Carreira, J.; Noland, E.; Hillier, C.; Zisserman, A. A short note on the Kinetics-700 human action dataset. arXiv preprint arXiv:1907.06987 2019.

30. Abu-El-Haija, S.; Kothari, N.; Lee, J.; Natsev, P.; Toderici, G.; Varadarajan, B.; Vijayanarasimhan, S. YouTube-8M: A large-scale video classification benchmark. arXiv preprint arXiv:1609.08675 2016.

31. Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. ImageNet: A large-scale hierarchical image database. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2009, pp. 248–255.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).